How to Improve Critical Thinking Skills: A Practical Guide for IT Professionals

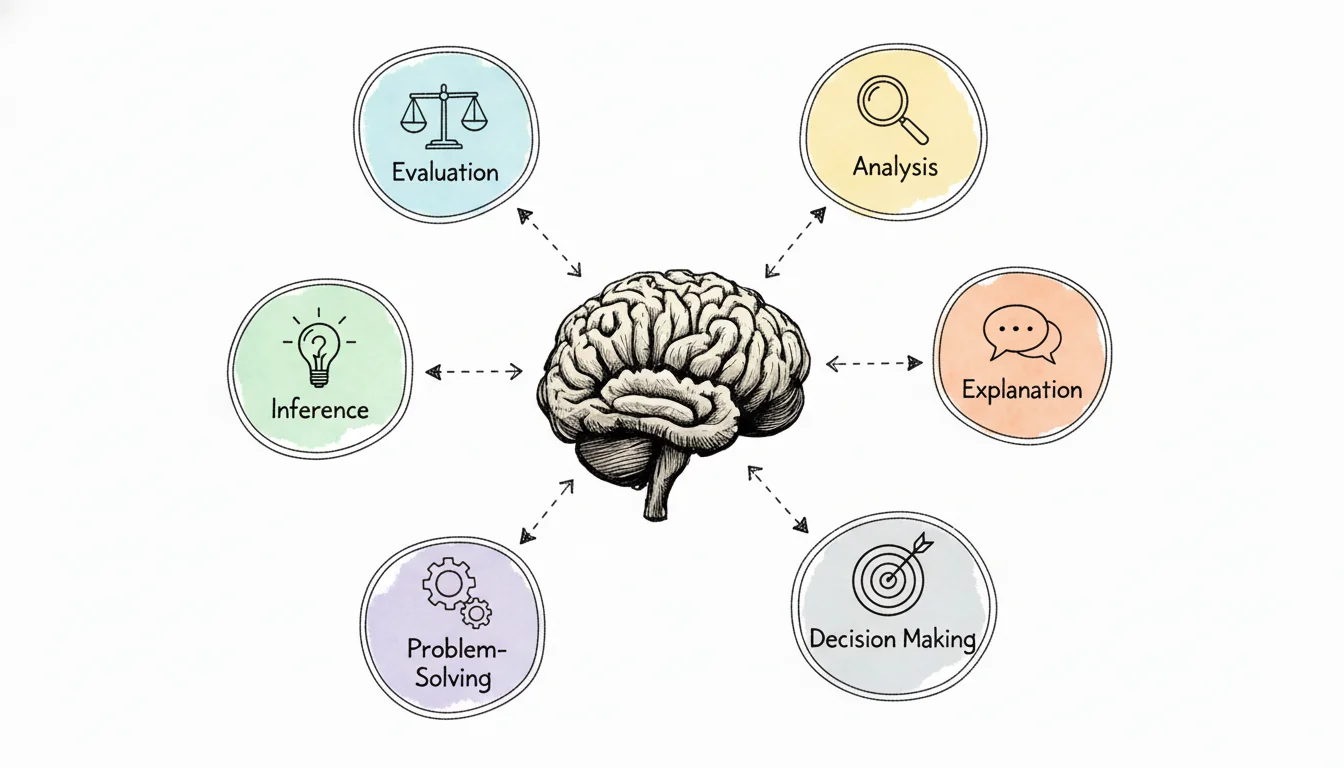

Critical thinking involves questioning assumptions, evaluating arguments, and reaching conclusions based on reason rather than accepting information at face value. For IT professionals, it is a deliberate process necessary for troubleshooting systems, designing resilient architectures, securing networks, and mastering certifications like AWS Solutions Architect, PMP, or ITIL. You must break down problems, identify biases, and apply structure to your thoughts. At MindMesh Academy, we view this analytical ability as the foundation of technical expertise. A systematic approach keeps you from merely reacting to technical issues; instead, you identify the underlying causes and develop effective solutions for your organization.

Why Is Critical Thinking So Hard To Develop?

Caption: Deep thought is often a deliberate act, especially when navigating complex IT challenges.

Most people agree that critical thinking is vital, especially in IT where stakes are high and solutions are rarely straightforward. So why do so many of us struggle to apply it consistently? The obstacle isn't a lack of intelligence; it is a battle against mental habits that prioritize speed and simplicity over accuracy and depth. The fast pace of IT projects and emergency incident response often makes these habits worse.

Human biology favors efficiency. To manage the constant stream of data and decisions, our brains rely on mental shortcuts, known as heuristics, to reach fast conclusions. While these shortcuts help with routine tasks, they come at the cost of analytical depth and produce predictable errors known as cognitive biases. The influence of these biases is one major reason critical thinking feels unnatural. Consider confirmation bias, the habit of favoring information that supports existing beliefs. In IT, this might look like sticking with a familiar tech stack despite its flaws or ignoring data that suggests a favored solution is failing.

We’re Drowning in Information

Modern technical environments bombard us with information. We face a relentless stream of vendor whitepapers, tech news, and online tutorials for certification exam preparation. This volume encourages shallow processing. It is easier to react with a gut feeling than to evaluate logic. Professionals frequently scroll, skim, and share content without stopping to verify the source or the assumptions behind the data.

This environment rewards the brain for taking the easy path. It limits the patience needed for the detailed analysis required when comparing conflicting architectural designs or interpreting complex performance metrics. Thinking with depth takes significant effort. Many corporate cultures value quick answers over well-reasoned ones, resulting in rushed decisions and poor long-term outcomes for the infrastructure.

Our Education System Has a Gap

Much of the struggle stems from traditional education models. Many certification programs focus on memorizing specific facts to select a single "correct" answer. They teach people what to think instead of how to think. While you need factual recall for an IT certification, real-world troubleshooting and system design require a much higher level of application.

This approach leaves many IT professionals ill-equipped for the complex, ambiguous problems found in senior roles. These positions rarely offer textbook solutions; they require balancing trade-offs in environments where there is no single right answer.

The numbers confirm this difficulty. A 2020 survey from the Reboot Foundation found that while 94% of respondents rate critical thinking as "extremely" or "very" important, 86% believe the general public lacks this skill. In addition, 60% of respondents reported that they never formally studied the subject in school. These statistics help explain why the skill feels underdeveloped in professional settings. Read more on the state of critical thinking.

The truth is, developing critical thinking is less about raw intelligence and more about adopting a structured process for analyzing the world. It is an active skill that requires consistent, conscious practice.

Recognizing these barriers is the first step toward overcoming them. While the struggle is common, it is solvable with the right frameworks—which the rest of this guide will provide.

Start by Questioning Everything—Especially Yourself

If you want to improve your critical thinking, you must learn to challenge what you think you know. Human brains are built for shortcuts. These shortcuts, which surface as assumptions and biases, help us manage daily life, but they often act as a barrier to objective thinking in technical environments.

The process begins by admitting that your immediate reaction might be wrong. When you receive a new project, see a security alert, or find an odd data point in a system log, it is natural to jump to a conclusion based on what happened last time. This is a survival mechanism to avoid feeling overwhelmed, but it is also where critical inquiry stops.

Get Familiar With Your Own Biases

Every person, from junior help desk staff to senior engineers, operates with cognitive biases. These are not character flaws. They are predictable bugs in our internal processing systems. To think better, you have to identify these patterns so you can correct them before making a major technical choice or answering a tricky question on a certification exam.

You have likely encountered these specific biases in your workplace:

- Confirmation Bias: You might have a preference for a specific cloud provider (e.g., AWS) or a certain programming language. When you research options for a new deployment or study for a multi-cloud exam, you might only notice articles that support your favorite tool. You ignore negative reviews or better alternatives because they do not fit your existing narrative.

- Anchoring Effect: Imagine a vendor tells you a project will take 6 months. Later, you discover technical obstacles that mean the work will actually take 9 months. Because of that initial "anchor" of 6 months, you find it very hard to adjust your expectations. This leads to missed deadlines and poor planning because you are mentally stuck on the first number you heard.

- Availability Heuristic: Suppose you read news about a massive security breach at a competitor. You might start worrying about that specific type of attack constantly, even if it is unlikely to happen to your firm. You might even shift your study focus for a CISM or CISSP exam toward that one specific threat while ignoring more common risks that are more likely to appear on the test.

Being honest about these patterns is the hardest part. It requires you to admit that your first impression might be based on thin evidence. It takes actual effort to realize that your brain is trying to save energy by skipping the hard work of analysis.

Reflection Prompt: Which of these cognitive biases have you noticed in your own work? Think about a time a project or study session went wrong because you stuck to an assumption. What was the result?

Practical Ways to Break Down Your Assumptions

Knowing you have biases is one thing, but you need active ways to break them. You need a system to challenge your own logic.

One effective method is playing devil’s advocate against yourself. Before you commit to a specific architecture, a new software purchase, or a study plan, stop. Spend ten minutes building the strongest possible argument for the opposite choice. If you were your own rival, how would you tear your plan apart? This exercise forces you to see the gaps you were trying to ignore and makes your final decision much stronger.

You can also use the "Five Whys" technique, which comes from Toyota. You find the real source of a problem by asking "Why?" five times. This approach works well for IT troubleshooting and root cause analysis (RCA), which is a common practice in ITIL.

Consider a situation where an application is running slowly:

The Assumption: "Our new microservice deployment is slow because of inefficient code."

- Why is the microservice deployment slow? "Because API calls are timing out under load."

- Why are API calls timing out? "Because the database is overwhelmed during peak hours."

- Why is the database overwhelmed? "Because a batch job runs concurrently with user traffic, consuming all available I/O."

- Why does the batch job run during peak hours? "Because it was scheduled to minimize downtime for the previous monolithic application, a schedule that was never updated for the microservices."

- Why was the schedule not updated? "Because the deployment team wasn't fully aware of the batch job's impact on the shared database resource and no cross-functional review occurred."

The problem was not the new code. It was a failure in communication and legacy scheduling. This structured approach prevents you from wasting time fixing things that are not broken. It is a vital part of working in a DevOps or SRE environment. By refusing to accept the first answer, you find the actual issue.
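The chain above can also be captured as a small data structure, which makes it easy to attach to an incident ticket or post-mortem. The following is a minimal sketch, not a standard tool: the `FiveWhys` class and its method names are hypothetical, and it assumes Python 3.9 or later for the built-in generic type hints.

```python
from dataclasses import dataclass, field


@dataclass
class FiveWhys:
    """Records a chain of why/because pairs for one problem statement (hypothetical helper)."""
    problem: str
    steps: list[tuple[str, str]] = field(default_factory=list)

    def ask(self, question: str, answer: str) -> "FiveWhys":
        # Each answer becomes the subject of the next question.
        self.steps.append((question, answer))
        return self

    def root_cause(self) -> str:
        # The last recorded answer is the deepest cause found so far.
        if not self.steps:
            raise ValueError("no questions asked yet")
        return self.steps[-1][1]

    def is_complete(self, depth: int = 5) -> bool:
        # True once the chain has reached the requested number of whys.
        return len(self.steps) >= depth


analysis = (
    FiveWhys("New microservice deployment is slow")
    .ask("Why is the deployment slow?", "API calls time out under load")
    .ask("Why do API calls time out?", "The database is overwhelmed at peak hours")
    .ask("Why is the database overwhelmed?", "A batch job runs alongside user traffic")
    .ask("Why does the batch job run at peak?", "Its schedule dates from the monolith")
    .ask("Why was the schedule not updated?", "No cross-functional review occurred")
)

for number, (question, answer) in enumerate(analysis.steps, start=1):
    print(f"{number}. {question} -> {answer}")
print("Root cause:", analysis.root_cause())
```

Keeping the question and the answer together preserves the reasoning trail, so a reviewer can challenge any single link in the chain rather than only the final conclusion.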

Adopt Frameworks for Structured Thinking

Challenging your own assumptions is a vital first step. However, what comes next in the process? You need to provide your thinking with a reliable structure. The most effective critical thinkers do not just rely on raw intelligence; they use mental models to filter out noise and focus on what matters. These frameworks act as blueprints for handling messy, complicated problems. They help transform a jumble of data into a clear, repeatable process. This approach is very similar to how established architectural methodologies guide large-scale software deployments or infrastructure upgrades.

Without a structured framework, IT professionals often get lost in technical details. Worse, they might follow a gut feeling that lacks evidence, leading to expensive rework, missed project deadlines, or technical debt. A solid mental model forces you to maintain discipline. It ensures you examine an issue from every critical perspective before you commit to a specific path of action. By following a set process, you reduce the risk of letting personal bias or "shiny object syndrome" dictate your technical roadmap.

The RED Model: Your Go-To for Clearer Analysis

One of the most practical frameworks for technical professionals is the RED Model. It consists of a simple but powerful trio of actions: Recognize Assumptions, Evaluate Arguments, and Draw Conclusions. I have used this specific model to deconstruct many different problems, from choosing between cloud providers to diagnosing a major system outage that is impacting production users.

Consider a common scenario in a corporate environment: evaluating a new Security Information and Event Management (SIEM) system. It is easy to get caught up in the feature list provided by the vendor, but the RED model forces a more objective view.

- Recognize Assumptions: Start by identifying what you and your team are taking for granted. You must get these hidden beliefs out in the open. You might assume that "This SIEM will automatically detect all advanced persistent threats (APTs)." You might also believe that "Every analyst on the team will find its query language easy to learn," or that "The integration with our current identity management system will work without any custom coding." None of these are established facts yet; they are assumptions that need validation. If you assume the integration is simple and it turns out to require 200 hours of custom development, your project budget will be ruined.

- Evaluate Arguments: This is the phase where you play the role of a technical detective. You must examine the evidence behind every claim. Where did the statement that the tool "detects all APTs" come from? Was it a sales slide deck, or did it come from an independent technical report from a firm like Gartner or Forrester? You need to find proof, data points, and expert opinions that are independent of marketing materials. If a vendor claims their UI is easy to use, check if the security team has used similar tools. Look for objective evidence, such as the time it takes for a junior analyst to run a basic query during a trial period.

- Draw Conclusions: Once you have gathered and weighed the evidence, you can reach a reasoned decision. Instead of a simple "yes, we should buy this," your conclusion becomes a detailed business case. It might sound like this: "The SIEM shows potential for improving threat detection, but we must run a proof-of-concept (PoC) with a pilot team for two weeks. This will allow us to test our assumptions regarding the identity provider integration and determine the actual learning curve for the query language. We also need to calculate the specific cost of creating custom rules for our unique legacy applications."

This process shifts your decision-making from a subjective "I like this tool" to an objective strategy backed by a structured analytical approach. You move from reacting to data to genuinely analyzing it. This shift is also central to improving your analytical abilities in general. You can find more detail on this in our guide on how to develop problem-solving skills.
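One way to make the three RED steps concrete is an assumption register that refuses to treat vendor material as validation. This is a rough sketch under stated assumptions: the `Assumption` class, the `independent` flag, and the `draw_conclusion` function are illustrative names, not part of any published RED Model tooling, and the type hints assume Python 3.9 or later.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Assumption:
    claim: str
    evidence: Optional[str] = None   # where the supporting proof came from, if any
    independent: bool = False        # True only if the evidence is not vendor material

    @property
    def validated(self) -> bool:
        # Evaluate Arguments: a claim counts as validated only with independent evidence.
        return self.evidence is not None and self.independent


def draw_conclusion(assumptions: list[Assumption]) -> str:
    # Draw Conclusions: anything still unvalidated becomes a proof-of-concept test item.
    open_items = [a.claim for a in assumptions if not a.validated]
    if not open_items:
        return "Proceed: every assumption has independent evidence."
    return "Run a proof of concept to test: " + "; ".join(open_items)


# Recognize Assumptions: the three beliefs from the SIEM scenario.
siem_assumptions = [
    Assumption("Detects all APTs", evidence="vendor slide deck", independent=False),
    Assumption("Query language is easy to learn"),
    Assumption("Identity integration needs no custom code"),
]

print(draw_conclusion(siem_assumptions))
```

In this example all three claims remain open, so the output is a list of items to test during the pilot rather than a purchase decision.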

The infographic below offers several methods for putting this type of structured questioning into daily practice.

Caption: Actively challenging your thoughts and seeking evidence are key to sharpening critical thinking.

As the graphic illustrates, spotting bias, playing devil's advocate, and asking "why" repeatedly are the active components in seeing your own assumptions clearly. This disciplined questioning prevents you from accepting information at face value.

Finding the Right Framework for the Job

The RED Model works well for day-to-day decisions and evaluating new proposals, but different challenges often require different tools. To help you choose the right approach for your situation, I have put together a comparison of several popular frameworks. Each of these has specific strengths for those working in technical fields.

Comparing Critical Thinking Frameworks

This table breaks down three common models to help you select the one that fits your current IT challenge.

| Framework | Core Purpose | Best For | Key Question |
|---|---|---|---|
| The RED Model | Deconstructing arguments and assumptions to reach a reasoned decision. | Vetting new tools (such as cloud services or software), evaluating project proposals, or analyzing a single problem statement in a certification exam. | "What am I assuming to be true, and what is the actual evidence for it?" |
| First Principles Thinking | Breaking a complex problem down to its most basic, fundamental truths. | Radical innovation, rethinking a legacy system architecture from scratch, optimizing a core algorithm, or solving a brand-new engineering problem. | "What do I know is absolutely true, regardless of how we have done things in the past?" |
| The 5 Whys | Drilling down through layers of symptoms to find the root cause of a failure. | Troubleshooting bugs, diagnosing process failures in a CI/CD pipeline, understanding recurring incidents, or performing post-mortems for major outages. | "Why did this specific failure happen? And why did that underlying component fail?" |

Selecting the correct framework is a major part of the battle. If you are dealing with a recurring bug in a database, the 5 Whys will likely serve you better than First Principles Thinking. However, if you are designing a brand-new cloud architecture to replace a 20-year-old mainframe, First Principles will help you avoid carrying over old, inefficient habits. Use this table as a starting point to match the model to the specific problem. You will find that your thinking becomes sharper and more effective immediately. This allows you to handle complex technical challenges and certification questions with much more confidence.

Know Your Circle of Competence

There is another mental model that I use frequently, and it is especially relevant in the specialized world of technology: the Circle of Competence. This concept was popularized by investors Warren Buffett and Charlie Munger. The core idea is very simple. Every person has specific areas where they possess genuine, deep expertise. Surrounding that small circle are vast areas where they know very little, even if they have some general familiarity. Critical thinking requires you to know, with total honesty, where your expertise ends.

The most dangerous mistakes happen when we operate outside our circle of competence but believe we are still safely inside it. This is a common cause of failure in IT projects. For example, a senior network engineer might try to design a complex application security policy without consulting a security architect. Similarly, a developer might attempt to optimize database indexing and storage parameters without involving a DBA.

Consider a world-class AWS Solutions Architect. This person has a massive circle of competence regarding the design of scalable cloud solutions using S3, EC2, and Lambda. However, that high-level expertise does not mean they have deep knowledge of Enterprise Resource Planning (ERP) system integrations or the mathematics behind complex data science algorithms. The smart move is recognizing when you have reached your limit. At that point, you should bring in someone whose circle of competence covers that specific area. Alternatively, you might decide to expand your own circle by studying for a new certification.

This type of disciplined thinking is more than just a "nice-to-have" skill. A long-term study conducted by the Council for Aid to Education revealed a concerning trend. While most college graduates show some improvement in their critical thinking abilities, nearly half of them remain at the lowest proficiency levels. This data suggests that a degree alone is not enough to master these skills. To be successful in the technical world, where the stakes are high and the systems are complex, you need intentional and structured practice.

Frameworks like the RED Model and the Circle of Competence provide you with that necessary structure. They offer the focused practice required to build the mental muscles needed for better thinking and more informed decision-making throughout your career. By consistently applying these models, you ensure that your technical choices are based on logic and evidence rather than habit or unchecked assumptions.

Become an Active Information Consumer

Caption: Actively scrutinizing digital information is a vital skill for IT professionals navigating a sea of data and vendor claims.

Modern technical work involves managing a constant stream of information. You likely spend your day scanning vendor whitepapers, tech news sites, online forums, certification study guides, and troubleshooting blog posts. Most people fall into the trap of being a passive consumer. They scroll through feeds and absorb whatever the algorithm prioritizes without much thought. To improve your critical thinking, you must change how you interact with data. You need to become an active information consumer. This means you intentionally question, verify, and analyze every piece of technical advice or news you encounter, from a sudden security vulnerability report to a bold new architectural recommendation.

This change does not require you to spend hours fact-checking every single social media post. Instead, you need a reliable system to use when the information actually carries weight. You should use this system when you are evaluating a new tool for your stack, fixing a critical system failure, or researching complex topics for an Azure or PMP certification. One effective framework for this is the SIFT method. It provides a four-step process for vetting online information quickly to keep you from getting stuck in a cycle of misinformation.

Master the SIFT Method

Rather than getting distracted by a single questionable article or a lone forum post, the SIFT method encourages you to use the entire web to get a clearer picture of the truth. It is a practical way to cut through marketing noise and find the actual facts. This is particularly helpful when you need to distinguish between high-quality certification study materials and misleading "brain dumps" that could jeopardize your exam performance.

Here is how you can apply the process:

- Stop: Pause the moment you encounter a claim. Before you read a full article, accept a vendor's marketing promises, or share a link with your team, ask yourself if you recognize the website, author, or the publisher. Check your initial emotional reaction. If a headline makes you feel intense excitement or sudden outrage, it is usually a signal that the content was designed to provoke you rather than inform you. Use that feeling as a cue to slow down and apply more scrutiny.

- Investigate the Source: Avoid taking a website's "About Us" page at face value, as these are often written for marketing purposes. Open a new browser tab and conduct a quick search on the publication or the author. Determine if the source is a respected tech news outlet, a recognized industry analyst, a biased vendor-sponsored blog, or a personal site with no history of technical accuracy. See what other established experts or organizations say about their work before you trust their conclusions.

- Find Better Coverage: Look for additional reports on the same technical topic from different trusted sources or established technical communities. If you read a claim about a new AI feature in a cloud platform, checking multiple sources helps you decide if the claim is an outlier or if it represents a consensus among technical experts. Cross-referencing allows you to see the "average" truth rather than being swayed by one person's specific bias or misunderstanding.

- Trace Claims to the Original Context: When an article cites a specific study, quotes a developer, or refers to official documentation like an RFC or a cloud provider's technical whitepaper, you should find that original document. Secondary sources often simplify complex technical details or misinterpret the intent of the primary source. Seeing the original context is the only way to confirm technical specifications and ensure you have the correct information for a project or a certification exam answer.

Using this process transforms you from a passive reader into an active investigator. You will develop a mental filter that helps you identify credible technical information and discard low-quality content. This saves time and prevents you from making architectural or security mistakes based on bad data.
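If you want to keep yourself honest about which SIFT steps you actually completed for a given claim, a tiny checklist structure is enough. The sketch below is illustrative only: the `SiftReview` class, the step identifiers, and the example claim are all hypothetical, and the type hints assume Python 3.9 or later.

```python
from dataclasses import dataclass, field

# The four SIFT steps, in the order the method prescribes.
SIFT_STEPS = ("stop", "investigate_source", "find_better_coverage", "trace_to_original")


@dataclass
class SiftReview:
    claim: str
    notes: dict[str, str] = field(default_factory=dict)

    def record(self, step: str, note: str) -> None:
        # Reject anything that is not one of the four SIFT steps.
        if step not in SIFT_STEPS:
            raise ValueError(f"unknown SIFT step: {step}")
        self.notes[step] = note

    def remaining(self) -> list[str]:
        # Steps are returned in SIFT order so the next action is always first.
        return [step for step in SIFT_STEPS if step not in self.notes]

    def is_vetted(self) -> bool:
        # A claim is vetted only when every step has a recorded note.
        return not self.remaining()


# Hypothetical claim, used purely for illustration.
review = SiftReview("New cloud AI feature cuts inference cost in half")
review.record("stop", "Unfamiliar site; headline is written to excite")
review.record("investigate_source", "Vendor-sponsored blog")

print(review.remaining())   # the steps still to do
print(review.is_vetted())   # False until all four steps are recorded
```

The point of the structure is the `remaining()` list: a claim that has only been through the first two steps should not yet influence an architectural or security decision.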

Critical thinking is not just about identifying fake news. It is about evaluating the strength of any argument, whether it appears in a business report, a marketing pitch for a new tool, or an official certification study guide.

Go Deeper Than Surface-Level Fact-Checking

Active consumption goes beyond basic verification. You must also evaluate how an argument is built. A major part of this involves learning to evaluate sources for research by identifying potential biases and the credibility of the writer. This skill is vital when you have to choose between two conflicting "best practices" for a new technology.

You must also watch for common logical fallacies that appear in technical reporting. For example, a report might claim that a new security patching tool led to a 20% jump in system uptime because it was installed right before the improvement occurred. Is that definitive proof that the tool worked? Or was the increase in uptime caused by a concurrent server upgrade, a decrease in user traffic, or a separate database optimization? The report might suggest the tool was the primary cause, but without evidence from a controlled test or A/B comparison, it is only a correlation. Mistaking correlation for causation is a frequent error in IT incident reviews and performance analysis.
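A short numerical sketch shows how a concurrent change can manufacture this kind of apparent effect. All figures below are invented for illustration, and the record layout is an assumption. The data is constructed so that the patch tool has no effect on uptime, while the server upgrade adds about four points and usually happens in the same weeks as the tool rollout.

```python
from statistics import mean

# Hypothetical weekly records: (patch_tool_installed, server_upgraded, uptime_percent)
weeks = [
    (True, True, 99.0), (True, True, 99.5), (True, True, 98.5), (True, True, 99.0),
    (True, False, 95.0),
    (False, False, 95.0), (False, False, 95.5), (False, False, 94.5), (False, False, 95.0),
    (False, True, 99.0),
]


def uptime_gap(records):
    """Mean uptime with the patch tool minus mean uptime without it."""
    with_tool = [u for patched, _, u in records if patched]
    without_tool = [u for patched, _, u in records if not patched]
    return mean(with_tool) - mean(without_tool)


# Naive comparison: ignores the server upgrade entirely.
naive_gap = uptime_gap(weeks)

# Stratified comparison: hold the upgrade constant and compare like with like.
gap_with_upgrade = uptime_gap([w for w in weeks if w[1]])
gap_without_upgrade = uptime_gap([w for w in weeks if not w[1]])

print(f"Naive gap: {naive_gap:.1f} points")
print(f"Gap among upgraded weeks: {gap_with_upgrade:.1f} points")
print(f"Gap among non-upgraded weeks: {gap_without_upgrade:.1f} points")
```

With this data the naive comparison credits the tool with roughly 2.4 points of uptime, yet the gap disappears once weeks are grouped by whether the server upgrade was in place. Stratifying like this is not a substitute for a controlled test, but it is a quick way to check whether a second change explains the improvement.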

Staying organized is the final piece of the puzzle. As you analyze different reports, keeping clear notes is the best way to synthesize what you have learned for a project or for exam preparation. If you need a more systematic approach, our guide on effective note-taking methods for tech certs in 2025 provides techniques to help you capture and connect different technical insights.

Here is a quick exercise: The next time you see an article making a major claim about a new technology or an industry trend, apply the SIFT method. Time yourself during the process. See how long it takes to find the primary source for a statistic. Check if that original source actually supports the article's conclusion or if it has been twisted to fit a specific narrative. Practicing this regularly builds the mental strength required for high-level critical thinking.

Build the Habits of a Great Thinker

*Caption: Watch this video to deepen your understanding of critical thinking's practical applications.*

Frameworks and specific logic models offer a starting point, but high-level critical thinking is more of a consistent mindset than a toolset. It is a way of looking at your environment and your work. This mindset relies on a specific set of habits that dictate how you manage difficult problems or interpret fresh data. The most effective technical professionals I have encountered in IT do not just excel at logical deconstruction. They operate with two primary traits: constant curiosity and significant intellectual humility.

These habits are necessary because analytical skills are not something you can absorb through proximity alone. Research supports this reality. One major meta-analysis found that while many university students show growth in their thinking abilities, a significant one-third of them show no improvement at all during their studies. This figure highlights the risk of passive learning. To grow, you must be intentional about exercising these mental muscles. This is especially true in technical roles where systems change almost every year. Examine the specific data points in this full critical thinking research.

Cultivate Insatiable Curiosity

Curiosity serves as the motor for better thinking. It is the internal drive that forces you to ask why a system behaves in a certain way. Instead of accepting that a reboot fixed a problem, a curious thinker wants to know why the microservice hit a specific memory limit or why the error logs showed a particular timeout pattern. Effective technical problem-solvers usually keep learning even when they are off the clock. They look for information that seems unrelated to their daily tasks, knowing that broad knowledge often leads to creative solutions for difficult technical issues.

You can build this habit through several specific practices:

- Read Outside Your IT Field: If you work in cybersecurity, look into behavioral economics or psychology. Understanding why people take risks with their passwords is just as useful as understanding the encryption they are bypassing. If you work in DevOps, study industrial design or logistics. Seeing how physical factories manage flow can help you see your deployment pipelines from a new perspective. This creates a versatile mental toolkit and reveals connections that others often miss.

- Ask More Open-Ended Questions: Avoid questions that end in a simple yes or no. Instead of asking if a product launch was a success, ask what the most surprising results were. In a post-mortem, do not just ask if the service is back up. Ask what the incident revealed about the way the team handles pressure or what systemic weaknesses the bug exposed. These questions force people to think harder and provide more context, which leads to a better understanding of the root cause rather than just the symptoms.

- Seek Out Opposing Views: It is easy to look for information that confirms what you already think. Break this habit by looking for well-reasoned arguments that contradict your favorite architectural patterns or programming languages. Join peer reviews or architecture boards where colleagues are encouraged to find flaws in your logic. You do not have to change your mind every time, but you should understand the opposing side well enough to explain it. This makes your final decisions more defensible and your solutions more resilient.

Embrace Intellectual Humility

The second habit, intellectual humility, is the willingness to admit when you are wrong or when you do not have enough information to make a call. It is the confidence to say "I don't know" without worrying about your reputation. In an IT environment, this is a major advantage. It allows for faster learning and better collaboration. When you are willing to be wrong, you are also willing to find the actual best solution, even if that solution came from a junior team member or a competing department.

Innovators don't succeed because they are always right. They succeed because they are faster to recognize when they are wrong. Humility allows you to pivot without ego getting in the way, which is critical for agile development and rapid response in IT.

This humility changes how you process new information. When you are honest about the limits of your knowledge, you can focus your energy on what you still need to master. This is vital when you are preparing for a new certification or trying to keep up with a new cloud service. We discuss this further in our article on how to improve memory retention when learning complex technical subjects. A humble approach ensures you are building real knowledge that lasts, rather than just memorizing facts to get through a single exam.

Your Questions, Answered

Even after you have internalized these analytical frameworks, you will likely encounter specific challenges when applying these techniques to your daily technical work. Theory often clashes with the reality of tight deadlines and the pressure of system outages. We have gathered some of the most frequent questions from systems administrators, developers, and security analysts who are working to sharpen their technical judgment and move beyond basic troubleshooting.

How Can I Practice Critical Thinking Daily?

You do not need to block out large segments of your schedule. Improving your thought process works best when you integrate small habits into your existing technical workflow.

Choose one specific item each morning to analyze. This could be a technical blog post regarding a newly discovered vulnerability, a project update email from a manager, a pull request awaiting your review, or a request for a technical opinion in a team chat. Instead of just glancing at the code or the text, consider the broader implications of the information.

If you are evaluating a new cloud service or reading an article about a security patch, ask questions in the spirit of the SIFT method:

- Who is the author of this content? Are they a neutral researcher or a vendor representative with a specific sales goal?

- What is the primary technical argument being made? Does the author provide data to support their claims or rely on generalities?

- Are there any underlying assumptions or logical errors in the text, such as assuming that a solution for a small startup will work for an enterprise-level data center?

- Can you find another source, such as official documentation, an independent analyst report, or a reputable technical forum, that offers a different perspective or provides contradictory data?

Another effective habit is to take five minutes at the end of your shift to reflect on a specific technical choice you made during the day. Ask yourself: "What evidence supported my decision to use this specific automation script instead of a manual fix?" or "Did I choose this software library because it was truly the best fit for the project, or simply because I was already familiar with its syntax?" Consistency matters more than the length of each session. If you do this every day, the process of questioning your own assumptions becomes automatic rather than forced.

What Is the Biggest Mistake People Make?

The most frequent error is treating analytical skills as a theoretical subject for a certification exam rather than a practical tool for the server room or the development environment. Many professionals read about logic and bias but fail to use those concepts when a production environment goes down or when they are designing a new network topology. This creates a gap between what they know and how they actually perform under pressure.

Thinking is for action. If you do not use these methods to solve real-world problems, evaluate vendor claims, or challenge the status quo in your infrastructure, the knowledge remains stagnant.

Move from understanding a concept to performing it. When you are in a team meeting discussing a cloud migration, do not just accept the proposed timeline. Ask questions that reveal the underlying technical dependencies. Point out potential single points of failure in a proposed architecture. If you see a colleague making a decision based on a gut feeling rather than available log data, suggest a more evidence-based approach. Without this active application, your skills will not improve, and your technical growth will eventually stall.

How Do I Know If My Skills Are Improving?

Growth is measured by changes in your professional output and the reliability of the systems you manage. You will see the results in the quality of your troubleshooting and the strength of your technical designs.

Watch for these indicators:

- You start asking questions that address root causes rather than symptoms. In an incident post-mortem, you might ask why a specific monitoring alert failed to trigger rather than just asking how to restart the service that crashed.

- You identify logical gaps or marketing rhetoric in vendor presentations or internal proposals. You might notice when a salesperson uses an "appeal to authority" instead of providing raw performance metrics or latency figures.

- Your technical decisions are easier to justify to management because you have documented the evidence, considered the long-term risks, and analyzed at least two viable alternative solutions.

- You stop "guessing" which command might fix a problem and start following a logical path based on system telemetry, network logs, and error messages.

- You perform better on application-based questions in certification exams. For example, in the current CompTIA A+ (220-1201/220-1202) or the AWS Certified Cloud Practitioner (CLF-C02), you can better analyze which solution fits a specific business scenario rather than just identifying the name of a specific cloud service.

A practical way to track your progress is to maintain a decision journal. When you make a significant technical choice—like picking a container orchestration strategy, prioritizing features in a sprint, or setting a specific firewall policy—write down the date, the problem, the options you considered, and why you chose one over the others. List the assumptions you are making at that moment. Revisit these entries after a month to see if your reasoning was sound and if your assumptions held up. This provides concrete proof of your growth and helps you see patterns in your technical judgment over time.
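The decision journal described above can live in a notebook, but a small script makes the one-month review harder to forget. The following is a minimal sketch, not a prescribed format: the `DecisionEntry` class, its field names, the `entries_to_review` helper, the 30-day interval standing in for "a month", and the sample entries are all illustrative assumptions.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class DecisionEntry:
    """One journal entry; the field names are illustrative, not a standard."""
    made_on: date
    problem: str
    options: list[str]
    chosen: str
    rationale: str
    assumptions: list[str] = field(default_factory=list)
    review_notes: str = ""  # filled in when the entry is revisited

    def review_due(self, today: date, after_days: int = 30) -> bool:
        """True once the entry is old enough to revisit and has no review yet."""
        old_enough = today >= self.made_on + timedelta(days=after_days)
        return old_enough and not self.review_notes


def entries_to_review(journal: list[DecisionEntry], today: date) -> list[DecisionEntry]:
    """Return unreviewed entries that are at least 30 days old."""
    return [entry for entry in journal if entry.review_due(today)]


# Hypothetical sample entries, invented for this example.
journal = [
    DecisionEntry(
        made_on=date(2025, 1, 6),
        problem="Inbound rule set for the staging firewall",
        options=["Allow-list by IP range", "Allow-list by security group"],
        chosen="Allow-list by security group",
        rationale="Fewer manual updates when instances are replaced",
        assumptions=["Staging instances always join the expected group"],
    ),
    DecisionEntry(
        made_on=date(2025, 2, 20),
        problem="Container orchestration strategy for the new service",
        options=["Managed Kubernetes", "Single-host Docker Compose"],
        chosen="Managed Kubernetes",
        rationale="Team already operates one cluster",
        assumptions=["Traffic will outgrow a single host within a year"],
    ),
]

due = entries_to_review(journal, today=date(2025, 3, 1))
for entry in due:
    print(f"{entry.made_on}: {entry.problem}")
    for assumption in entry.assumptions:
        print(f"  did this hold? {assumption}")
```

Recording the assumptions as a separate list is the point of the design: at review time each one becomes a yes-or-no question, which makes it easier to see whether the reasoning was sound or the outcome was luck.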

At MindMesh Academy, we believe that structured practice distinguishes experts from beginners. Our study methods focus on helping you understand the underlying mechanics of technology so you can apply logic to complex problems and pass your certifications with confidence. If you want to advance your career with study materials built for practical success, visit our site and explore our IT Certification Practice Exams.

Written by

Alvin Varughese

Founder, MindMesh Academy

Alvin Varughese is the founder of MindMesh Academy and holds 18 professional certifications including AWS Solutions Architect Professional, Azure DevOps Engineer Expert, and ITIL 4. He's held senior engineering and architecture roles at Humana (Fortune 50) and GE Appliances. He built MindMesh Academy to share the study methods and first-principles approach that helped him pass each exam.