What Is a Cloud-Native Application? A Comprehensive Guide for IT Professionals

In today's fast-paced digital landscape, IT professionals are constantly challenged to build and manage applications that are resilient, scalable, and responsive to ever-changing business demands. This is where the concept of "cloud-native" application development becomes indispensable. At MindMesh Academy, we believe in equipping you with the foundational knowledge to not only understand these concepts but to apply them effectively in your career and ace your certification exams.

A cloud-native application is fundamentally different from traditional software. It’s a piece of software meticulously architected from day one to fully harness the elasticity, resilience, and vast scale of cloud computing environments. It’s not merely about running an existing application on a cloud server; it’s about designing it to intrinsically "speak the language" of the cloud.

Consider the analogy of building a boat. A traditional application moved to the cloud (a "lift-and-shift") is like taking a river barge designed for calm waters and putting it into the open ocean. It might float, but it wasn't built for the storms, currents, and vastness. A cloud-native application, on the other hand, is purpose-built in a shipyard for the open sea—it’s robust, adaptable, and engineered to thrive in unpredictable conditions, making full use of advanced navigation and stability systems. This deep integration with cloud characteristics is precisely what makes cloud-native so transformative.

What Really Makes an Application “Cloud Native”?

To truly grasp cloud-native, we need to look beyond the hype and understand it as a strategic paradigm for software development and operations. It's a mindset that prioritizes agility, efficiency, and robustness.

Traditional applications are often constructed as monolithic systems, resembling a tall, interconnected Jenga tower. If you need to modify a small piece at the base, the entire structure can become unstable, risking a collapse. Cloud-native architecture, by contrast, deconstructs this tower into individual, independent Lego blocks. Each block (or service) can be developed, swapped, scaled, or upgraded without impacting the others.

This architectural philosophy is a catalyst for speed and agility. It empowers separate development teams to work on their specific "Lego blocks" concurrently, accelerating update cycles and significantly reducing the inherent risks associated with large, infrequent releases. For IT professionals preparing for certifications like AWS Certified Solutions Architect or Azure Solutions Architect Expert, understanding this fundamental shift from monolith to microservices is crucial for designing modern, scalable systems.

The Guiding Principles for Cloud-Native Excellence

Building for the cloud demands embracing a distinct set of core principles that diverge significantly from conventional software development practices:

- Built for Change (Agility): Cloud-native applications are designed for constant, small, low-risk updates and iterative improvements, rather than large, high-stakes releases every few months. This agile approach facilitates continuous evolution and rapid response to market feedback.

- Automation is King (Efficiency): Manual processes are replaced with comprehensive automation. This includes everything from code compilation and automated testing to seamless deployment and infrastructure provisioning through Continuous Integration/Continuous Delivery (CI/CD) pipelines. This principle is central to DevOps culture, a key domain in certifications like AWS Certified DevOps Engineer - Professional or Microsoft Certified: DevOps Engineer Expert.

- Resilience by Design (Reliability): The system anticipates failures and is engineered to automatically recover and self-heal. It can also dynamically scale resources up or down in response to real-time user demand or fluctuating workloads, ensuring consistent performance and availability.
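To make "Resilience by Design" concrete, the following is a minimal Python sketch of one common self-recovery technique: retrying a failed call with capped exponential backoff and random jitter. The helper `retry_with_backoff`, its parameters, and the `flaky` demo function are hypothetical names chosen for illustration, not part of any specific library.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    operation: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.1,
    max_delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `operation`, retrying failures with capped exponential backoff.

    Before retry n (0-based) the helper sleeps a random time in
    [0, min(max_delay, base_delay * 2**n)], the "full jitter" strategy.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return operation()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            cap = min(max_delay, base_delay * (2 ** attempt))
            sleep(random.uniform(0, cap))
    raise AssertionError("unreachable: the loop always returns or raises")


# Usage: a hypothetical dependency that fails twice, then recovers.
calls = {"n": 0}


def flaky() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("transient failure")
    return "ok"


print(retry_with_backoff(flaky, sleep=lambda _: None))  # ok (third attempt succeeds)
```

Injecting `sleep` keeps the example fast and testable. Jitter matters in practice because it stops many clients from retrying against a recovering service in lockstep.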

Reflection Prompt: Think about a traditional application you've worked with. How would applying these three guiding principles — "Built for Change," "Automation is King," and "Resilience by Design" — transform its development, deployment, and operational lifecycle? Consider the challenges and benefits for your team and the end-users.

More Than Just "Lift-and-Shift"

Many organizations initiate their cloud adoption journey by simply migrating existing applications onto cloud virtual machines—a common strategy known as "lift-and-shift." While this offers some benefits (like reducing data center costs), it largely overlooks the transformative potential of cloud computing. These applications are "cloud-hosted" but not genuinely cloud-native.

To fully leverage the cloud's capabilities, a fundamental re-evaluation of the application's design is required. This often entails re-architecting it to align with modern cloud computing architecture patterns.

This shift isn't just a technological trend; it reflects a significant market evolution. According to an estimate from Consainsights, the global market for cloud-native applications reached about $22 billion in 2024, a sign of how heavily organizations now rely on this approach for competitive advantage.

The following table highlights the critical distinctions between these application approaches, a common topic in certification exams that test your ability to differentiate cloud strategies.

Cloud Native vs. Traditional Applications At A Glance

| Attribute | Cloud Native | Cloud-Ready (Lift-and-Shift) | Traditional Monolith |
|---|---|---|---|
| Architecture | Microservices, serverless, event-driven | Monolithic, N-tier (VM-based) | Monolithic, tightly coupled |
| Deployment | CI/CD pipelines, containers, immutable | Manual or semi-automated VM deployments | Infrequent, manual deployments |
| Scalability | Horizontal (auto-scaling), elastic | Manual or limited auto-scaling (VMs) | Vertical scaling, often manual |
| Resilience | Designed for failure, self-healing, fault-tolerant | Relies on infrastructure redundancy (VM HA) | Single point of failure, high downtime risk |
| Infrastructure | Immutable, Infrastructure as Code (IaC) | Mutable, manually configured VMs | Static, on-premises servers |
| Development | Agile, DevOps culture, small autonomous teams | Traditional or Agile | Waterfall, siloed teams |

As illustrated, the differences extend far beyond the hosting environment. Cloud-native signifies a holistic overhaul of how we conceptualize, build, deploy, and manage software for the modern digital era. For certification candidates, understanding these distinctions is paramount for selecting appropriate architectural patterns and deployment models.

Understanding the Pillars of Cloud-Native Architecture

To truly grasp the essence of a cloud-native application, it's essential to delve into its architectural DNA. This approach is built upon several core principles—or pillars—that collectively create systems capable of handling immense demands. These systems are inherently resilient, scale dynamically, and are designed for continuous adaptation and change.

Consider these pillars not as isolated components, but as the integrated foundational supports of a modern software skyscraper. Each pillar directly addresses and overcomes limitations inherent in traditional software development, replacing slow, rigid processes with dynamic, automated alternatives. Let's explore the five key pillars that define this architectural style.

Microservices: The Power of Specialization

The foundational pillar is the microservices architecture. Instead of constructing a single, massive, monolithic application where every feature is intricately intertwined, the system is broken down into a collection of small, independent services. Each service performs a specific function and excels at it.

Imagine a bustling restaurant kitchen. The traditional, monolithic approach is akin to having one overworked head chef responsible for every single dish on the menu—from appetizers to desserts. This single chef becomes a severe bottleneck; if they encounter a challenge with a complex sauce, the entire kitchen's output can halt.

A microservices approach mirrors a team of specialist chefs. One chef handles grilling, another is the master of sauces, and a third focuses exclusively on pastries. Each is an expert in their domain and operates autonomously. If the dessert station faces an issue, it doesn't disrupt the preparation and delivery of main courses. This clear separation of concerns significantly enhances efficiency and resilience, allowing teams to develop, deploy, and scale their specific services without impacting others. This modularity is a core concept in the AWS Certified Developer - Associate and Microsoft Certified: Azure Developer Associate certifications.
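To show what one specialist "station" might look like in code, here is a minimal sketch of a single-purpose service using only Python's standard library. The service, the `/healthz` and `/pastries` routes, and the in-memory menu are hypothetical; a production service would typically use a web framework and its own datastore.

```python
import json
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Hypothetical data owned exclusively by this service; other services ask for
# it over HTTP instead of reading the same database tables.
PASTRIES = {"croissant": 3.5, "eclair": 4.0}


class PastryHandler(BaseHTTPRequestHandler):
    """Serves one bounded responsibility: the dessert menu."""

    def _send_json(self, status: int, body: object) -> None:
        payload = json.dumps(body).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def do_GET(self) -> None:
        if self.path == "/healthz":
            # Health endpoint an orchestrator could probe.
            self._send_json(200, {"status": "ok"})
        elif self.path == "/pastries":
            self._send_json(200, PASTRIES)
        else:
            self._send_json(404, {"error": "not found"})

    def log_message(self, format: str, *args: object) -> None:
        pass  # silence per-request logging to keep the example quiet


def make_server(port: int = 8080) -> ThreadingHTTPServer:
    """Bind the service to localhost; port 0 asks the OS for a free port."""
    return ThreadingHTTPServer(("127.0.0.1", port), PastryHandler)


# To run locally: make_server().serve_forever()
```

Because the service owns its data and exposes it only through HTTP, its team could redeploy or scale it without touching the grill or sauce services.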

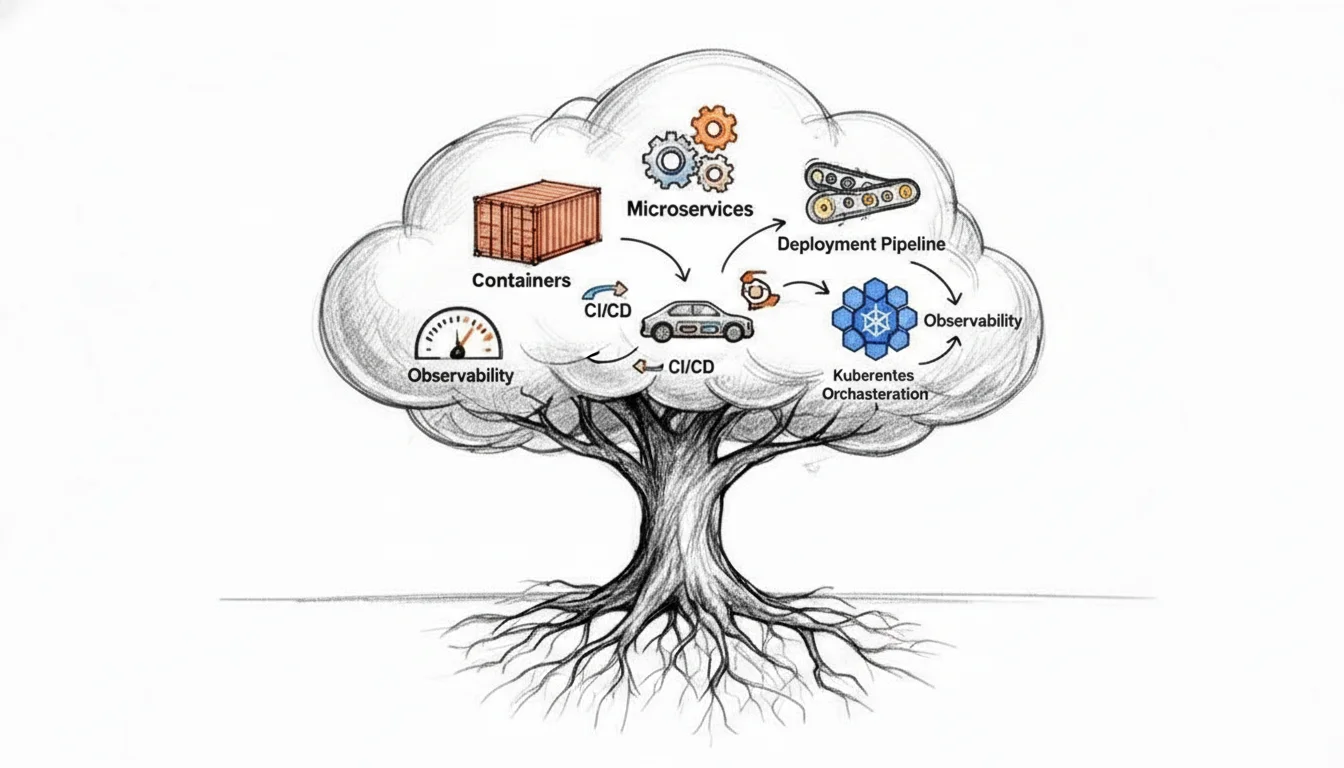

The diagram above visually represents this evolution, showcasing the clear progression from traditional to cloud-native application architectures.

As depicted, adopting a cloud-native approach isn't merely about migrating to the cloud; it's a fundamental structural transformation, moving from a rigid, centralized entity to a flexible, distributed ecosystem.

Containers: The Universal Shipping Box

With specialized microservices designed, the next challenge is to standardize their packaging and ensure consistent execution across diverse environments. This is where containerization, our second pillar, comes into play. Docker stands as the industry leader in this domain.

Visualize a container as a standardized shipping container for your software. It bundles an application's code with all its essential dependencies (libraries, system tools, and runtime environments) into a single, lightweight, self-contained package, so the application behaves consistently wherever it is deployed.

This resolves the long-standing developer dilemma of "it worked on my machine." By encapsulating every dependency, a container keeps the runtime environment consistent from a developer's local machine, through each testing stage, and into production servers. This consistency is invaluable for reliable deployments.

This inherent portability is a game-changer. It eradicates frustrating environment-specific bugs and streamlines the entire deployment process, making it faster, more predictable, and significantly more reliable—a vital concept for anyone taking the Certified Kubernetes Administrator (CKA) or any cloud platform certification.

CI/CD: The Automated Software Factory

Managing updates for dozens, or even hundreds, of microservices manually would be an insurmountable task. This brings us to our third pillar: Continuous Integration and Continuous Delivery (CI/CD). This represents the automated assembly line for your software development and delivery.

- Continuous Integration (CI): This practice mandates developers frequently merge their code changes into a central repository. Each merge automatically triggers a build process along with a comprehensive suite of automated tests. This proactive approach helps identify and rectify integration issues early and frequently.

- Continuous Delivery (CD): Picking up from CI, this practice automatically prepares successfully tested code for release. The ultimate goal is to enable rapid, safe, and sustainable deployment of new features and updates to customers.

This automated pipeline revolutionizes development velocity. Gone are the days of infrequent, high-risk releases. With CI/CD, teams can confidently push small, incremental updates multiple times a day, allowing them to respond to market demands in near real-time. This is a core competency for DevOps roles and certifications.
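The CI and CD steps described above can be sketched as a fail-fast pipeline runner. This is an illustrative Python model, assuming each stage is a function that returns `True` on success; real pipelines run in tools such as GitHub Actions, GitLab CI, or Jenkins, and the stage names here are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class StageResult:
    name: str
    passed: bool


def run_pipeline(stages: list[tuple[str, Callable[[], bool]]]) -> list[StageResult]:
    """Run stages in order and stop at the first failure (fail fast).

    In a real CI system, every merge to the main branch would trigger this
    sequence of build, test, and packaging jobs.
    """
    results: list[StageResult] = []
    for name, check in stages:
        passed = check()
        results.append(StageResult(name, passed))
        if not passed:
            break  # later stages (e.g. packaging for release) never run on a broken build
    return results


def is_releasable(results: list[StageResult], expected_stages: int) -> bool:
    """Continuous Delivery gate: only a fully green pipeline yields a release candidate."""
    return len(results) == expected_stages and all(r.passed for r in results)


stages = [
    ("compile", lambda: True),
    ("unit-tests", lambda: True),
    ("integration-tests", lambda: False),  # simulated regression
    ("package-artifact", lambda: True),
]
results = run_pipeline(stages)
print([r.name for r in results])  # ['compile', 'unit-tests', 'integration-tests']
print(is_releasable(results, len(stages)))  # False
```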

Immutable Infrastructure: The Disposable and Replaceable Server

Our fourth pillar introduces a radical paradigm shift in server management. With immutable infrastructure, a server is never modified after its initial deployment. If an update, patch, or configuration fix is required, you do not log into the live server to make changes. Instead, a brand new server is provisioned from a fresh, updated image, deployed to replace the old one, which is then decommissioned and destroyed.

This approach is analogous to replacing a faulty lightbulb rather than attempting to repair it while it's still active. It's inherently safer and more predictable. Immutable infrastructure completely eradicates "configuration drift"—those subtle, undocumented changes that accumulate over time and lead to mysterious bugs. It dramatically enhances system stability and reliability, especially when managed with Infrastructure as Code (IaC) tools like Terraform or CloudFormation. To understand how this fits into the broader cloud landscape, it’s beneficial to review the differences between IaaS, PaaS, and SaaS.
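A minimal Python sketch of the replace-rather-than-patch idea, assuming a hypothetical `provision` function that stands in for launching a server from a versioned image (in practice, an IaC tool such as Terraform or CloudFormation would do this). Making `Server` a frozen dataclass means any attempt to modify a running server in place fails, which mirrors the immutability rule.

```python
import itertools
from dataclasses import dataclass

_server_ids = itertools.count(1)  # hypothetical ID source for new servers


@dataclass(frozen=True)
class Server:
    server_id: int
    image: str  # versioned image the server was built from, e.g. "web:1.0"


def provision(image: str) -> Server:
    """Hypothetical stand-in for launching a brand-new server from an image."""
    return Server(server_id=next(_server_ids), image=image)


def roll_out(fleet: list[Server], new_image: str) -> tuple[list[Server], list[Server]]:
    """Replace every server with a fresh one built from `new_image`.

    Returns (new_fleet, retired). Nothing is patched in place: the old servers
    are handed back so the caller can decommission and destroy them.
    """
    new_fleet = [provision(new_image) for _ in fleet]
    return new_fleet, list(fleet)


fleet = [provision("web:1.0") for _ in range(3)]
fleet, retired = roll_out(fleet, "web:1.1")
print(sorted({s.image for s in fleet}))  # ['web:1.1']
print([s.server_id for s in retired])    # [1, 2, 3]
```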

Observability: The Advanced System Dashboard

Finally, in a complex, distributed system of interdependent microservices, gaining insight into its internal workings is paramount. This is where our fifth pillar, observability, becomes critical. Observability is the capability to infer the internal state of a system by examining its external outputs, allowing you to ask arbitrary questions about your system’s behavior without needing to pre-define every metric.

Traditional monitoring is often limited, similar to a car's "check engine" light—it signals a problem but provides minimal context. Observability, conversely, is like a modern car's comprehensive digital dashboard combined with a full diagnostic computer. It provides rich telemetry through three primary data types:

- Logs: Detailed, timestamped records of events and actions occurring within a service.

- Metrics: Time-series numerical data points that quantify system performance (e.g., CPU utilization, memory usage, request latency).

- Traces: A complete record of a single request's journey as it traverses multiple microservices, detailing each hop and its duration.

By collecting and analyzing this consolidated data, teams gain unprecedented insights into their system's actual behavior. This enables precise debugging, proactive identification of performance bottlenecks, and a holistic understanding of system health that was virtually impossible with traditional monolithic applications. This is a crucial area for any IT professional involved in operations, SRE, or monitoring.
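The three signal types can be illustrated with a small Python sketch in which one request produces structured logs, latency metrics, and trace spans that all share a trace ID. The in-memory `LOGS`, `METRICS`, and `SPANS` collections and the `frontend`/`payments` service names are assumptions for illustration; in production you would normally use an instrumentation library such as OpenTelemetry and send the data to a dedicated backend.

```python
import json
import time
import uuid
from contextlib import contextmanager
from typing import Iterator

# In-memory stand-ins for a log pipeline, a metrics store, and a trace backend.
LOGS: list[str] = []
METRICS: dict[str, list[float]] = {}
SPANS: list[dict] = []


def log(service: str, trace_id: str, message: str) -> None:
    """Emit a structured (JSON) log line tagged with the request's trace ID."""
    LOGS.append(json.dumps({"ts": time.time(), "service": service,
                            "trace_id": trace_id, "msg": message}))


@contextmanager
def span(service: str, operation: str, trace_id: str) -> Iterator[None]:
    """Time one hop of a request, recording it as a span and a latency metric."""
    start = time.perf_counter()
    try:
        yield
    finally:
        elapsed = time.perf_counter() - start
        SPANS.append({"trace_id": trace_id, "service": service,
                      "operation": operation, "duration_s": elapsed})
        METRICS.setdefault(f"{service}_latency_seconds", []).append(elapsed)


def handle_checkout() -> str:
    """Hypothetical request flowing from a frontend service to a payments service."""
    trace_id = uuid.uuid4().hex  # created at the edge and propagated to every hop
    with span("frontend", "POST /checkout", trace_id):
        log("frontend", trace_id, "checkout started")
        with span("payments", "charge_card", trace_id):
            log("payments", trace_id, "card charged")
    return trace_id


tid = handle_checkout()
# The inner span finishes first, so it is recorded first.
print([s["service"] for s in SPANS if s["trace_id"] == tid])  # ['payments', 'frontend']
```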

Exploring Essential Cloud-Native Tools

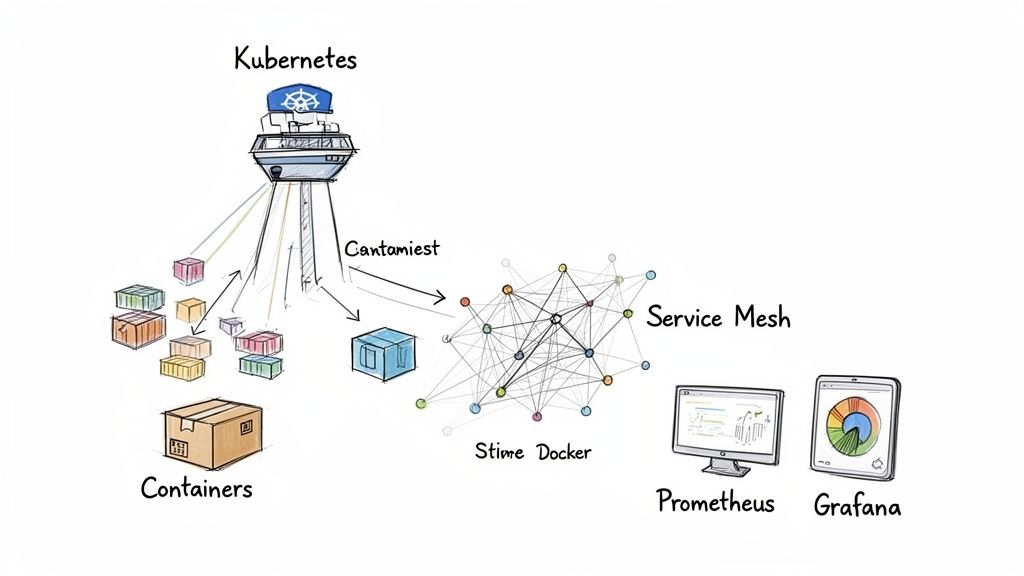

While understanding the theoretical underpinnings of cloud-native architecture is essential, practical implementation requires familiarity with the right toolset. The cloud-native ecosystem is vast and can initially appear daunting, but a core set of technologies forms the bedrock of most modern stacks. These tools are designed to interoperate seamlessly, much like a well-coordinated pit crew maintaining a high-performance race car.

Let's extend that race car analogy. To build one, you need the engine (your application logic), a chassis (the deployment environment), a sophisticated communication system (for internal components), and a detailed dashboard (for monitoring). The tools discussed below provide these precise functionalities, transforming abstract architectural principles into tangible, operational software.

Docker for Containerization

The journey often begins with Docker, the technology that democratized containerization. Think of Docker as the ultimate standardized packaging system for your microservices. It meticulously bundles your application's code along with every necessary dependency—libraries, system tools, and runtime environments—into a self-contained, portable unit called a container.

This elegantly simple concept effectively resolves the age-old developer conundrum: "It worked on my machine." By creating a consistent, isolated package, Docker ensures that an application runs the same way across environments, from a developer's laptop to a large-scale production cluster. This consistency is the foundational element for reliable CI/CD pipelines and plays a significant role in eliminating environment-specific bugs, a key concept for any cloud certification.

Kubernetes for Orchestration

Once your applications are packaged into Docker containers, the challenge shifts to managing potentially hundreds or thousands of these containers at scale. This is where Kubernetes (K8s) enters the picture as the undisputed leader in container orchestration. If Docker provides the shipping box for your app, Kubernetes is the advanced robotic warehouse and logistics system that manages all those boxes with incredible precision and scale.

Kubernetes functions as an intelligent air traffic controller for your containers, autonomously handling complex operational tasks:

- Scheduling: It determines the optimal server (node) within your cluster to run each Pod (a group of one or more containers) based on resource availability and constraints.

- Self-healing: If a container crashes, Kubernetes automatically restarts it. Should an entire server fail, controllers such as Deployments create replacement Pods on healthy nodes, typically without human intervention.

- Scaling: It can dynamically adjust the number of running containers (horizontally scale) based on real-time traffic or predefined metrics, ensuring optimal performance and resource utilization.

- Service Discovery: It provides a robust mechanism for containers to locate and communicate with each other, even as they are dynamically created, moved, or destroyed within the cluster.
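The Scaling behavior above can be illustrated with the core calculation documented for the Kubernetes Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric), with no change when the ratio is within a tolerance of 1.0 (0.1 by default). The Python function below is a simplified sketch of that formula, not the controller's actual code; the bounds mirror the `minReplicas`/`maxReplicas` fields of an HPA object.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Replica count from the HPA's core formula (simplified sketch).

    The real controller also accounts for Pod readiness, missing metrics,
    and stabilization windows, none of which are modeled here.
    """
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to target: avoid flapping
    else:
        desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


print(desired_replicas(4, current_metric=90, target_metric=50))  # 8: scale out
print(desired_replicas(4, current_metric=52, target_metric=50))  # 4: within tolerance
print(desired_replicas(4, current_metric=10, target_metric=50))  # 1: scale in to the floor
```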

The modern cloud-native landscape gained real momentum after Docker's release in 2013 and Google's open-sourcing of Kubernetes in 2014. In 2015, Kubernetes became the seed project of the newly founded Cloud Native Computing Foundation (CNCF), which now hosts well over 100 open-source projects. Mastering this stack is imperative for IT professionals working in hybrid or multi-cloud environments, and it is heavily tested in certifications like the Certified Kubernetes Administrator (CKA) or Certified Kubernetes Application Developer (CKAD). You can gain further insights into the industry's direction by exploring the latest market research on cloud-native trends.

The CNCF landscape provides a comprehensive overview of the vast array of available tools, categorized by their function—ranging from databases and messaging to security and observability.

Service Mesh for Microservice Communication

In a microservices architecture, rather than a single large application, you manage dozens or even hundreds of smaller, interconnected services communicating with each other. Configuring and managing this web of inter-service communication by hand is complex and error-prone. This challenge is precisely what a service mesh, such as Istio or Linkerd, is designed to address.

A service mesh is a dedicated, configurable infrastructure layer woven directly into your application environment. It provides a robust framework to control how all your microservices interact, externalizing complex networking concerns from your application code.

Think of a service mesh as the sophisticated central nervous system for your distributed application. It transparently handles all the intricate routing, security policies, and observability between services, liberating developers to concentrate solely on building business-critical features.

It injects critical capabilities like automated traffic encryption (mTLS), intelligent request routing for A/B testing or canary deployments, and resilience patterns such as circuit breakers—all without requiring any modifications to your application's code. This concept is increasingly relevant in advanced cloud architecture and DevOps certifications.
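A service mesh applies resilience patterns such as circuit breaking in its proxies, outside your application. To show the underlying logic, here is a minimal in-process Python sketch of a circuit breaker; the class, its thresholds, and the injectable clock are hypothetical and do not correspond to Istio or Linkerd configuration.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    """Minimal closed/open/half-open circuit breaker (single-threaded sketch)."""

    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0                      # consecutive failures seen so far
        self.opened_at: Optional[float] = None

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout:
            return "half-open"                 # let a trial request through
        return "open"

    def call(self, operation: Callable[[], T]) -> T:
        if self.state == "open":
            raise CircuitOpenError("upstream marked unhealthy; failing fast")
        try:
            result = operation()
        except Exception:
            self.failures += 1
            if self.state == "half-open" or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # trip, or re-trip after a failed trial
            raise
        self.failures = 0                      # success closes the circuit
        self.opened_at = None
        return result


# Usage with a fake clock so the timeout can be simulated instantly.
now = [0.0]
breaker = CircuitBreaker(failure_threshold=2, reset_timeout=10.0, clock=lambda: now[0])


def inventory_down() -> str:
    raise ConnectionError("inventory service unavailable")


for _ in range(2):
    try:
        breaker.call(inventory_down)
    except ConnectionError:
        pass
print(breaker.state)                     # open
now[0] = 11.0
print(breaker.state)                     # half-open
print(breaker.call(lambda: "in stock"))  # in stock
print(breaker.state)                     # closed
```

After `failure_threshold` consecutive failures the breaker opens and fails fast, giving the struggling service room to recover; once `reset_timeout` elapses, the next call acts as a trial that either closes the circuit or re-opens it.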

Prometheus and Grafana for Observability

With numerous interconnected components, how do you gain actionable insight into the operational state of your system? This is where observability shines, and the industry-standard open-source duo for this purpose is Prometheus and Grafana.

- Prometheus is a powerful monitoring system and time-series database. It actively "scrapes" metrics—key numerical data points—from your applications and underlying infrastructure at predefined intervals, constructing a detailed historical record of your system's health and performance.

- Grafana serves as the visualization and dashboarding layer. It connects to Prometheus (and a multitude of other data sources) enabling you to construct compelling, interactive dashboards replete with graphs, charts, and alert configurations. It transforms raw metric data from Prometheus into actionable insights.

Together, these tools function as the flight recorder and instrument panel for your cloud-native application. They empower teams to diagnose issues with surgical precision, identify performance trends, and maintain the smooth operation of complex, distributed systems. Understanding these tools is essential for any IT professional pursuing an SRE or DevOps certification.

Key Cloud-Native Technologies and Their Roles

To consolidate this information, here’s a quick-reference table summarizing the core tools and their functions within a typical cloud-native architecture. This table is an excellent study aid for understanding the ecosystem components.

| Tool/Platform | Category | Primary Function |
|---|---|---|
| Docker | Containerization | Packages an application and its dependencies into a portable container. |
| Kubernetes | Orchestration | Manages the lifecycle of containers at scale (scheduling, scaling, healing). |
| Istio | Service Mesh | Controls and secures communication between microservices; adds advanced traffic management. |
| Prometheus | Monitoring & Alerting | Collects and stores time-series metrics from applications and infrastructure. |
| Grafana | Visualization | Creates interactive dashboards and graphs to visualize metrics data. |
| Envoy | Service Proxy | A high-performance proxy that powers many service mesh implementations. |

While not exhaustive, this table covers the absolute essentials. Mastering how these components integrate and function together is the cornerstone of building and operating truly robust cloud-native systems, a skill highly valued in the IT industry.

Learning From Real-World Use Cases

Theoretical understanding is foundational, but to truly internalize the power of cloud-native, examining its real-world application is paramount. The true ingenuity of these architectural principles becomes apparent when they are deployed to solve complex business challenges for organizations we interact with daily. Let's explore a proven migration pattern and how some leading tech companies apply this approach.

Analyzing these examples in action makes abstract concepts like high availability, fault tolerance, and dynamic scalability concrete. They bridge the gap between architectural theory and practical outcomes, providing the kind of applied knowledge crucial for success in certification exams and real-world projects.

Safely Modernizing With the Strangler Fig Pattern

One of the most formidable challenges for established enterprises is migrating off entrenched, monolithic legacy systems without causing significant business disruption. The Strangler Fig Pattern offers an ingenious, low-risk strategy for precisely this scenario.

Envision your legacy application as an old, robust tree. A strangler fig vine begins as a small plant, gradually growing its roots around the host tree's trunk. Over time, the vine strengthens and becomes self-sufficient. Eventually, the original tree inside may wither away, leaving a new, healthy fig tree in its place.

This metaphor perfectly illustrates the software pattern. Instead of a risky, "big bang" rewrite, you incrementally build new cloud-native microservices around the periphery of the monolith. Piece by piece, you redirect user traffic and delegate functionality from the old system to these new services. This allows for controlled, iterative modernization. Many companies undertaking legacy system modernization projects adopt this method to mitigate risk while achieving steady progress, a common strategy discussed in enterprise architecture and cloud migration certifications.
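At its core, the Strangler Fig Pattern relies on a routing layer placed in front of the monolith. Here is a minimal Python sketch, assuming hypothetical handler functions in place of real HTTP services: requests whose path matches a migrated prefix go to the new service, and everything else still reaches the legacy system.

```python
from typing import Callable

Handler = Callable[[str], str]


def legacy_monolith(path: str) -> str:
    return f"monolith handled {path}"


def orders_service(path: str) -> str:
    return f"orders-service handled {path}"


class StranglerFacade:
    """Routes requests by path prefix: migrated prefixes go to new services,
    and everything else still falls through to the legacy monolith."""

    def __init__(self, legacy: Handler) -> None:
        self.legacy = legacy
        self.routes: dict[str, Handler] = {}

    def migrate(self, prefix: str, service: Handler) -> None:
        """Redirect one slice of functionality to a new service."""
        self.routes[prefix] = service

    def rollback(self, prefix: str) -> None:
        """Send a slice back to the monolith if the new service misbehaves."""
        self.routes.pop(prefix, None)

    def handle(self, path: str) -> str:
        # Longest matching prefix wins, so finer-grained slices can be carved out later.
        for prefix in sorted(self.routes, key=len, reverse=True):
            if path.startswith(prefix):
                return self.routes[prefix](path)
        return self.legacy(path)


facade = StranglerFacade(legacy_monolith)
print(facade.handle("/orders/42"))   # monolith handled /orders/42
facade.migrate("/orders", orders_service)
print(facade.handle("/orders/42"))   # orders-service handled /orders/42
print(facade.handle("/invoices/7"))  # monolith handled /invoices/7
```

In a real migration, this facade is usually an API gateway or reverse proxy, and each cutover or rollback is a routing configuration change rather than a code change in the monolith.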

Key Takeaway for Certification: The Strangler Fig Pattern is a powerful, low-risk architectural pattern for incremental migration. It enables organizations to deliver value quickly with new, modern services while safely and methodically phasing out the legacy monolith in the background. Be ready to identify scenarios where this pattern would be most appropriate.

Netflix: Mastering Scale and Resilience

When considering applications operating at an almost unfathomable scale, Netflix invariably comes to mind. The streaming giant serves as a prime exemplar of a cloud-native architecture meticulously engineered for flawless performance under extreme pressure, simultaneously catering to millions of users worldwide.

Netflix runs its platform as a microservices architecture hosted on AWS, while the video files themselves are delivered through its own Open Connect content delivery network. Every distinct function, from user authentication and personalized recommendations to video playback, is an independent service. This clear separation is pivotal: it ensures that an issue with the recommendation engine will not impede a user's ability to stream a movie.

To guarantee this level of resilience, Netflix famously developed the Simian Army, a suite of tools designed to intentionally inject failures into their live production environment. Its best-known member, Chaos Monkey, randomly terminates production instances to test the system's ability to recover automatically without users noticing any disruption. This philosophy of proactively embracing and testing for failure is a cornerstone of cloud-native thinking, directly relating to the reliability pillar of the AWS Well-Architected Framework.
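The idea behind Chaos Monkey can be illustrated with a tiny Python experiment: terminate one randomly chosen replica and check that the service still responds. This is a hypothetical model of the practice, not Netflix's tool or API; `ServiceGroup` and `chaos_experiment` are illustrative names, and the seeded random generator simply makes runs reproducible.

```python
import random
from dataclasses import dataclass, field


@dataclass
class ServiceGroup:
    """Interchangeable replicas of one service behind a load balancer (simplified)."""
    name: str
    healthy: set[str] = field(default_factory=set)

    def serve(self) -> str:
        if not self.healthy:
            raise RuntimeError(f"{self.name}: no healthy replicas left")
        return min(self.healthy)  # stand-in for real load balancing


def chaos_experiment(group: ServiceGroup, rng: random.Random) -> str:
    """Terminate one random replica, then check the service still answers.

    Raises RuntimeError if the failure would have been visible to users.
    """
    victim = rng.choice(sorted(group.healthy))
    group.healthy.discard(victim)
    group.serve()
    return victim


streaming = ServiceGroup("streaming", {"i-a", "i-b", "i-c"})
killed = chaos_experiment(streaming, random.Random(7))  # seeded for reproducibility
print(len(streaming.healthy))  # 2: the remaining replicas keep serving traffic
```

A group with only one replica fails the experiment, which is exactly the kind of weakness chaos testing is meant to surface before real users encounter it.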

Spotify: Accelerating Feature Development

Spotify presents another compelling real-world example. With a colossal user base and a relentless demand for new features, they required a methodology that would allow hundreds of developers to rapidly ship updates without impeding each other.

Their solution involved organizing into autonomous, cross-functional teams known as "Squads." Each Squad assumes full ownership of a specific segment of the application, from conception to operation. By building their platform on a microservices architecture, each Squad can develop, test, and deploy its services on an independent schedule, fostering true agility.

This organizational and technical model delivers substantial benefits:

- Speed: A new feature, such as the "Discover Weekly" playlist, can be conceived, developed, and rolled out by a single, focused team with minimal dependencies.

- Autonomy: Squads are empowered to select the most appropriate tools and technologies for their specific tasks, avoiding bottlenecks from centralized approval processes.

- Scalability: If the music search function experiences a sudden surge in traffic, only that particular service needs to be scaled up, rather than the entire application, optimizing resource allocation.

These case studies from Netflix and Spotify demonstrate that embracing cloud-native principles is not merely a technical decision but a powerful business strategy. According to market analysis from Spherical Insights, large enterprises captured the majority of the market share in 2024, microservices enable 10x faster deployments, and dynamic resource allocation can reduce costs by an estimated 30-50%. For further data on these enterprise trends and their market impact, consider reviewing the full Spherical Insights analysis.

Reflection Prompt: Consider a project you've been involved with. How could adopting the microservices approach, like Netflix or Spotify, have improved development speed, team autonomy, or system resilience? What challenges might you have encountered in such a transition?

Weighing the Benefits and Challenges

Embarking on a journey towards cloud-native architecture represents a significant strategic undertaking, far beyond a simple technological upgrade. While it unlocks an impressive array of capabilities, it also introduces a new layer of complexities. Achieving the right balance is crucial, especially when tackling nuanced certification questions that test your comprehension of these real-world trade-offs.

The most compelling driver for adopting cloud-native is the unparalleled gain in speed and agility. It enables organizations to build, test, and deploy software at a velocity that is simply unachievable with traditional monolithic applications. This isn't merely an incremental improvement; it's a fundamental paradigm shift in how quickly ideas can be translated into tangible user experiences, providing a formidable competitive advantage.

For anyone studying for a cloud or DevOps certification, internalizing these benefits is critical. Cloud-native practices can cut deployment times from weeks to minutes, and some industry benchmarks report developer productivity gains as high as 200%. Market projections vary widely by source and by how the market is scoped; the figures cited here project the cloud-native market to reach $17 billion by 2028, with US projections nearing $45.3 billion in 2026. A detailed breakdown of these projections is available on Grand View Research. Either way, a solid grasp of these concepts is essential for career advancement.

The Clear Advantages of Going Cloud-Native

Beyond raw speed, the advantages extend to building systems that are inherently more resilient, cost-effective, and efficient.

- Enhanced Scalability: Cloud-native applications are designed to automatically scale their resources up or down in response to real-time traffic fluctuations. This elasticity ensures you're not overpaying for idle server capacity but can seamlessly handle massive traffic spikes without performance degradation. This is a common design pattern tested in all major cloud provider certifications (AWS, Azure, GCP).

- Improved Resilience: Cloud-native systems are architected with failure in mind. Should a single microservice or container encounter an issue, the system intelligently isolates the problem and self-heals, often without any end-user awareness. This contrasts sharply with fragile monolithic applications where a single bug can lead to widespread system outages.

- Faster Deployment Cycles: Automated CI/CD pipelines facilitate frequent, small, and low-risk updates to production—often multiple times a day. This creates a rapid feedback loop, accelerating innovation and enabling quicker bug fixes.

- Reduced Vendor Lock-In: By building upon open-source standards like containers (Docker) and orchestration platforms (Kubernetes), you gain significant flexibility. This makes it considerably easier to migrate applications between different cloud providers or even back to on-premises environments, offering strategic independence.

A practical method for demonstrating these benefits involves tracking performance metrics. Many teams utilize industry-standard benchmarks such as DORA metrics to quantitatively measure improvements across deployment frequency, lead time for changes, change failure rate, and mean time to recovery.
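As a rough illustration of how the four DORA metrics can be derived from deployment records, here is a simplified Python sketch. The `Deployment` record and its fields are hypothetical, the sample data is made up, and time to restore is approximated as the time from the faulty deployment to recovery; real tooling usually pulls these timestamps from version control, the CI/CD system, and incident trackers.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_metrics(deploys: list[Deployment], period_days: int) -> dict[str, float]:
    """Compute simplified versions of the four DORA measures over a period."""
    if not deploys:
        raise ValueError("need at least one deployment")
    hour = timedelta(hours=1)
    lead_times = [(d.deployed_at - d.committed_at) / hour for d in deploys]
    failures = [d for d in deploys if d.failed]
    # Approximation: restore time measured from the faulty deployment.
    restore_times = [(d.restored_at - d.deployed_at) / hour
                     for d in failures if d.restored_at is not None]
    return {
        "deployment_frequency_per_day": len(deploys) / period_days,
        "median_lead_time_hours": median(lead_times),
        "change_failure_rate": len(failures) / len(deploys),
        "mean_time_to_restore_hours": (sum(restore_times) / len(restore_times)
                                       if restore_times else 0.0),
    }


# Hypothetical sample: four deployments over two days, one of which failed.
t0 = datetime(2024, 1, 1, 9, 0)
deploys = [
    Deployment(t0, t0 + timedelta(hours=2)),
    Deployment(t0, t0 + timedelta(hours=4), failed=True,
               restored_at=t0 + timedelta(hours=5)),
    Deployment(t0, t0 + timedelta(hours=6)),
    Deployment(t0, t0 + timedelta(hours=8)),
]
m = dora_metrics(deploys, period_days=2)
print(m["deployment_frequency_per_day"])  # 2.0
print(m["change_failure_rate"])           # 0.25
```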

Navigating the Inherent Challenges

Naturally, this transformative journey is not without its hurdles. Adopting a cloud-native approach comes with a distinct set of challenges that demand meticulous planning and, critically, a significant shift in organizational culture.

Critical Insight for IT Leaders: Adopting cloud-native is as much about transforming your team's mindset and fostering a new culture as it is about implementing new technology. It necessitates a culture of deep collaboration, shared ownership, continuous learning, and embracing experimentation, which is frequently the most difficult aspect of the transition.

Here are the primary obstacles you're likely to encounter, often explored in strategic and architectural certification exams:

- Increased Architectural Complexity: Managing hundreds of distributed microservices is inherently more complex than overseeing a single monolith. This demands new tools and specialized skills to handle aspects like service discovery, inter-service networking, distributed tracing, and holistic system monitoring.

- The Need for a DevOps Culture: Cloud-native principles and a robust DevOps culture are inextricably linked. The approach is ineffective if development and operations teams remain in traditional silos. Seamless collaboration, shared responsibility, and automated processes are essential for sustaining agility.

- New Skill Sets Required: Your team will need to acquire proficiency with an entirely new suite of tools and concepts. This includes expertise in containerization (Docker), container orchestration (Kubernetes), Infrastructure as Code (IaC), and modern observability platforms. This necessitates a substantial investment in training, upskilling existing staff, or strategic hiring.

- Heightened Security Concerns: A distributed system presents a significantly larger attack surface than a monolithic application. Securing the communication between numerous services, managing sensitive secrets, and hardening containers requires a modern, proactive, and often automated approach to security, a topic central to security certifications like the (ISC)² CCSP or AWS Certified Security - Specialty.

Getting Ready for Your Certification Exam

Understanding the theory is one thing, but approaching a certification exam with genuine confidence is another. To succeed, you need a structured plan that zeroes in on the topics most frequently tested. This involves connecting abstract concepts, engaging in hands-on practice, and following a clear study trajectory.

Consider this section your practical roadmap. We'll streamline your preparation and guide you toward mastering cloud-native concepts for your certification.

First and foremost, you must thoroughly grasp the core principles. Exam questions rarely ask for simple definitions; instead, they challenge you to link concepts like microservices and containers to tangible business outcomes such as improved scalability or resilience. Industry data reinforces this focus: according to a market report from Grand View Research, 75% of new applications are now cloud-native, up from less than 20% five years earlier. This shift, driven by demand for capabilities like auto-scaling and fault tolerance, is why these topics are central to certification exams. You can explore the detailed data in the full report.

Exam-Relevant Key Takeaways

To build a strong foundation for tackling complex scenario-based questions, ingrain these concepts. They appear consistently across cloud and DevOps certifications.

- Microservices vs. Monoliths: Be able to articulate the fundamental trade-offs. Microservices offer team autonomy and faster deployments but introduce distributed system complexity. Monoliths are simpler initially but become challenging to update and scale.

- Containers and Orchestration: Clearly differentiate their roles: Docker packages the application and its dependencies into a container image, while Kubernetes schedules, runs, and manages those containers at scale. Expect questions on Kubernetes objects such as Pods, Services, Deployments, and ReplicaSets.

- CI/CD Automation: Recognize CI/CD as the engine driving cloud-native development. Understand its direct impact on accelerating release cycles, improving code quality, and reducing manual errors.

- Immutable Infrastructure: Fully internalize the "replace, don't repair" philosophy. Understand how this approach contributes to predictable, stable, and secure environments.

- Observability Pillars: Commit the "big three" to memory: logs, metrics, and traces. Be prepared to explain what each data type reveals about the health and behavior of a complex, distributed system and how they enable effective troubleshooting.
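Orchestration and immutable infrastructure meet in the idea of a control loop: the orchestrator compares desired state with actual state and converges them by discarding and replacing instances rather than patching them. The Python sketch below is a deliberately simplified model of that idea, not the Kubernetes API; the Instance class and reconcile function are hypothetical, and a real Deployment performs rolling updates through ReplicaSets.

```python
import itertools
from dataclasses import dataclass

_ids = itertools.count(1)  # hypothetical ID generator for new instances


@dataclass(frozen=True)  # frozen: an instance is never modified in place
class Instance:
    id: int
    image: str           # e.g. a versioned container image tag
    healthy: bool = True


def reconcile(desired_image: str, desired_count: int, running: list[Instance]) -> list[Instance]:
    """One pass of a simplified control loop converging actual state toward desired state."""
    # Keep only instances that are healthy AND run the desired image.
    # Everything else is dropped and replaced, never repaired.
    keep = [i for i in running if i.healthy and i.image == desired_image]
    # Scale down if there are too many.
    keep = keep[:desired_count]
    # Scale up or replace with fresh instances built from the desired image.
    while len(keep) < desired_count:
        keep.append(Instance(id=next(_ids), image=desired_image))
    return keep
```

Given one healthy instance on the desired image, one unhealthy instance, and one on an old image, a single call with desired_count=3 keeps the healthy instance and creates two fresh replacements. Running the loop repeatedly is what lets the platform self-heal.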

Sample Exam-Style Questions

Let's test your understanding with practice questions designed to mimic those found on actual certification exams. Focus not only on the correct answer but also on the reasoning behind it—this is where deep learning occurs.

Question 1: A development team is experiencing prolonged, arduous release cycles, and each deployment of their monolithic e-commerce application is perceived as a high-stakes risk. Which cloud-native principle, when implemented, would most directly alleviate this situation?

- A) Immutable Infrastructure

- B) Service Mesh Implementation

- C) CI/CD Automation

- D) Observability

Correct Answer: C. Continuous Integration and Continuous Delivery (CI/CD) automates the software delivery pipeline: building, testing, and deployment. This automation lets teams ship small, frequent, low-risk releases, directly addressing long release cycles and high-stakes deployments. Immutable Infrastructure (A) improves environment consistency and Service Mesh (B) manages service-to-service communication, but neither directly shortens release cycles, while Observability (D) helps you understand the system after deployment.
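The core mechanic behind that answer can be sketched in a few lines: a pipeline runs its stages in a fixed order and halts at the first failure, so a broken change never reaches the deploy step. The run_pipeline function, the stage names, and the lambda stand-ins below are hypothetical placeholders for real build, test, and deploy jobs in a CI/CD tool.

```python
from typing import Callable

# A stage is a (name, action) pair; the action returns True on success.
Stage = tuple[str, Callable[[], bool]]


def run_pipeline(stages: list[Stage]) -> tuple[bool, list[str]]:
    """Run stages in order and stop at the first failure."""
    completed: list[str] = []
    for name, action in stages:
        if not action():
            print(f"stage '{name}' failed; halting pipeline")
            return False, completed
        completed.append(name)
    return True, completed


if __name__ == "__main__":
    ok, done = run_pipeline([
        ("build", lambda: True),
        ("test", lambda: False),   # simulate a failing test suite
        ("deploy", lambda: True),  # never runs because "test" failed
    ])
    print(ok, done)
```

Running it reports that the test stage failed and lists only build as completed. Because every change passes through the same automated gate, releases can be small and frequent without being risky.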

Question 2: Your organization is developing a new application with the strategic goal of ensuring it can run consistently on any cloud provider, thereby mitigating vendor lock-in. Which technology is absolutely critical for achieving this level of portability?

- A) Serverless Functions

- B) Containerization with Docker

- C) A specific CI/CD tool like Jenkins

- D) A relational database service

Correct Answer: B. Containerization, exemplified by Docker, is the cornerstone of application portability. It bundles an application with all its dependencies into a single, isolated, portable unit, so the application behaves consistently across environments, from a developer's laptop to any cloud platform that can run containers. That makes it the best answer for avoiding vendor lock-in. Serverless Functions (A) are typically tied to a specific provider's platform, a CI/CD tool (C) doesn't make the application itself portable, and a relational database service (D) is a single component, not the core portability technology.

Recommended Study Path and Resources

A well-structured study plan is essential to prevent feeling overwhelmed. Begin with foundational concepts, then progress to practical, hands-on application.

- Master the Fundamentals: Don't dive straight into specific tools. Before you get lost in Kubernetes YAML configurations, make sure you have a solid grasp of core cloud-native concepts, starting with a high-level understanding of how cloud computing works.

- Explore the CNCF Landscape: Familiarize yourself with the Cloud Native Computing Foundation (CNCF) and its extensive portfolio of projects. Prioritize understanding the most prominent ones initially: Kubernetes, Prometheus, and Envoy, as these form the backbone of the ecosystem.

- Choose a Certification Track: Select a specific certification to give your studies a clear objective. For operations-focused IT professionals, the Certified Kubernetes Administrator (CKA) is an excellent starting point. For developers, a certification such as AWS Certified Developer - Associate or Azure Developer Associate is highly beneficial.

- Get Hands-On: Theory alone is insufficient for passing these exams. Use online lab environments (e.g., Killer Shell for Kubernetes, A Cloud Guru labs) or set up a local development environment (e.g., Minikube, Docker Desktop) to gain practical experience. Actually deploying, managing, and troubleshooting containerized applications is the most reliable way to build the muscle memory required for performance-based exams and real-world scenarios.

Frequently Asked Questions

As you delve deeper into cloud-native concepts, certain common questions frequently arise. Let's address a few of the most important ones to clarify any lingering confusion and help you connect theory to practical application.

What’s the Difference Between Cloud-Native and Cloud-Hosted?

This is arguably the most frequent point of confusion, but a clear analogy can simplify it.

Imagine a cloud-hosted application as a newcomer who has moved to a new city (the cloud) but is still living out of a suitcase in a hotel. They haven't genuinely adapted: they're using the same belongings and routines, just in a different location. Likewise, the application was simply "lifted and shifted" onto cloud servers without any fundamental redesign or optimization for the cloud environment.

A cloud-native application, conversely, is like a local who meticulously designed and built their house from the ground up specifically for that city. The house is engineered to perfectly suit the local climate, culture, and lifestyle, leveraging all the unique advantages the environment offers. These applications are architected from day one to thrive in the cloud, exploiting its inherent elasticity, resilience, and distributed nature.

Is Serverless Computing Always Cloud-Native?

Not always, but serverless computing and cloud-native principles are inherently complementary. Consider cloud-native as the overarching philosophy—the "why" and "how" of constructing modern applications. Serverless computing, on the other hand, is a specific architectural style that aligns almost perfectly with this philosophy.

Most serverless applications (e.g., AWS Lambda, Azure Functions) are intrinsically cloud-native because they are designed to be event-driven, stateless, and automatically scalable, and they rely on the on-demand capabilities of a cloud platform. While you can certainly build a cloud-native application without a single serverless function, it's rare to encounter a serverless application that would not be classified as cloud-native.
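To show what "event-driven and stateless" looks like in practice, here is a minimal Python function written in the handler(event, context) shape that AWS Lambda uses for Python. The event format (a list of items with price and qty) and the statusCode/body response (the shape API Gateway proxy integrations expect) are assumptions for illustration only.

```python
import json


def handler(event, context):
    """Stateless, event-driven function in the AWS Lambda Python handler shape.

    All input arrives in `event`, and the function keeps no state between
    invocations, so the platform can run many copies in parallel.
    """
    # Hypothetical event shape: {"items": [{"price": 19.99, "qty": 2}, ...]}
    items = event.get("items", [])
    total = round(sum(i["price"] * i["qty"] for i in items), 2)
    # Response in the format an API Gateway proxy integration expects (assumption).
    return {"statusCode": 200, "body": json.dumps({"total": total})}
```

Because the function depends only on its input, scaling is the platform's job: each incoming event can be handled by whichever copy is available.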

Key Distinction: The core idea is that cloud-native represents the philosophy and architectural approach (how you build systems), while serverless is a specific implementation model that aligns exceptionally well with that philosophy, especially regarding operational efficiency and automatic scaling.

How Can Small Businesses Adopt These Principles?

Adopting cloud-native principles doesn't require a massive team or a Silicon Valley budget. The key is to think incrementally and focus on achieving tangible, quick wins.

- Containerize one service: Begin by selecting a single, manageable service within your application and learn how to package it using Docker. This provides a fantastic hands-on first step and builds essential skills.

- Automate a single pipeline: You don't need to automate every process simultaneously. Establish a basic CI/CD pipeline for just that one containerized application. This introduces your team to the power of automation.

- Leverage managed services: Avoid the complexity of building and maintaining your own Kubernetes cluster from scratch. Utilize the managed Kubernetes offerings from your chosen cloud provider (e.g., Google Kubernetes Engine (GKE), Amazon Elastic Kubernetes Service (EKS), or Azure Kubernetes Service (AKS)) to offload infrastructure management. This allows your team to focus on application development rather than operational overhead.

Ready to master these crucial concepts and excel in your certification exams? MindMesh Academy provides expertly curated study materials, practical insights, and proven learning techniques designed to ensure your success in the evolving landscape of IT. Prepare for your future and elevate your skills with our AZ-305 Azure Solutions Architect Practice Exams.

Written by

Alvin Varughese

Founder, MindMesh Academy

Alvin Varughese is the founder of MindMesh Academy and holds 15 professional certifications including AWS Solutions Architect Professional, Azure DevOps Engineer Expert, and ITIL 4. He's held senior engineering and architecture roles at Humana (Fortune 50) and GE Appliances. He built MindMesh Academy to share the study methods and first-principles approach that helped him pass each exam.