Build Your Data Warehouse on Azure: A Modern Guide

At its core, a data warehouse on Azure is your organization's single source of truth for all business intelligence and analytical needs, expertly built on Microsoft’s robust cloud platform. It's far more sophisticated than a conventional database; envision it as a highly specialized system meticulously engineered to ingest, cleanse, integrate, and organize information from every facet of your enterprise—sales, marketing, finance, operations, customer support, and beyond. The ultimate goal is to consolidate this disparate data into a coherent, reliable repository, perfectly optimized for reporting, deep historical analysis, and driving proactive business strategies.

Why Mastering Azure Data Warehousing Is a Career Superpower

Let's illustrate with an analogy that many IT professionals can relate to. Imagine your company's operational data is like an immense collection of vital documents, but they're scattered across thousands of disconnected, often chaotic, physical filing cabinets. Financial reports are in one office, customer interaction logs in another, and website analytics are buried in a dusty server room. Attempting to derive a comprehensive answer to a strategic business question—like "What impact did our Q3 marketing spend have on customer lifetime value in specific regions?"—would be a monumental, if not impossible, undertaking.

An Azure data warehouse is the digital equivalent of constructing a perfectly organized, highly efficient central library for all that crucial business knowledge. It doesn't merely store information; it systematically ingests every relevant piece, intelligently processes and categorizes it, and then makes it astonishingly simple to retrieve and analyze. This transformative process is precisely what converts raw, fragmented data into a powerful engine for advanced business intelligence and informed decision-making.

Transforming Data Silos into Actionable Clarity

A persistent challenge in many organizations is the prevalence of data silos. The marketing team operates on its campaign metrics, the sales team relies on its own performance figures, and these datasets rarely intersect or reconcile. This fragmentation invariably leads to inconsistent reporting, conflicting insights, and, most critically, significant missed opportunities for strategic advantage.

A data warehouse constructed on Azure fundamentally breaks down these barriers. By aggregating and unifying data from every conceivable source, it provides a holistic, 360-degree perspective of the business. Suddenly, IT professionals can enable stakeholders to connect previously isolated data points—revealing, for instance, the direct correlation between a specific marketing initiative and sales conversions in a particular market segment, or how improvements in customer service directly influence long-term customer retention.

By unifying disparate data sources, an Azure data warehouse empowers organizations to transition from reactive troubleshooting to proactive, data-driven strategy. It’s the critical difference between speculating about customer needs and precisely understanding them through empirical evidence.

This profound shift is a primary driver behind the explosive growth in the cloud data warehouse market. Valued at a substantial $24.8 billion in 2023, this market is projected to skyrocket to a staggering $106.2 billion by 2033. With over 85% of Fortune 500 companies already leveraging Azure, a deep understanding of its data services is no longer merely advantageous for IT professionals—it's an absolute prerequisite for career advancement. For those interested in the specifics, a detailed cloud data warehouse market analysis provides further context.

Key Strategic Advantages of an Azure Data Warehouse

Opting to build your data warehouse on Azure confers several critical advantages, beneficial not only for organizational success but also for your personal career trajectory as an IT professional.

Here's a concise overview of what makes the Azure platform particularly compelling for data warehousing:

| Benefit | What It Means For You (and Your Organization) |
|---|---|
| Scalability | You can architect solutions that effortlessly handle petabytes of data during peak operational hours and seamlessly scale back resources during quieter periods, optimizing costs. No complex hardware provisioning; it’s all on-demand elasticity. |
| Cost-Effectiveness | Eliminates the need for massive upfront capital expenditures on physical servers and infrastructure. Azure's pay-as-you-go model makes powerful, enterprise-grade analytics accessible and affordable for businesses of virtually any size. |
| Integrated Ecosystem | Azure offers a comprehensive, tightly integrated suite of services, from data ingestion and persistent storage to advanced analytics and AI/ML capabilities. These tools are designed for seamless interoperability, dramatically simplifying development and operational workflows. |

These aren't just theoretical benefits; they represent real-world operational power and financial flexibility that were historically out of reach for many organizations. For IT professionals pursuing certifications like the DP-700: Microsoft Fabric Data Engineer Associate, a robust understanding of these foundational benefits is absolutely essential. To delve deeper into the specific domains covered by this pivotal exam, be sure to consult our comprehensive Microsoft Fabric Data Engineer Associate study guide at MindMesh Academy. Mastering these core concepts is your pathway to delivering tangible value and becoming the highly sought-after data expert in today's market.

Reflection Prompt: Consider a recent project where data silos hindered progress. How might the scalability or integrated ecosystem of an Azure data warehouse have positively impacted that situation?

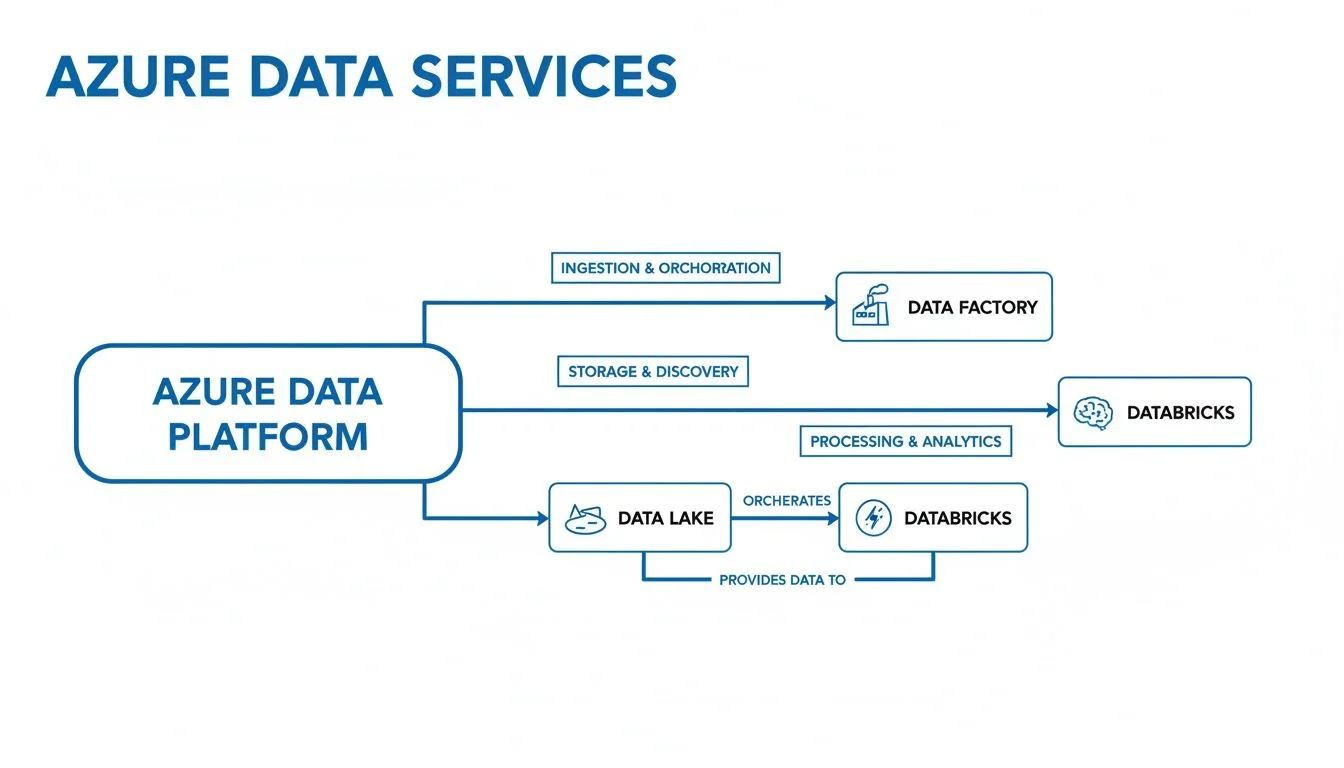

Understanding the Core Azure Data Services

When approaching the construction of a modern data warehouse on Azure, it's most effective to conceptualize it not as a monolithic product, but as a carefully selected team of highly specialized experts. Just as you wouldn't ask a network engineer to optimize a database, each Azure data service has a distinct, critical role it performs exceptionally well.

The true genius lies in understanding how to orchestrate these services to work in concert. It's not about selecting a single "best" tool, but about strategically combining the right specialists to forge a truly high-performance, resilient data solution. Let's get acquainted with the key players on your Azure data engineering team.

Azure Synapse Analytics: The Unified Command Center

Consider Azure Synapse Analytics as the central nervous system or "brain" of your data warehousing operation. It represents a paradigm shift, unifying the traditionally separate worlds of data warehousing and big data analytics into a single, cohesive environment.

On one hand, Synapse excels at running complex SQL queries against massive, structured datasets, serving as the high-performance engine for traditional business intelligence and reporting—the classic data warehouse workload. Simultaneously, it possesses the unique capability to wrangle, process, and query colossal volumes of unstructured data (e.g., logs, IoT data), a task that historically demanded entirely different toolsets like Spark clusters.

This integrated approach is revolutionary for data professionals. With the global cloud data warehouse market projected to surge from $36.31 billion in 2025 to an astounding $155.66 billion by 2034, Synapse's ability to seamlessly incorporate AI and machine learning workloads makes it an indispensable skill for any modern data engineer or architect. For those aiming for the DP-203 certification, understanding Synapse's unified nature and its various components (SQL pools, Spark pools) is paramount.

Azure Data Factory: The Automated Logistics Network

Given the powerful analytics capabilities of Synapse, the next logical question for an IT professional is: "How does all that data actually get there, reliably and efficiently?" This is where Azure Data Factory (ADF) steps in, serving as the sophisticated, automated logistics network responsible for the entire data movement and preparation process.

Raw data is inherently chaotic—it's scattered across countless operational databases, SaaS applications, log files, and external sources. ADF's mission is to orchestrate the complete data integration lifecycle, typically following ETL (Extract, Transform, Load) or ELT (Extract, Load, Transform) paradigms. It provides a visual, low-code interface designed for data engineers to:

- Extract: Connect to hundreds of diverse data sources (on-premises SQL Server, cloud databases, APIs, file shares) to pull in raw data.

- Transform: Cleanse, reshape, enrich, aggregate, and merge that data to align with precise business rules and analytical schemas. This might involve data type conversions, joining tables, or anonymizing sensitive information.

- Load: Deliver the newly transformed, analysis-ready data to its designated destination, whether that's a dedicated SQL pool in Synapse Analytics, a Data Lake for further processing, or another storage service.

In essence, ADF automates the heavy lifting of data pipelines, ensuring a scheduled, resilient, and auditable flow of information into your data warehouse ecosystem. For the DP-203 exam, deep knowledge of ADF's activities, pipelines, linked services, and integration runtimes is critical.
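The three stages above can be sketched in a few lines of plain Python. This is only an illustration of the extract, transform, load sequence, not ADF itself: the sample CSV, the `sales` table, and the function names are all hypothetical, and an in-memory SQLite database stands in for the destination store.

```python
import csv
import io
import sqlite3

# Hypothetical raw export from a source system (stand-in for a source dataset).
RAW_CSV = """order_id,region,amount
1, emea ,100.50
2,APAC,not_a_number
3,emea,49.50
"""


def extract(raw_text):
    """Extract: read raw rows exactly as the source provides them."""
    return list(csv.DictReader(io.StringIO(raw_text)))


def transform(rows):
    """Transform: normalize text, convert types, drop rows that fail parsing."""
    clean = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # a real pipeline would route this row to an error sink
        clean.append((int(row["order_id"]), row["region"].strip().upper(), amount))
    return clean


def load(rows, conn):
    """Load: write analysis-ready rows to the destination table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales (order_id INTEGER, region TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", rows)
    conn.commit()


def run_pipeline(raw_text, conn):
    """Run the three stages in order and return totals per region."""
    load(transform(extract(raw_text)), conn)
    return conn.execute(
        "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region"
    ).fetchall()
```

Calling `run_pipeline(RAW_CSV, sqlite3.connect(":memory:"))` loads the two valid rows and skips the malformed one. In ADF the same stages would be expressed as pipeline activities rather than Python functions.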

To better grasp how these core services, along with others we'll explore, fit into a cohesive architecture, it’s helpful to see their specialized roles side-by-side. Recognizing the optimal tool for each task is a fundamental skill for any Azure data professional.

Azure Data Services Role Comparison: The Specialist Team

| Azure Service | Primary Role (Analogy) | Best Used For (Certification Context) |
|---|---|---|
| Azure Synapse Analytics | The Unified Command Center | Enterprise-scale analytics, BI reporting, high-performance SQL queries, and big data processing (Spark) at massive scale. Critical for DP-203 scenarios. |
| Azure Data Factory | The Automated Logistics Network | Orchestrating data movement, transformation (ETL/ELT), and pipeline automation from diverse sources to targets. Core for data ingestion patterns on DP-203. |
| Azure Databricks | The R&D Workshop / Advanced Analytics Engine | Collaborative data science, large-scale Spark-based data processing, complex transformations, and machine learning model development. Key for advanced analytics and ML on DP-203. |
| Azure Data Lake Storage | The Central Reservoir / Raw Data Repository | Cost-effective storage for vast amounts of raw, semi-structured, and unstructured data of any type, forming the foundation of modern data platforms. Essential for DP-900 (Azure Data Fundamentals) and DP-203. |
| Azure SQL Database | The Specialist Transactional Database | Powering Online Transaction Processing (OLTP) applications. Often serves as an operational data source or a smaller, specialized relational data store within the broader ecosystem. |

This table offers a quick, high-level overview, but let's dive into some of the more specialized roles.

Azure Databricks: The High-Performance Analytics Workshop

There are times when standard business reporting isn't sufficient. You need to engage in serious data science, develop sophisticated predictive models, or execute complex, code-intensive data transformations that demand the full power of distributed computing. For these advanced scenarios, you turn to Azure Databricks.

Built on the powerhouse open-source engine Apache Spark, Azure Databricks provides a collaborative, optimized platform where data scientists and engineers can tackle the most demanding analytics challenges. It's the premier environment for large-scale data processing, advanced analytics, and machine learning development.

It's crucial to understand that Databricks and Synapse are not competing services; they are powerful partners. A very common architectural pattern involves using Databricks for complex data cleansing, feature engineering, and advanced analytical modeling on raw data. The highly refined and aggregated results are then loaded into Synapse Analytics' SQL pools for broad consumption by business analysts and reporting tools like Power BI. This collaborative model is a cornerstone of modern data engineering.

Azure Data Lake Storage: The Vast Data Reservoir

So, where does all this data—raw, semi-processed, fully refined—actually reside throughout its lifecycle? For that foundational storage, we have Azure Data Lake Storage (ADLS). The most apt way to visualize ADLS Gen2 is as a nearly infinite, highly scalable, and exceptionally cost-effective reservoir capable of holding virtually any kind of data in its original, native format.

Whether you're dealing with structured tabular data from a relational database, semi-structured JSON or XML from an API, or entirely unstructured data like images, audio files, or video streams, ADLS can store it all securely and economically.

This "store everything first" philosophy provides immense flexibility. You can land all your raw data directly into the data lake without the rigid upfront schema definitions characteristic of traditional databases. Then, powerful compute services like Azure Synapse or Azure Databricks can process and analyze this data later, extracting value as needed. For IT professionals studying for fundamental certifications like the DP-900: Microsoft Azure Data Fundamentals, a solid grasp of data lake concepts and their role in a modern data platform is absolutely foundational. Our Azure Data Fundamentals (DP-900) study guide covers these core storage concepts in extensive detail.

Reflection Prompt: Imagine you're designing a data platform. Which specific scenarios would lead you to prioritize Azure Databricks over Synapse, or vice-versa, for the initial data transformation phase?

Choosing Your Azure Data Architecture Blueprint

Selecting the appropriate architecture for your data warehouse on Azure is rarely a one-size-fits-all decision. It's akin to an architect drafting blueprints for a building; the design for a local community library differs vastly from that of a sprawling international airport. The architecture must meticulously align with the intended purpose, operational scale, and analytical objectives.

Azure provides several well-established architectural patterns, each tailored for distinct business goals, data volumes, velocity requirements, and performance expectations. There isn't a singular "best" way to construct your solution. The optimal choice hinges on your specific use cases, whether that involves powering real-time executive dashboards, supporting advanced AI research, or empowering decentralized business units to manage their own data domains.

For IT professionals and aspiring architects, gaining proficiency in these core blueprints is the essential first step toward designing data platforms that are robust, scalable, and cost-efficient.

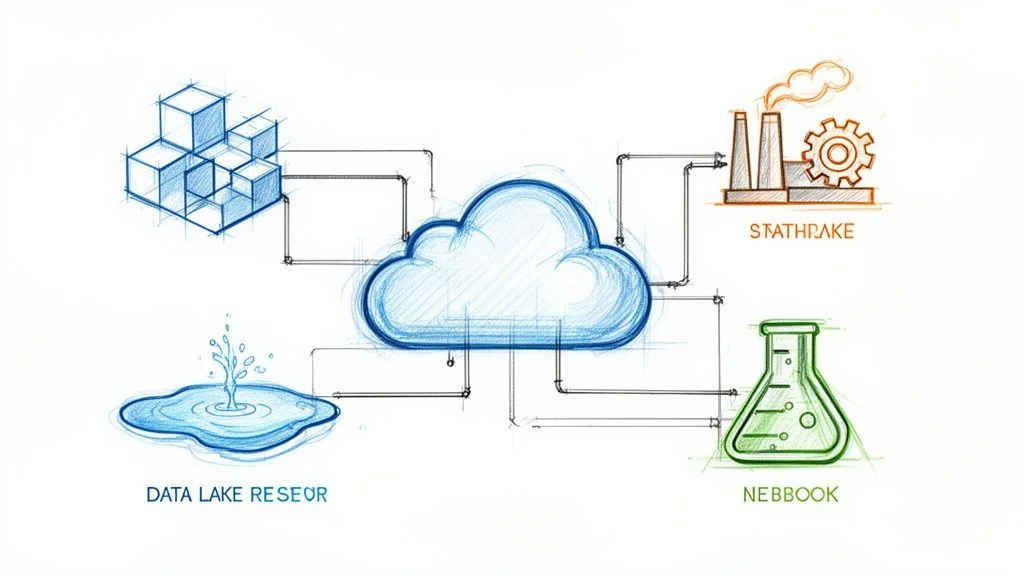

The diagram above visually represents how data typically flows through a contemporary Azure data platform, illustrating the interplay between key services as they collaboratively transform raw information into actionable business insights.

As depicted, the process follows a logical progression. Data is ingested and orchestrated by Azure Data Factory, securely stored in a flexible Azure Data Lake Storage account, and then processed for various analytical requirements using powerful compute engines like Azure Synapse Analytics or Azure Databricks.

The Modern Data Warehouse Pattern

The Modern Data Warehouse (MDW) pattern is arguably the most prevalent and foundational blueprint you'll encounter. For most organizations embarking on enterprise-scale analytics, this is the recommended starting point. It establishes a solid, scalable, and highly performant foundation ideal for classic business intelligence, reporting, and historical analysis.

The typical data flow within an MDW pattern is well-defined and proven:

- Ingestion (ETL/ELT): Azure Data Factory acts as the primary orchestrator, connecting to a multitude of source systems—CRMs, ERPs, transactional databases, SaaS applications—and efficiently pulling in raw data.

- Raw Data Storage: All ingested raw data is landed in Azure Data Lake Storage (ADLS) Gen2. This serves as the cost-effective, massive reservoir for holding diverse data types in their original format, enabling schema-on-read flexibility.

- Data Preparation and Transformation: From the data lake, Data Factory pipelines, Synapse Spark pools, or Databricks notebooks are employed to cleanse, shape, enrich, and structure the data. This crucial step transforms raw data into a format optimized for analytical queries.

- Analytical Serving Layer: The refined, curated data is then loaded into dedicated SQL pools within Azure Synapse Analytics. This high-performance engine is specifically optimized for lightning-fast analytical queries, supporting complex aggregations and joins.

- Visualization and Reporting: Finally, business analysts and end-users connect their visualization tools, most commonly Power BI, directly to Synapse to construct interactive reports, dashboards, and perform ad-hoc analysis.

This architecture excels at handling large volumes of structured and semi-structured data for traditional reporting needs. If you're pursuing a certification like AZ-305: Designing Microsoft Azure Infrastructure Solutions, understanding this pattern is fundamental to designing robust data solutions. You can explore these kinds of design principles in greater depth in our AZ-305 study guide.

The Real-Time Lambda Architecture

What if your business demands insights in real-time, where yesterday's data is already outdated? For use cases such as real-time fraud detection, live streaming analytics, or immediate IoT data processing, insights that are hours old are simply insufficient. This is precisely where the Lambda architecture provides a powerful solution. It's an ingenious pattern that skillfully combines both batch processing for historical accuracy and real-time stream processing for immediate insights, offering a comprehensive view.

The core principle of the Lambda architecture is to process data through two parallel, complementary streams. One stream is dedicated to creating a complete, historically accurate record via batch processing, while the other delivers immediate insights from live, incoming data. Queries then intelligently merge results from both streams to present a unified and current picture.

This dual-path approach operates as follows:

- The Batch Layer (Cold Path): This component is essentially the MDW pattern we just discussed. Data is collected, processed, and refined in large, scheduled batches to construct a comprehensive, historically accurate view of the business state. This layer serves as the ultimate source of truth.

- The Speed Layer (Hot Path): This path processes data the moment it arrives. Services like Azure Stream Analytics, Azure Event Hubs, or Azure Functions are employed to analyze data streams on the fly, generating immediate results for real-time dashboards, alerts, and operational monitoring.

When a user initiates a query, the system intelligently federates and blends data from both the historical batch layer and the live speed layer, presenting a unified, low-latency, and fully current view of the data.
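To make the merge step concrete, here is a minimal in-memory sketch of the two paths and the query-time blend. It is a conceptual model only: the event shape, class names, and `query` function are assumptions for illustration, and no Azure service is involved.

```python
from collections import Counter


def build_batch_view(historical_events):
    """Batch layer (cold path): recompute totals from the full history."""
    view = Counter()
    for event in historical_events:
        view[event["region"]] += event["amount"]
    return view


class SpeedLayer:
    """Speed layer (hot path): incremental totals for events not yet batched."""

    def __init__(self):
        self.view = Counter()

    def ingest(self, event):
        # Update the running total the moment an event arrives.
        self.view[event["region"]] += event["amount"]

    def reset(self):
        # Called once a batch run has absorbed the recent events.
        self.view.clear()


def query(batch_view, speed_layer, region):
    """Serving step: blend the historical and live views at query time."""
    return batch_view[region] + speed_layer.view[region]
```

The design choice to note is that the speed layer only holds what the batch layer has not processed yet, so it can be cleared after every batch run without losing history.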

The Decentralized Data Mesh

For extremely large, globally distributed enterprises with diverse data needs, a single, centralized data warehouse can eventually evolve into a bottleneck, hindering agility and innovation. The Data Mesh architecture offers a radical departure from traditional centralized models. It champions a decentralized paradigm where data is treated as a product, owned and managed by the business domains that understand it best.

In a data mesh, individual business domains—such as Marketing, Supply Chain, Customer Service, or Finance—are made responsible for owning, developing, and serving their own "data products." While they operate within a common set of shared platform tools and governance principles, they maintain full autonomy over their data's quality, discoverability, accessibility, and lifecycle.

This model fosters a culture of data ownership and accountability. It empowers domain teams to operate with significantly greater speed and autonomy, as they are no longer dependent on a central data team to fulfill every data request. The shift is from a monolithic data warehouse to an interconnected network of domain-owned, interoperable data services. Understanding this organizational and technical shift is increasingly vital for senior data professionals.

Reflection Prompt: If you were designing a data platform for a global e-commerce company, which architectural pattern—MDW, Lambda, or Data Mesh—would you initially lean towards, and why? What are the key considerations that would influence your final decision?

Optimizing Performance and Managing Costs

So, you've successfully deployed your data warehouse on Azure. This is a significant accomplishment. However, the true mark of expertise—the aspect that distinguishes a good setup from an exceptional one—lies in ensuring it operates flawlessly, with peak performance, all while meticulously controlling expenses. Any professional can provision cloud resources, but a skilled data engineer knows how to tune the engine for maximum velocity while vigilantly monitoring the fuel gauge.

Achieving this balance isn't merely theoretical; it demands practical, informed decisions day in and day out. It means comprehending the trade-offs between various service tiers, leveraging advanced techniques to accelerate query performance, and implementing a robust strategy to keep your monthly Azure consumption in check. This is where operational excellence truly comes into play.

Choosing Your Synapse SQL Pool Engine Wisely

One of the foundational decisions within Azure Synapse Analytics is selecting the appropriate SQL pool engine. You essentially have two primary options: Dedicated or Serverless. Consider it this way: are you opting for a private, reserved supercomputer for consistent, heavy workloads, or are you utilizing a high-speed, on-demand query service where you only pay for what you use?

- Dedicated SQL Pools: This is your reserved supercomputer. You provision and reserve a specific amount of compute power, quantified in Data Warehouse Units (DWUs). This model guarantees consistent, predictable performance, which is indispensable for mission-critical enterprise reports and dashboards that demand unwavering speed and availability. Think of production BI reporting with strict SLAs.

- Serverless SQL Pools: This is your pay-per-query service. No compute power is pre-provisioned or reserved; you are billed solely for the amount of data processed by each query you execute. It's the perfect solution for ad-hoc analysis, exploratory data discovery, data lake querying, or unpredictable workloads where paying for idle compute resources would be inefficient.

Fortunately, you are not permanently locked into a single choice. A common and highly effective pattern is to begin with Serverless SQL pools for initial data exploration, development, and ad-hoc analysis. Once specific production reports or analytical patterns stabilize and demonstrate consistent performance needs, you can transition those critical workloads to a Dedicated SQL pool for guaranteed speed and optimized cost performance at scale. This flexibility is a key aspect of Azure's appeal.
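One rough way to reason about when that transition pays off is a break-even calculation between the two billing models. The sketch below assumes serverless is billed per terabyte processed and dedicated per hour of provisioned compute; both prices are hypothetical placeholders, so substitute current figures from the Azure Pricing Calculator before drawing conclusions.

```python
# Hypothetical placeholder prices, NOT Azure list prices.
SERVERLESS_PRICE_PER_TB = 5.00    # assumed cost per TB processed
DEDICATED_PRICE_PER_HOUR = 1.50   # assumed cost per hour at a chosen DWU level


def serverless_monthly_cost(tb_processed, price_per_tb=SERVERLESS_PRICE_PER_TB):
    """Serverless: pay only for the data each query processes."""
    return tb_processed * price_per_tb


def dedicated_monthly_cost(hours_running, price_per_hour=DEDICATED_PRICE_PER_HOUR):
    """Dedicated: pay for every hour the pool is running, busy or idle."""
    return hours_running * price_per_hour


def break_even_tb(hours_running,
                  price_per_hour=DEDICATED_PRICE_PER_HOUR,
                  price_per_tb=SERVERLESS_PRICE_PER_TB):
    """TB processed per month at which both models cost the same."""
    return dedicated_monthly_cost(hours_running, price_per_hour) / price_per_tb


def cheaper_option(tb_processed, hours_running):
    """Compare the two models on cost alone for one month of usage."""
    serverless = serverless_monthly_cost(tb_processed)
    dedicated = dedicated_monthly_cost(hours_running)
    return "serverless" if serverless <= dedicated else "dedicated"
```

Cost is only one input. A workload with strict SLAs may still belong on a dedicated pool even below the break-even point, because predictable performance is what the reservation buys.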

Advanced Performance Tuning Techniques

Once your data warehouse is operational, the focus shifts to continuous optimization. Slow-running queries are a primary performance bottleneck, but the good news is that most can be effectively remediated with the right tuning techniques.

One of the most potent tools in your data warehousing toolkit is the materialized view. A standard view merely stores the query definition and executes it every time it's accessed. A materialized view, however, pre-computes the results of a complex query and physically stores them as if they were a regular table. When you query a materialized view, you're retrieving already-calculated data, leading to dramatically faster response times.

Materialized views are a transformative capability for executive dashboards and high-traffic reporting. Imagine a dashboard populated with complex charts and Key Performance Indicators (KPIs). Instead of forcing the underlying system to re-run heavy aggregations every time the page loads, you can pre-calculate all these metrics into a materialized view. The outcome? Sub-second data retrieval and a superior user experience.
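The difference between the two kinds of view can be simulated locally. SQLite has no materialized views, so this sketch uses a pre-aggregated table as a stand-in; the table and view names are hypothetical. One important difference from the simulation: a Synapse dedicated SQL pool keeps a materialized view in sync with its base tables for you, whereas the stand-in below needs a manual refresh.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE sales (region TEXT, amount REAL);
    INSERT INTO sales VALUES ('EMEA', 100), ('EMEA', 50), ('APAC', 70);

    -- Standard view: only the query text is stored and it reruns on every read.
    CREATE VIEW v_sales_by_region AS
        SELECT region, SUM(amount) AS total FROM sales GROUP BY region;

    -- Stand-in for a materialized view: the aggregated rows are stored physically.
    CREATE TABLE mv_sales_by_region AS
        SELECT region, SUM(amount) AS total FROM sales GROUP BY region;
""")


def refresh_materialized(conn):
    """Recompute the stored result (a manual step in this simulation)."""
    with conn:  # commits on success, rolls back on error
        conn.execute("DELETE FROM mv_sales_by_region")
        conn.execute(
            "INSERT INTO mv_sales_by_region "
            "SELECT region, SUM(amount) FROM sales GROUP BY region"
        )


def totals(conn, relation):
    """Read region totals; `relation` is a fixed internal name, never user input."""
    return dict(conn.execute(f"SELECT region, total FROM {relation}").fetchall())
```

Reading from `mv_sales_by_region` skips the aggregation entirely, which is the source of the speedup. The trade-off is extra storage and the work of keeping the stored result current.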

Another critical strategy, particularly within Synapse Dedicated SQL Pools, is workload management. This feature allows you to act as a traffic controller for your queries. You can define rules to assign higher priority and allocate more compute resources to critical reports (e.g., for the CEO or monthly financial close), while ensuring that less urgent or exploratory queries do not monopolize system resources. This is essential for preventing resource contention and reliably meeting your service level agreements (SLAs).
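The traffic-controller idea can be illustrated with a small priority queue. This sketch models only the ordering of waiting queries by importance; it does not model resource allocation, and the class, query names, and numeric ranks are assumptions made for the example. The five importance names follow the levels Synapse uses for workload importance.

```python
import heapq
import itertools

# Lower rank runs first. The numeric ranks are this sketch's own convention.
IMPORTANCE_RANK = {
    "high": 0,
    "above_normal": 1,
    "normal": 2,
    "below_normal": 3,
    "low": 4,
}


class QueryQueue:
    """Release waiting queries by importance, then by arrival order."""

    def __init__(self):
        self._heap = []
        self._arrival = itertools.count()  # tie-breaker keeps FIFO within a level

    def submit(self, name, importance="normal"):
        rank = IMPORTANCE_RANK[importance]
        heapq.heappush(self._heap, (rank, next(self._arrival), name))

    def next_query(self):
        return heapq.heappop(self._heap)[2]

    def __len__(self):
        return len(self._heap)
```

With this queue, a `high` importance financial-close query submitted after a `low` importance exploratory query is still released first, which is the behavior the paragraph above describes.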

Actionable Cost Management Strategies

Cloud computing costs have a tendency to escalate discreetly if not actively monitored and managed. The key is to adopt a proactive, rather than reactive, approach. Fortunately, Azure provides a comprehensive suite of tools and strategies to maintain stringent control over your spending.

- Model Your Costs Pre-Deployment: Before provisioning any resources, diligently use the Azure Pricing Calculator to create detailed cost forecasts. This provides a realistic baseline for your expected monthly expenditure.

- Establish Budget Alerts: Avoid unwelcome surprises at month-end. Within the Azure portal, configure budgets for your subscriptions or specific resource groups. Set up automated alerts to notify you when you reach 50%, 75%, and 90% of your allocated budget.

- Leverage Reservations for Consistent Workloads: If you have predictable, long-running workloads, such as a Dedicated SQL Pool for core business reporting, investigate Azure Reservations. By committing to a one- or three-year term, you can secure discounts of up to 65% off the standard pay-as-you-go rates.

- Implement Smart Pausing and Scaling: This is often the quickest win for cost reduction. If your Dedicated SQL Pool is not actively processing data overnight, on weekends, or during off-peak hours, pause it! You immediately cease paying for compute resources the moment it's paused. Similarly, develop routines to scale your resources up during periods of peak demand and scale them back down during quieter times. This dynamic management prevents over-provisioning.
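Two of the strategies above reduce to simple arithmetic, sketched here. The 50%, 75%, and 90% thresholds come from the list; the hourly rate and schedule in any call are hypothetical inputs, and the functions are illustrative helpers rather than part of any Azure SDK.

```python
ALERT_THRESHOLDS = (0.50, 0.75, 0.90)  # fractions of the budget, as listed above


def crossed_thresholds(spend, budget, thresholds=ALERT_THRESHOLDS):
    """Return the alert thresholds that current spend has reached."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    used = spend / budget
    return [t for t in thresholds if used >= t]


def monthly_compute_cost(hourly_rate, hours_per_day, days=30):
    """Compute cost for a pool that runs a fixed number of hours each day."""
    return hourly_rate * hours_per_day * days


def pause_savings(hourly_rate, active_hours_per_day, days=30):
    """Compute cost avoided by pausing outside the active hours."""
    always_on = monthly_compute_cost(hourly_rate, 24, days)
    with_pausing = monthly_compute_cost(hourly_rate, active_hours_per_day, days)
    return always_on - with_pausing
```

For example, a pool that is only needed 12 hours a day avoids half of its monthly compute cost when paused for the rest. Storage is billed separately and continues while the pool is paused, so the saving applies to compute only.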

Reflection Prompt: Given a scenario where a critical daily report consistently runs slowly and is impacting business decisions, but ad-hoc queries are frequent yet unpredictable, how would you apply the concepts of SQL Pool choice, materialized views, and workload management to optimize both performance and cost?

Implementing Essential Data Warehouse Security

In the realm of big data, security is not merely a compliance checkbox; it is the absolute bedrock upon which everything else is built. Constructing a powerful data warehouse on Azure is an impressive technical feat, but it represents only half the challenge. If your invaluable data is not meticulously protected with multiple, robust layers of defense, you're not just risking regulatory penalties or reputational damage; you're potentially jeopardizing the entire operational integrity and trust of your business.

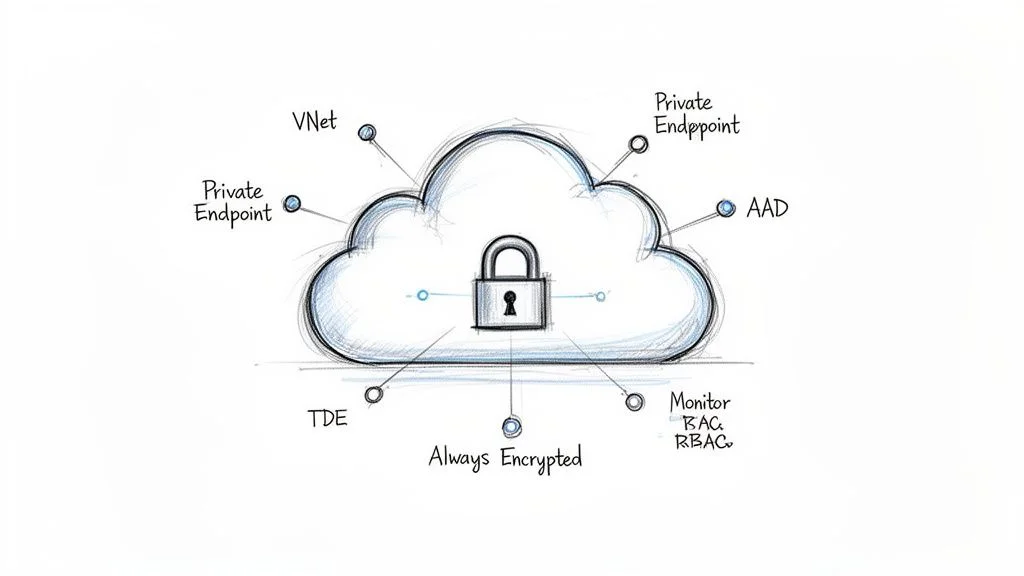

A comprehensive security strategy demands protection from every conceivable angle. This encompasses controlling network access, meticulously managing user identities and permissions, and ensuring the data itself remains unreadable to unauthorized parties, whether it's stored at rest or actively being processed.

Isolating Your Network Perimeter

Your primary line of defense involves constructing a virtual fortress around your critical data services. The most significant external threat typically originates from the public internet, making the objective to severely restrict or entirely eliminate direct public exposure. Azure provides sophisticated networking tools to achieve precisely this.

- Azure Virtual Networks (VNet): Conceptualize an Azure VNet as your own private, logically isolated section of the Azure cloud. By deploying your Azure Synapse workspace, Data Lake Storage, and other critical data services within a VNet, you effectively remove them from direct exposure to the public internet. This creates a secure, private network boundary.

- Private Endpoints: These act as secure, private "gateways" into your Azure services. A Private Endpoint assigns a private IP address from your VNet to a specific Azure service (e.g., your Synapse workspace, Data Lake account). All network traffic directed to and from your data warehouse then traverses Microsoft's highly secure backbone network, never touching the public internet.

When VNets and Private Endpoints are deployed in combination, they establish an incredibly robust and effective security barrier. This configuration ensures that only authorized resources within your own controlled and secured network can even attempt to establish a connection to your data warehouse, rendering it effectively invisible to the external world.

Enforcing Identity and Access Control

With your network perimeter fortified, the subsequent security layer focuses on granularly managing who can access your data warehouse and precisely what operations they are authorized to perform. The guiding rule here is the principle of least privilege: every user, service principal, or application should be granted the absolute minimum set of permissions necessary to fulfill its assigned function, and nothing more.

Security is not a one-time configuration; it is an ongoing, vigilant process. Your identity and access policies are dynamic documents that must continuously evolve with your team structure, data assets, and compliance requirements, ensuring that access rights consistently remain appropriate and minimal.

Azure Active Directory (AAD) (now Microsoft Entra ID) should serve as your singular, authoritative source for managing all user identities and access. Eschew the use of legacy local SQL accounts wherever possible. Instead, integrate all authentication and authorization with AAD. This integration enables the enforcement of robust authentication policies such as Multi-Factor Authentication (MFA), a straightforward yet exceptionally powerful measure that can thwart the vast majority of identity-based attacks.

To protect your valuable data warehouse assets, it's vital to implement robust Data Security Best Practices. From there, you can meticulously define permissions using Role-Based Access Control (RBAC). RBAC allows for highly granular control. For instance, a data analyst might be granted only read-only access to specific tables or views within Synapse, while a data engineer receives permissions to design, build, and modify data ingestion pipelines in Azure Data Factory. This precise control prevents unauthorized data exposure or manipulation.
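The analyst and engineer example can be modeled as a small role-to-permission lookup. This is a conceptual sketch of role-based, least-privilege checks, not the Azure RBAC API: the role names, resource identifiers, and principals are all hypothetical.

```python
# Hypothetical roles: each grants only the (resource, action) pairs it needs.
ROLE_PERMISSIONS = {
    "data_analyst": {
        ("synapse.sales_views", "read"),
    },
    "data_engineer": {
        ("synapse.sales_views", "read"),
        ("adf.pipelines", "read"),
        ("adf.pipelines", "write"),
    },
}

# Hypothetical role assignments for two principals.
ASSIGNMENTS = {
    "analyst@example.com": {"data_analyst"},
    "engineer@example.com": {"data_engineer"},
}


def is_allowed(principal, resource, action):
    """Deny by default; allow only if an assigned role grants the exact pair."""
    roles = ASSIGNMENTS.get(principal, set())
    return any(
        (resource, action) in ROLE_PERMISSIONS.get(role, set())
        for role in roles
    )
```

The deny-by-default lookup is the point of the sketch: an unknown principal or an unlisted action is rejected without any explicit deny rule.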

Protecting Data at Rest and in Use

Even with impeccably configured network and identity controls, a responsible security posture mandates operating under the assumption that a breach, however unlikely, remains a possibility. This is where data encryption becomes your ultimate line of defense, rendering the raw data itself completely unintelligible and useless to an unauthorized attacker.

- Transparent Data Encryption (TDE): This is a fundamental, industry-standard security feature that encrypts your entire database while it is "at rest" (i.e., stored on disk). TDE protects your underlying data files, transaction log files, and backups without requiring any modifications to your application code. It is on by default for new databases in Azure SQL Database; for dedicated SQL pools in Azure Synapse Analytics, confirm that it is enabled, since it may need to be turned on explicitly. With TDE in place, data remains encrypted even if the storage media were physically compromised.

- Always Encrypted: For your most sensitive data, such as personally identifiable information (PII) like social security numbers, credit card details, or medical records, TDE alone may not be sufficient. Always Encrypted keeps data encrypted even while it is being queried and processed: it stays encrypted all the way from the client application to the database engine and back. As a result, even a database administrator with full system access cannot view the raw, unencrypted values, which significantly reduces insider threat vectors. Always Encrypted is a feature of the SQL Server and Azure SQL family, so confirm against current documentation that your target engine supports it before designing around it.
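One way to confirm encryption at rest is to query `sys.databases`, whose `is_encrypted` column reports TDE state. The sketch below is a minimal illustration, assuming the rows have already been fetched by a database client of your choice (not shown) as `(name, is_encrypted)` pairs. The function names and sample database names are hypothetical, and skipping `master` by default is an assumption that you only audit user databases.

```python
# Query you might run through your own database client (client code not shown).
TDE_STATUS_QUERY = "SELECT name, is_encrypted FROM sys.databases;"


def unencrypted_databases(rows, ignore=("master",)):
    """Return sorted names of databases whose is_encrypted flag is not set.

    `rows` is an iterable of (name, is_encrypted) pairs in the shape
    returned by TDE_STATUS_QUERY. Names listed in `ignore` are skipped.
    """
    return sorted(
        name
        for name, is_encrypted in rows
        if name not in ignore and not is_encrypted
    )


def enable_tde_statement(database: str) -> str:
    """Build the T-SQL that turns TDE on for one database."""
    quoted = "[" + database.replace("]", "]]") + "]"
    return f"ALTER DATABASE {quoted} SET ENCRYPTION ON;"
```

A scheduled job could run the query, pass the rows to `unencrypted_databases`, and raise an alert (or emit the `ALTER DATABASE` statement for review) whenever the list is non-empty.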

This layered, defense-in-depth approach is increasingly critical as enterprises adopt cloud-native solutions. The data warehouse as a service (DWaaS) segment, a major focus of certification exams like DP-203, is projected to command 42.30% of revenue in 2025. As this market segment expands to an estimated $43.16 billion by 2035, mastering these advanced security controls becomes an essential skill for any Azure professional aiming for a leadership role in data platforms. For further insights into these trends, you can read more about Azure's market share.

Reflection Prompt: Your organization is preparing for a compliance audit that requires strict adherence to data privacy regulations. How would you leverage a combination of VNets, Private Endpoints, AAD with MFA, RBAC, and Always Encrypted to demonstrate a robust security posture for your Azure data warehouse?

Your Path to Azure Data Certification and Beyond

Grasping the intricate architecture and diverse services required for an effective Azure data warehouse is one thing. Proving your practical ability to design, build, and manage such a system is another entirely. This is precisely where professional certifications provide a tangible, industry-recognized validation of your skills and expertise to potential employers.

For any IT professional serious about forging a successful data career on Azure, the DP-203: Data Engineering on Microsoft Azure certification is widely regarded as the gold standard. Every concept we've thoroughly explored in this guide—from the integrated power of Azure Synapse Analytics and the orchestration capabilities of Azure Data Factory to advanced security models and granular performance tuning strategies—is not merely informative; it forms the core curriculum upon which the DP-203 exam is constructed.

Tying What You've Learned to the DP-203 Exam

Think of this guide as a real-world blueprint for data engineering that aligns closely with the DP-203 certification exam objectives. The exam favors practical, scenario-based challenges over abstract theory, testing your ability to apply what you know.

- Choosing the Right Tools: You'll be presented with a specific business problem and expected to determine whether Azure Synapse Analytics, Azure Databricks, or Azure Data Lake Storage (or a combination) is the most appropriate and efficient solution.

- Designing the Solution: You'll need to confidently identify when a Modern Data Warehouse pattern is best suited for traditional BI versus, for example, a Lambda architecture for processing streaming data.

- Balancing Performance and Cost: Expect questions that assess your understanding of when to employ a Serverless SQL pool for flexible ad-hoc queries versus a Dedicated pool for predictable, high-performance production workloads.

- Locking Down Data: You'll be tested on your ability to properly secure a data warehouse environment using Azure Virtual Networks, Private Endpoints, and granular Role-Based Access Control (RBAC).

When you master these critical topics, you are not simply memorizing facts for a test. You are actively building a robust mental toolkit for solving complex, real-world data puzzles. This training enables you to ask the right questions and instinctively know which Azure service is the most effective tool for any given job—a skill that is invaluable across virtually all data-centric roles.

A certification represents more than just a credential. The rigorous process of studying for it compels you to develop a structured approach to problem-solving. It trains you to think critically, analyze requirements, and confidently select the optimal Azure services to build scalable, secure, and cost-effective data solutions—a skill highly valued by employers.

Sample DP-203 Exam Question

Let's apply these concepts with a question similar to what you might encounter on the DP-203 exam.

Scenario: Your company requires a new analytics platform to process historical sales data from an on-premises SQL Server database and simultaneously analyze real-time customer clickstream data from its high-traffic website. The ultimate goal is to provide a unified analytics environment where business analysts can generate historical reports and view live, low-latency dashboards. A key non-functional requirement is to minimize costs for unpredictable, ad-hoc queries performed by data scientists.

Which combination of Azure services should you recommend to meet these requirements?

- Azure SQL Database and Azure Stream Analytics

- Azure Synapse Analytics (Dedicated and Serverless SQL pools) and Azure Event Hubs

- Azure Databricks and Azure Data Lake Storage

- Azure Data Factory and Azure Blob Storage

Correct Answer and Explanation: The correct answer is the second option, Azure Synapse Analytics (Dedicated and Serverless SQL pools) and Azure Event Hubs. Azure Synapse Analytics serves as the unified platform for both traditional data warehousing and big data analytics. Its Dedicated SQL pools can handle the predictable performance requirements of historical reporting on the sales data, offering consistent speed. Serverless SQL pools within Synapse are well suited to the unpredictable, ad-hoc queries from data scientists against the data lake, because you pay only for the data each query processes. To ingest the high-volume, real-time clickstream data, Azure Event Hubs is purpose-built for scalable streaming ingestion. Of the four options, this is the only one that covers historical reporting, real-time ingestion, and cost control for ad-hoc queries together. In a real deployment you would still need a pipeline tool such as Azure Data Factory to move the on-premises SQL Server data into the platform.

Looking Past the Exam to Your Thriving Career

Achieving the DP-203 certification is a tremendous milestone, but it should be viewed as a powerful launchpad for your career, not an end goal. The practical, validated skills you've just proven are precisely what organizations globally are actively seeking in today's data-driven landscape.

Examine job descriptions for roles such as Data Engineer, Analytics Engineer, Cloud Architect, or Machine Learning Engineer. You will consistently find proficiency in Azure data services listed as a non-negotiable, core requirement.

By genuinely understanding how to design, build, and effectively manage a sophisticated data warehouse on Azure, you're not just preparing for an exam. You are making a strategic investment in a set of practical, high-demand skills that will empower you to lead impactful projects, deliver measurable business value, and build a fulfilling, resilient career that you can be truly proud of.

Frequently Asked Questions

As you delve deeper into the intricate world of data warehousing on Azure, certain common questions invariably arise. Let's address a few of the most frequently asked, clarifying any potential confusion to enable you to move forward with unwavering confidence.

What Is the Main Difference Between a Data Warehouse and a Data Lake?

This is a fundamental and frequently asked question. Think of a data warehouse as a meticulously organized, highly curated library. Every "book" (your data) has undergone rigorous selection, cleansing, structuring, and placement onto specific shelves according to a predefined schema. It's pristine, reliable, and optimized for immediate access for structured reports and predefined analyses.

A data lake, conversely, is more akin to a vast, sprawling, raw archive. It's designed to accept everything: polished, structured reports and relational tables, but also messy, unstructured interview transcripts, audio recordings, video files, and raw IoT sensor data. It stores data in its original format, acting as an immense repository of untapped potential. In a modern data warehouse architecture on Azure, these two concepts are complementary rather than mutually exclusive. You typically use Azure Data Lake Storage for cost-effective, large-scale storage of raw and semi-processed data, while Azure Synapse Analytics (with its SQL pools) serves as the optimized, polished library layer for high-performance analytical querying and business intelligence.
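The contrast can be sketched in a few lines of Python. This is a toy model, not how Synapse or Data Lake Storage is implemented: `SALES_SCHEMA`, the field names, and the sample records are hypothetical. The warehouse path validates each record against a fixed schema before storing it (often called schema-on-write), while the lake path stores raw text untouched and applies structure only when the data is read (schema-on-read).

```python
import json

# Hypothetical warehouse schema: column name -> required Python type.
SALES_SCHEMA = {"order_id": int, "region": str, "amount": float}


def load_into_warehouse(table: list, record: dict) -> None:
    """Schema-on-write: reject anything that does not match the schema."""
    if set(record) != set(SALES_SCHEMA):
        raise ValueError(f"unexpected columns: {sorted(record)}")
    for column, expected in SALES_SCHEMA.items():
        if not isinstance(record[column], expected):
            raise TypeError(f"{column} must be {expected.__name__}")
    table.append(record)


def land_in_lake(lake: list, raw_line: str) -> None:
    """Lake: keep the raw text exactly as it arrived, with no validation."""
    lake.append(raw_line)


def read_amounts_from_lake(lake: list) -> list:
    """Schema-on-read: interpret raw lines at query time, skipping misfits."""
    amounts = []
    for raw_line in lake:
        try:
            amounts.append(float(json.loads(raw_line)["amount"]))
        except (ValueError, KeyError, TypeError):
            continue  # not JSON, or no usable amount field
    return amounts
```

The warehouse function pays the validation cost up front so every later query can trust the data; the lake functions defer that cost, which keeps ingestion cheap but pushes interpretation onto each reader.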

Should I Use Azure Synapse Analytics or Azure Databricks for My Project?

The "Synapse or Databricks?" debate is a common one, but the truth is, it's rarely a direct competition—it's primarily a partnership. These powerful services are engineered to work synergistically, each leveraging its unique strengths for different stages or types of data workloads.

- Consider Azure Synapse Analytics as your enterprise-grade powerhouse for data warehousing and integrated analytics. It's purpose-built for high-performance SQL analytics, serving curated data to business intelligence tools (like Power BI), and providing a unified environment for both structured and semi-structured data processing. It's the go-to tool for your business analysts and for delivering production-ready data marts.

- Consider Azure Databricks as your advanced, collaborative workshop for data science, machine learning, and complex, code-centric data engineering. This is where data scientists and engineers thrive, working with Python, Scala, or R on heavy-duty data transformations, building sophisticated predictive models, developing AI applications, and performing exploratory big data analytics using Apache Spark.

A highly effective and prevalent architectural pattern involves utilizing Databricks to perform complex, exploratory data cleansing, feature engineering, and advanced analytical modeling on raw data residing in a data lake. The resulting clean, refined, and often aggregated datasets are then efficiently loaded into Synapse Analytics' SQL pools for broad consumption across the business, enabling self-service BI and operational reporting.

The most robust and agile data architectures often skillfully combine the strengths of multiple specialized services. Synapse provides the governance, performance, and unification for enterprise-wide analytics, while Databricks offers the specialized, collaborative environment essential for cutting-edge data science and advanced AI initiatives.

How Can I Start Building a Data Warehouse on Azure with a Small Budget?

You absolutely do not need a massive budget to embark on building a powerful data warehouse on Azure. Thanks to Azure's flexible, consumption-based pricing model and the prevalence of serverless technologies, you can initiate a robust data warehousing solution by adopting smart, cost-effective strategies. This approach allows you to demonstrate tangible value and iteratively scale your investment as your needs and budget grow.

Here’s a practical, cost-effective game plan for getting started:

- Ingest Data Affordably: Leverage Azure Data Factory using its consumption-based pricing model. You pay for the activity runs and compute time your pipelines actually consume, which can be as little as a few minutes per day on a scheduled basis.

- Store Data Economically: Avoid immediately loading all your raw data into expensive, high-performance databases. Instead, land raw and semi-processed data in Azure Data Lake Storage and move it to cheaper access tiers as it ages. The cool tier suits data you query infrequently, while the archive tier is only appropriate for data you rarely need, because archived blobs must be rehydrated to an online tier before they can be read. Used this way, the lake is a very cost-effective primary landing zone.

- Analyze On-Demand (Serverless First): This is the true game-changer for budget-conscious initiatives. Utilize Azure Synapse's Serverless SQL Pools for your data querying and analytical needs. With Serverless SQL pools, you are billed for the amount of data processed per query, rather than incurring the fixed costs of running a dedicated compute cluster 24/7. This dramatically reduces costs for unpredictable or ad-hoc workloads.

This "serverless-first" methodology empowers you to get a fully functional and scalable data warehouse up and running on Azure without significant upfront financial commitments, making it an ideal approach for proof-of-concepts, departmental solutions, or startups.
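To make the trade-off concrete, here is a rough break-even calculation. The prices are placeholders, not quoted Azure rates: `price_per_tb` and `dedicated_hourly_rate` are assumptions that you should replace with current figures for your region from the Azure pricing page. The model also ignores storage costs and any per-query minimum charge.

```python
def serverless_monthly_cost(tb_processed: float, price_per_tb: float) -> float:
    """Serverless SQL pool: cost scales with data processed by queries."""
    return tb_processed * price_per_tb


def dedicated_monthly_cost(hours_running: float, dedicated_hourly_rate: float) -> float:
    """Dedicated SQL pool: cost scales with the hours the pool is running."""
    return hours_running * dedicated_hourly_rate


def break_even_tb(
    hours_running: float, dedicated_hourly_rate: float, price_per_tb: float
) -> float:
    """TB processed per month at which both options cost the same."""
    return dedicated_monthly_cost(hours_running, dedicated_hourly_rate) / price_per_tb


if __name__ == "__main__":
    # Placeholder inputs: an always-on month (730 hours) at assumed rates.
    print(serverless_monthly_cost(2.0, price_per_tb=5.0))
    print(break_even_tb(730, dedicated_hourly_rate=1.5, price_per_tb=5.0))
```

With these placeholder numbers, a dedicated pool left running for a 730-hour month costs the same as processing 219 TB through the serverless pool. A small team processing a few terabytes a month sits far below that line, which is the arithmetic behind starting serverless and moving to dedicated capacity only when query volume justifies it.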


Written by

Alvin Varughese

Founder, MindMesh Academy

Alvin Varughese is the founder of MindMesh Academy and holds 15 professional certifications including AWS Solutions Architect Professional, Azure DevOps Engineer Expert, and ITIL 4. He's held senior engineering and architecture roles at Humana (Fortune 50) and GE Appliances. He built MindMesh Academy to share the study methods and first-principles approach that helped him pass each exam.