10 AWS Best Practices Security Experts Swear By in 2025

MindMesh Academy presents this guide to cloud security on Amazon Web Services (AWS). For IT professionals, effective security measures are a requirement for operational integrity, data protection, and professional growth. As organizations move critical workloads to the cloud, demand for certified security expertise continues to rise. This guide examines ten actionable AWS security best practices that professionals use to protect infrastructure. The material is also relevant to candidates preparing for the AWS Certified Security – Specialty or AWS Certified Solutions Architect – Professional exams.

We provide practical examples and implementation tips to help you build a resilient environment. This resource covers Identity and Access Management (IAM), audit logging with CloudTrail, and advanced network controls within your Virtual Private Cloud (VPC). You will also find details on data encryption at rest and in transit. Learn to use AWS Config for compliance tracking, manage secrets with AWS Secrets Manager, and apply Amazon GuardDuty for threat detection. While these points focus on AWS, a strong security posture supports broader IT strategies, much as general cybersecurity tips for businesses apply across different environments. Use these tactics to verify that your configurations meet internal standards and external regulatory requirements.

These principles provide a foundation for your cloud security strategy. If you are preparing for a certification exam, securing a large enterprise deployment, or building personal technical skills, these methods will help you maintain high security standards. Use this guide as a hands-on manual for technical implementation across your accounts. Start building your defense using these expert-level AWS security techniques to ensure your infrastructure remains protected against modern threats.

1. Identity and Access Management (IAM) - The Principle of Least Privilege

The Principle of Least Privilege (PoLP) is a foundation of AWS security best practices and a core concept tested throughout AWS certification exams. This rule dictates that any user, application, or service holds only the minimum permissions required to perform its specific, legitimate tasks. By strictly limiting access rights, you reduce the potential impact, or "blast radius," of a security incident. PoLP limits the damage an attacker can cause whether the threat comes from stolen credentials, an internal actor, or a misconfigured service, so a single misconfiguration is less likely to lead to a total compromise of your cloud environment.

Caption: The Principle of Least Privilege (PoLP) is foundational for securing your AWS environment, ensuring users and services only have the permissions they absolutely need.

Why PoLP is a Core Practice for IT Professionals

Implementing a least privilege model changes your security strategy from a permissive "allow-by-default" stance to a restrictive "allow-by-exception" approach. This is vital in cloud environments where identities and automated roles multiply rapidly. Candidates for the AWS Certified Solutions Architect – Associate exam often analyze scenarios where a developer needs to deploy applications to Amazon EC2. Under PoLP, that developer receives permissions to launch instances and deploy code to a specific staging area but is blocked from terminating production instances. This restriction prevents accidental outages and unauthorized changes. Real-world organizations like Netflix use fine-grained IAM roles for individual microservices. This ensures that if one service is compromised, the intruder cannot gain broad access to the rest of the infrastructure. This granular control is a direct application of defense in depth.
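
The staging-versus-production scenario above can be sketched as an identity policy. This is a minimal illustration, not a production-ready policy: the `Environment` tag key and its `staging` and `production` values are assumptions introduced here, and the sketch covers only start, stop, and reboot actions. Launching new instances with `ec2:RunInstances` involves additional resource-level statements that are omitted.

```python
import json


def staging_deployer_policy(env_tag_key="Environment"):
    """Build an identity policy dict: manage staging instances, never terminate production.

    The tag key and tag values are hypothetical naming conventions.
    """
    instance_arn = "arn:aws:ec2:*:*:instance/*"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ManageStagingInstances",
                "Effect": "Allow",
                "Action": [
                    "ec2:StartInstances",
                    "ec2:StopInstances",
                    "ec2:RebootInstances",
                ],
                "Resource": instance_arn,
                # Only instances tagged as staging are in scope.
                "Condition": {
                    "StringEquals": {f"aws:ResourceTag/{env_tag_key}": "staging"}
                },
            },
            {
                "Sid": "NeverTerminateProduction",
                "Effect": "Deny",
                "Action": "ec2:TerminateInstances",
                "Resource": instance_arn,
                # An explicit Deny overrides any Allow granted elsewhere.
                "Condition": {
                    "StringEquals": {f"aws:ResourceTag/{env_tag_key}": "production"}
                },
            },
        ],
    }


policy = staging_deployer_policy()
print(json.dumps(policy, indent=2))
```

The explicit Deny is deliberate: even if another policy later grants broad EC2 access, the production guardrail still applies.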

Modern security strategies also incorporate implementing a Zero Trust security model, where no user or device is trusted by default. This approach works alongside PoLP by requiring continuous verification for every access request, which strengthens your cloud security.

Actionable Tips for Implementation and Certification Prep

To apply least privilege and prepare for your certification exams, move beyond basic role creation. Use these strategies to manage permissions effectively:

- Test Before You Deploy: Use the IAM Policy Simulator to verify that your policies function as intended before you apply them to production. This helps you avoid creating overly broad permissions or causing operational downtime due to missing access rights. Troubleshooting these policy issues is a frequent task in practical security exams.

- Audit and Refine Continuously: Use IAM Access Analyzer to find and review permissions that are not being used. This tool helps you right-size policies based on actual activity rather than assumptions. Removing unnecessary access is a vital part of security hygiene and is required for maintaining a strong security framework.

- Set Maximum Permission Ceilings with Boundaries: Use permissions boundaries on IAM users and roles when delegating authority to other teams. A permissions boundary sets the maximum permissions an entity can have: its effective permissions are the intersection of its identity-based policies and the boundary. Even if a user has the authority to create new IAM policies, that user cannot exercise permissions that exceed the boundary you established, and cannot pass them to others provided the delegation policy requires the same boundary on any identities they create. This is a primary control for delegated administration.

- Justify Every Permission: Keep clear records that explain the business reason for every permission granted. This documentation is necessary for meeting compliance standards such as PCI DSS or HIPAA. It also helps build a culture where security is considered early in the development cycle, aligning with ITIL service management.

Mastering these techniques helps you use IAM as a central security enforcement tool. Understanding these mechanics is essential for anyone pursuing AWS security certifications. For more details on creating complex policies, you can review guides on designing policies for least privilege access.
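
The permission-boundary idea from the tips above can be sketched as a set intersection. This is a deliberately simplified model, not how IAM evaluates requests in full: it ignores explicit Deny statements, resources, and conditions, and the action lists are invented for illustration.

```python
from fnmatch import fnmatchcase


def allows(patterns, action):
    """Return True if any action pattern (with * wildcards) matches the action.

    IAM action names are case-insensitive, so both sides are lowercased.
    """
    return any(fnmatchcase(action.lower(), p.lower()) for p in patterns)


def effective_allow(identity_actions, boundary_actions, action):
    """An action is usable only if BOTH the identity policy and the boundary allow it."""
    return allows(identity_actions, action) and allows(boundary_actions, action)


# Hypothetical example: a broad identity policy capped by a narrow boundary.
identity = ["s3:*", "iam:CreatePolicy", "ec2:*"]
boundary = ["s3:GetObject", "s3:PutObject", "ec2:Describe*"]

for action in ["s3:GetObject", "s3:DeleteBucket", "ec2:DescribeInstances", "iam:CreatePolicy"]:
    print(f"{action}: {effective_allow(identity, boundary, action)}")
```

In this model, `iam:CreatePolicy` is granted by the identity policy but falls outside the boundary, so it is not effective.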

Reflection Prompt:

Consider an application you've worked with. How would you apply PoLP to its AWS IAM roles and users? What AWS services would you use to test and validate those permissions?

2. Enable Multi-Factor Authentication (MFA) for Root and Privileged Accounts

Multi-Factor Authentication (MFA) serves as a vital security gate, so that a stolen password alone does not lead to a total account breach. It requires two or more pieces of evidence to verify an identity, which moves security beyond a single point of failure. If an attacker obtains a password, they still cannot gain access without the second factor, such as a physical or virtual token. Experts consider MFA a mandatory component of AWS security best practices and a primary requirement for modern compliance audits.

Why MFA is Non-Negotiable for IT Professionals

Activating MFA is a requirement for the AWS account root user. This user has total control over all resources, including the ability to close the account or view sensitive billing data. A root account compromise is the most damaging event that can happen in an AWS environment. However, security should not end with the root user. Every privileged IAM identity—including administrators, developers, and security leads—needs MFA protection. This prevents attackers from deleting production databases, altering VPC configurations, or leaking proprietary data.

In the AWS Certified SysOps Administrator – Associate exam, you will likely see scenarios where MFA failure leads to system-wide outages. Industry leaders like GitHub and Stripe require MFA for any staff member touching production code or servers. They understand that credential-based attacks are the most common entry point for hackers. In highly regulated sectors like finance or healthcare, firms often require hardware-based security keys. These devices offer a physical layer of protection that software-based TOTP (Time-based One-Time Password) apps cannot match. Physical keys provide a higher standard of security because they are difficult to clone and require physical possession.

Certification Insight:

AWS exams test your ability to configure MFA for different user types. Expect questions about using IAM policies to enforce MFA and selecting the right MFA type for high-risk accounts. You should know when to use a virtual app versus a physical FIDO key for the root user specifically.

Actionable Tips for Effective MFA Implementation

Setting up MFA is the first step, but managing it effectively requires a strategy. Incorporate these practices to ensure your MFA deployment is resilient and helps rather than hinders your team:

- Prioritize Hardware Keys for Root: For your root account, use a hardware security key such as a YubiKey that supports FIDO2/U2F. Unlike virtual apps on a smartphone, physical keys cannot be easily intercepted by malware or tricked by sophisticated phishing sites. Keep this key in a secure physical location like a fireproof safe to prevent loss.

- Enforce MFA with IAM Policies: You can attach a policy to IAM groups that denies access to all AWS services unless the user has authenticated with MFA. This serves as a fail-safe. If someone forgets to enable MFA or tries to use a stolen access key via the CLI, the policy blocks their request. This MFA-protected API access is a high-standard security control.

Code Block: Example IAM policy snippet to enforce MFA for all actions except MFA-related ones.

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "DenyAllExceptMFA",
      "Effect": "Deny",
      "NotAction": [
        "iam:CreateVirtualMFADevice",
        "iam:EnableMFADevice",
        "iam:ListMFADevices",
        "iam:ListVirtualMFADevices",
        "iam:ResyncMFADevice",
        "sts:GetSessionToken"
      ],
      "Resource": "*",
      "Condition": {
        "BoolIfExists": { "aws:MultiFactorAuthPresent": "false" }
      }
    }
  ]
}
```

- Plan for Recovery: Create a clear, written recovery process before an incident occurs. If you lose your root MFA device, you must go through AWS Support identity verification. This process takes time and requires specific documentation. Treat this recovery manual with the same importance as a disaster recovery or business continuity plan. Having this ready ensures you can restore access quickly.

- Test Replacement Procedures: Periodically run drills for replacing MFA devices. If an admin loses their phone or a hardware key breaks, your team should know how to rotate the device without creating a security hole. Testing these procedures ensures that security does not become a bottleneck during an emergency or when onboarding new staff.
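
To see why the policy above uses `BoolIfExists` rather than `Bool`, the following sketch mimics how that operator treats a missing context key. It is a local approximation for reasoning about the condition, not an IAM evaluator; the function names are invented for this example.

```python
def bool_if_exists(context, key, expected):
    """Mimic BoolIfExists: a missing key makes the condition evaluate to true."""
    if key not in context:
        return True
    return str(context[key]).lower() == expected.lower()


def denied_without_mfa(context):
    """Return True when the Deny statement's condition matches the request context."""
    return bool_if_exists(context, "aws:MultiFactorAuthPresent", "false")


# Console session signed in with MFA: the Deny does not apply.
print(denied_without_mfa({"aws:MultiFactorAuthPresent": "true"}))
# Temporary credentials issued without MFA: the Deny applies.
print(denied_without_mfa({"aws:MultiFactorAuthPresent": "false"}))
# Long-term access key: the key is absent from the request, so the Deny applies.
print(denied_without_mfa({}))
```

The third case is the one that matters for stolen access keys: requests signed with long-term credentials carry no MFA key at all, and a plain `Bool` condition would not match them.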

Reflection Prompt:

How would you maintain strict MFA standards across an organization with hundreds of AWS accounts and thousands of users? Consider the logistical challenges of distributing hardware keys versus the security risks of virtual apps.

3. Enable CloudTrail for Comprehensive Audit Logging

AWS CloudTrail is the primary service for governance, compliance, and auditing within your AWS account. It records a history of account activity, logging API calls made through the AWS Management Console, SDKs, command-line tools, and other AWS services. The default event history covers only the most recent 90 days of management events, so long-term retention requires a trail that delivers logs to Amazon S3. These records provide the evidence needed for tracking resource changes or investigating security incidents. This visibility helps you monitor configuration changes and access requests across your environment.

Why CloudTrail is Essential for Security and Compliance

Without a complete log of environment activity, you are blind to potential threats and misconfigurations. CloudTrail identifies the identity of the caller, the exact time of the action, the source IP address, and the specific parameters used in the request. This data is mandatory for meeting strict compliance frameworks like PCI DSS, HIPAA, and GDPR, which require detailed audit histories for all cloud actions.
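
As a rough illustration of the who, when, where, and what described above, this sketch pulls those fields out of a single CloudTrail-style record. The record is fabricated sample data (documentation IP range, placeholder account ID and names), and real records contain many more fields.

```python
import json

# Fabricated sample record for illustration only.
SAMPLE_RECORD = json.loads("""
{
  "eventTime": "2025-01-15T10:32:07Z",
  "eventSource": "s3.amazonaws.com",
  "eventName": "DeleteBucket",
  "awsRegion": "us-east-1",
  "sourceIPAddress": "203.0.113.10",
  "userIdentity": {
    "type": "IAMUser",
    "arn": "arn:aws:iam::111122223333:user/example-user"
  },
  "requestParameters": {"bucketName": "example-bucket"}
}
""")


def summarize(record):
    """Reduce a CloudTrail record to the fields an investigator usually reads first."""
    identity = record.get("userIdentity", {})
    return {
        "who": identity.get("arn", identity.get("type", "unknown")),
        "when": record.get("eventTime"),
        "where": record.get("sourceIPAddress"),
        "what": f'{record.get("eventSource")}:{record.get("eventName")}',
        "parameters": record.get("requestParameters"),
    }


print(json.dumps(summarize(SAMPLE_RECORD), indent=2))
```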

Consider the 2019 Capital One data breach as a practical example. Investigators used CloudTrail logs to trace unauthorized API calls and map the movement of the attacker through the internal network. This level of detail helps security teams determine the exact impact of a breach, understand the blast radius, and close security gaps before they are exploited again. This practice is a key component of an effective AWS security strategy, ensuring that all activities are available for review. These logs serve as a definitive source of truth for both forensics and operational accountability, a focus area in the AWS Certified Security – Specialty exam.

Actionable Tips for Optimal CloudTrail Configuration

To get more value from CloudTrail, go beyond simple activation and integrate these advanced configurations into your security operations:

- Activate on Day One, Across All Regions: Enable CloudTrail in all AWS regions immediately when setting up a new account. Attackers often use neglected regions to hide malicious activity. For multi-account organizations, use an Organization Trail to automatically apply this setting to all existing and future member accounts. This centralizes your log collection and ensures consistent coverage across the entire organization.

- Secure Your Logs in a Dedicated Account: Store CloudTrail logs in a dedicated, restricted S3 bucket in a separate "log archive" AWS account. This account should have read-only access for security teams and strict controls, such as S3 Bucket Policies, Versioning, and MFA Delete, to prevent the modification of logs. This isolation keeps your audit trail safe even if a primary production account is compromised.

- Ensure Log Integrity with Validation: Enable log file validation to create a digitally signed digest file for your logs. This feature provides a cryptographic way to verify that the log files have not been tampered with or altered after AWS delivered them. Forensic investigators require this proof to ensure that the log data is accurate and has not been edited by an intruder.

- Monitor Sensitive Actions with CloudWatch Alarms: Configure CloudWatch Alarms based on critical CloudTrail events to get real-time alerts for high-risk API calls. You should monitor for `StopLogging` or `DeleteTrail` calls, which indicate an attempt to disable logging. Other critical events to watch include `AuthorizeSecurityGroupIngress` with a `0.0.0.0/0` source and IAM changes like `CreateUser` or `AttachUserPolicy`. These alerts enable a fast response to suspicious activity.

- Log Data-Level Activity for Granular Insight: For critical resources like S3 buckets containing sensitive data or DynamoDB tables, enable data events in your trail. This logs object-level API operations such as `GetObject`, `DeleteObject`, and `PutObject`. While this increases log volume, it is the only way to identify data exfiltration attempts or unauthorized access to sensitive information stored in your cloud storage.

Mastering these configurations transforms CloudTrail from a passive logging service into a proactive security monitoring and forensics tool. These skills are highly valued in professional environments and on certification exams. For a more detailed look at the setup process, you can review this guide on configuring service and application logging with CloudTrail and CloudWatch.
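
The high-risk events named in the tips above can be matched with a small filter, sketched here for offline analysis of exported log records. In practice this role is usually played by CloudWatch metric filters or event rules; the sample records are fabricated, and the open-CIDR check searches the serialized request parameters rather than assuming an exact nested layout.

```python
import json

# Event names taken from the monitoring tip above.
ALWAYS_ALERT = {"StopLogging", "DeleteTrail", "CreateUser", "AttachUserPolicy"}


def is_sensitive(record):
    """Return True for records that match the high-risk patterns."""
    name = record.get("eventName", "")
    if name in ALWAYS_ALERT:
        return True
    if name == "AuthorizeSecurityGroupIngress":
        # Look for an open CIDR anywhere in the request parameters.
        return "0.0.0.0/0" in json.dumps(record.get("requestParameters") or {})
    return False


# Fabricated sample records.
records = [
    {"eventName": "DescribeInstances"},
    {"eventName": "StopLogging"},
    {
        "eventName": "AuthorizeSecurityGroupIngress",
        "requestParameters": {"cidrIp": "0.0.0.0/0", "fromPort": 22},
    },
    {
        "eventName": "AuthorizeSecurityGroupIngress",
        "requestParameters": {"cidrIp": "10.0.0.0/16", "fromPort": 443},
    },
]

for r in records:
    print(r["eventName"], is_sensitive(r))
```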

Reflection Prompt:

Imagine a security incident where sensitive data was unexpectedly deleted. How would CloudTrail logs help you investigate, and what specific details would you look for?

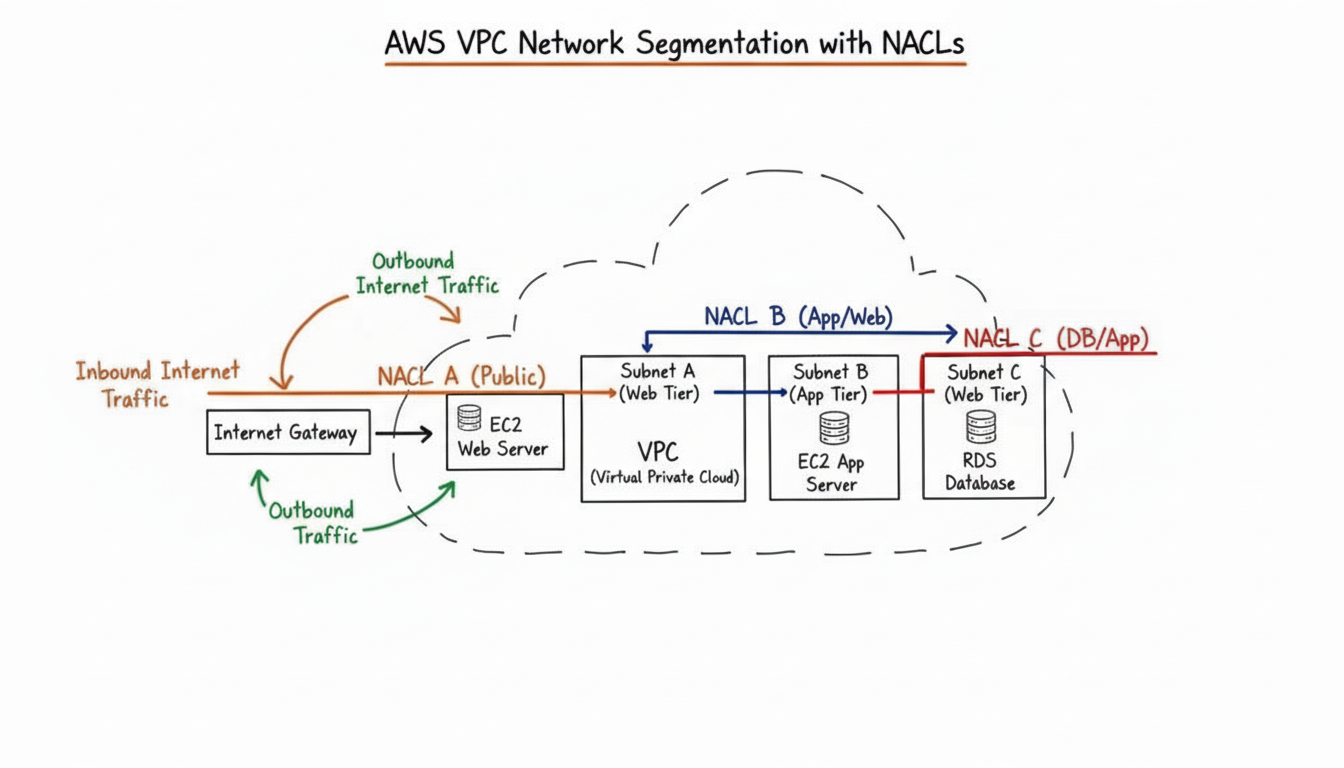

4. Implement VPC Security with Network Access Controls

Managing network traffic is a core requirement of AWS security. Your Virtual Private Cloud (VPC) functions as an isolated network for your cloud resources. Securing this environment requires a layered strategy using stateful Security Groups (SGs) and stateless Network Access Control Lists (NACLs). This approach creates a defense-in-depth model that separates critical resources and strictly controls traffic flowing in and out of your subnets and instances. By filtering traffic at multiple points, you reduce the risk of a single configuration error leading to a major breach.

Caption: AWS VPC security uses layered controls like Security Groups at the instance level and NACLs at the subnet level to filter traffic effectively.

Why VPC Security is Crucial for Cloud Architects

Network segmentation is essential for protecting sensitive data and meeting compliance standards like HIPAA or PCI DSS. For example, a healthcare firm can store its databases in private subnets. By applying strict NACL rules to those subnets, the firm can block all direct internet access while allowing only necessary internal traffic from the application tier. Similarly, e-commerce platforms often use VPC Endpoints to connect application servers to services like Amazon DynamoDB or Amazon S3. This keeps the traffic within the Amazon network, preventing it from crossing the public internet and shrinking the attack surface. These architectural patterns are central themes in the AWS Certified Solutions Architect – Professional exam.

Treating the network as a primary security control allows you to enforce traffic policies that match your specific application needs. Strong VPC security prevents an attacker from moving through your network if one instance is compromised. It ensures that your internal components communicate only through approved protocols and specific ports, which limits the potential damage from any single security incident.

*Caption: Watch this video to understand the fundamentals of AWS VPC and how it serves as the foundation of your cloud network security.*

Actionable Tips for Building a Secure VPC

Building a secure and manageable network requires moving past default settings. You must apply deliberate controls to every layer of the VPC. Incorporate these strategies into your daily network operations:

- Prioritize Security Groups (Instance-Level Stateful Firewall): Use security groups as the first line of defense for individual instances. Because they are stateful, if you allow an inbound request, the return traffic is automatically permitted regardless of outbound rules. Start with a "default deny" policy. Explicitly allow only the ports and source IP ranges or specific Security Groups that your application needs to function. Always evaluate whether an instance truly requires a public IP before making a change.

- Use Descriptive Naming: Assign clear, functional names to your security groups and their associated rules. Examples include `sg-webapp-allow-https-from-alb` or `sg-database-allow-mysql-from-app-sg`. This practice makes audits much faster and simplifies the troubleshooting process. It ensures that any team member can understand the intent of a rule without having to map out every IP address or port manually during an investigation.

- Leverage VPC Flow Logs for Visibility: Turn on VPC Flow Logs to record information about the IP traffic moving through your network interfaces. You can send these logs to Amazon CloudWatch Logs or Amazon S3. Analyzing this data with Amazon Athena helps you spot unusual traffic patterns, debug connectivity failures, and perform forensic investigations after a security event.

- Implement VPC Endpoints for Private Connectivity: Configure VPC endpoints for services like S3 and DynamoDB. Gateway endpoints for these services keep your data traffic within the AWS internal network. For other services, Interface Endpoints powered by PrivateLink provide a private IP address from your subnet. This improves security by removing the need for internet gateways or NAT devices for service communication, which also lowers data transfer costs.

- Audit Rules Regularly (Crucial for Compliance): Perform periodic reviews of all security group and NACL rules to remove any entries that are no longer in use or are too broad. This regular maintenance helps prevent configuration drift, where small changes over time create security gaps. Regular audits are a standard requirement for maintaining high security standards and passing external regulatory inspections.

Developing a multi-layered network defense is a vital skill for anyone working in AWS architecture or security. To see a detailed breakdown of these different tools, check out the various network security components in AWS.
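
For the Flow Logs tip above, a short sketch of what the analysis can look like on a small export. It assumes the default (version 2) space-separated record format; custom formats have different columns. The sample lines use placeholder IDs and addresses.

```python
from collections import Counter

# Column order of the default VPC Flow Logs record format (version 2).
FIELDS = (
    "version account_id interface_id srcaddr dstaddr srcport dstport "
    "protocol packets bytes start end action log_status"
).split()


def parse_line(line):
    """Split one default-format flow log line into a dict, or None if malformed."""
    parts = line.split()
    if len(parts) != len(FIELDS):
        return None
    return dict(zip(FIELDS, parts))


def rejected_by_source(lines):
    """Count REJECT records per (source address, destination port)."""
    counts = Counter()
    for line in lines:
        rec = parse_line(line)
        if rec and rec["action"] == "REJECT":
            counts[(rec["srcaddr"], rec["dstport"])] += 1
    return counts


# Placeholder sample lines.
sample = [
    "2 123456789010 eni-1235b8ca123456789 172.31.16.139 172.31.16.21 20641 22 6 20 4249 1418530010 1418530070 ACCEPT OK",
    "2 123456789010 eni-1235b8ca123456789 172.31.9.69 172.31.9.12 49761 3389 6 20 4249 1418530010 1418530070 REJECT OK",
    "2 123456789010 eni-1235b8ca123456789 172.31.9.69 172.31.9.12 49762 3389 6 20 4249 1418530070 1418530130 REJECT OK",
]

for (src, port), n in rejected_by_source(sample).most_common():
    print(f"{src} -> port {port}: {n} rejected flows")
```

A source that is repeatedly rejected on a management port such as 3389 is a typical starting point for a closer look.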

Reflection Prompt:

Imagine a web application with a public load balancer, app servers in a private subnet, and a database in a second private subnet. Sketch the Security Group and NACL rules required to maintain a least-privilege network. How would you configure the outbound rules for the NACL compared to the Security Group?

5. Encrypt Data at Rest Using AWS KMS and Encryption Services

Encrypting data at rest is a requirement for AWS security and a mandate for many compliance frameworks. This process involves encoding data stored in services like Amazon S3, Amazon RDS, and Amazon EBS. It renders data unreadable to unauthorized users who might gain access to the underlying storage hardware or logic. AWS Key Management Service (KMS) acts as the control center for this process. It provides a managed service to create, control, and audit the cryptographic keys that protect your data across the AWS environment.

Why Encryption at Rest is a Compliance and Security Imperative

Compliance standards like HIPAA, PCI DSS, GDPR, and FedRAMP mandate encryption at rest to protect sensitive information from theft. If an attacker bypasses other security layers to access storage media—such as an exposed EBS snapshot or an S3 bucket with public access—encryption serves as the final layer of protection. This ensures data stays confidential. For example, healthcare providers use KMS to meet HIPAA requirements for Protected Health Information (PHI). Financial institutions often use AWS CloudHSM with KMS to achieve FIPS 140-2 Level 3 compliance for high-assurance workloads. This topic is frequent in security architectural reviews and audits.

This practice shifts security from a reliance on perimeter access controls to a model where protection exists within the data itself. Even if a snapshot or backup is exposed, the data remains secure without the specific decryption key. Mastering this distinction is vital for the current AWS Certified Security – Specialty (SCS-C03) exam.

Actionable Tips for Robust Data-at-Rest Encryption

To implement encryption at rest, you need a strategy that goes beyond checking a box. Use these specific tactics in your security operations:

- Enforce Universal Default Encryption: Enable default encryption on all new S3 buckets, EBS volumes, and RDS instances. This creates a secure baseline to ensure no data is stored in plaintext, even if a developer misses a setting. Use AWS Config rules to monitor and flag any non-compliant resources across your accounts automatically.

- Choose the Right Key Type: Use AWS-managed keys for simplicity when AWS handles the key lifecycle for you. For greater control over key policies and auditability, use customer-managed keys (CMKs). CMKs allow you to define a granular key policy, combined with IAM policies, that specifies exactly who can use the key and for what purpose. This choice is a standard part of architectural design reviews when balancing ease of use with administrative oversight.

- Automate Key Rotation: Enable automatic key rotation for your CMKs in the KMS console. This practice limits the impact of a compromised key by generating new cryptographic material on a schedule, which by default is once a year. KMS keeps older versions available to decrypt existing data without requiring changes to your application code or manual re-encryption.

- Add Encryption Context: Use encryption context when making API calls to KMS. This feature adds authenticated data in the form of key-value pairs that must match during decryption. It prevents keys from being used across different applications or data types, providing an extra layer of validation. The context is also logged in CloudTrail, providing a clear audit trail of key usage.

- Monitor and Audit Key Activity: Set up CloudWatch alarms and CloudTrail logging for all KMS activity. This helps you detect suspicious behavior, such as unauthorized decryption requests, high API call volume, or attempts to delete a key. Immediate alerts for KMS "ScheduleKeyDeletion" events are particularly useful for preventing accidental or malicious data loss.
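
The encryption-context tip above can be sketched as a key policy statement plus a simplified local check. The role ARN and the `AppName` context pair are hypothetical, and `context_satisfies` only approximates the exact-match condition for illustration; KMS itself performs the real evaluation.

```python
import json

PREFIX = "kms:EncryptionContext:"


def decrypt_statement(role_arn, context):
    """Key policy statement: allow kms:Decrypt only when the encryption context matches."""
    return {
        "Sid": "AllowDecryptWithMatchingContext",
        "Effect": "Allow",
        "Principal": {"AWS": role_arn},
        "Action": "kms:Decrypt",
        "Resource": "*",
        "Condition": {
            "StringEquals": {f"{PREFIX}{k}": v for k, v in context.items()}
        },
    }


def context_satisfies(statement, request_context):
    """Simplified check: every required pair must appear in the request's context."""
    required = statement["Condition"]["StringEquals"]
    return all(
        request_context.get(key[len(PREFIX):]) == value
        for key, value in required.items()
    )


# Hypothetical role and context values.
stmt = decrypt_statement(
    "arn:aws:iam::111122223333:role/example-billing-app",
    {"AppName": "billing"},
)
print(json.dumps(stmt, indent=2))
print(context_satisfies(stmt, {"AppName": "billing"}))
print(context_satisfies(stmt, {"AppName": "analytics"}))
```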

Reflection Prompt:

If you had to design a solution for a healthcare application storing sensitive patient data in an S3 bucket and an RDS database, what key management strategy (AWS-managed keys vs. CMKs) would you recommend, and why?

6. Enable Encryption in Transit Using TLS/SSL

Securing data in transit is a required practice in AWS security. This involves using Transport Layer Security (TLS)—the successor to Secure Sockets Layer (SSL)—to create an encrypted channel for data. This applies to data moving between a client and a server or between different services inside and outside the AWS environment. By encrypting these communications, you shield sensitive information from eavesdropping and man-in-the-middle (MITM) attacks. This maintains data integrity and confidentiality during every step of transmission.

Why Encryption in Transit is Critical for Data Protection

Unencrypted network data is vulnerable. It is like a postcard: any observer can read the content during delivery. In a cloud environment, data travels across various networks including the public internet, the AWS backbone, and private VPC networks. This movement increases the attack surface. Implementing encryption in transit is vital for protecting sensitive API calls, user sessions, and critical service-to-service communication. For instance, an e-commerce platform processing payments must use HTTPS (HTTP over TLS) to protect credit card numbers and personally identifiable information (PII). Capturing unencrypted PII can lead to catastrophic data breaches.

Even within a private VPC, microservices should communicate using TLS via an Application Load Balancer. This builds a deeper layer of defense by ensuring that internal traffic is not visible if a single component is compromised. AWS enforces TLS for all its API endpoints by default, which sets a high security standard for any interaction with the platform. This practice also satisfies requirements for major compliance frameworks. PCI DSS, HIPAA, GDPR, and ISO 27001 all mandate encryption for data in transit. Failing to meet these standards can result in expensive penalties, loss of brand reputation, and a permanent drop in customer trust.
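
One place where an encryption-in-transit requirement can be stated declaratively is a resource policy. The sketch below builds an S3 bucket policy that denies any request not sent over TLS, using the `aws:SecureTransport` condition key. The bucket name is a placeholder, and S3 is used here only as a familiar example of the pattern.

```python
import json


def deny_insecure_transport(bucket_name):
    """Bucket policy dict that rejects requests made without TLS."""
    bucket_arn = f"arn:aws:s3:::{bucket_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyInsecureTransport",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                # Cover both the bucket itself and every object in it.
                "Resource": [bucket_arn, f"{bucket_arn}/*"],
                "Condition": {"Bool": {"aws:SecureTransport": "false"}},
            }
        ],
    }


# "example-bucket" is a placeholder name.
print(json.dumps(deny_insecure_transport("example-bucket"), indent=2))
```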

Actionable Tips for Effective In-Transit Encryption

To implement strong encryption in transit, integrate these specific actions into your security protocols:

- Enforce Secure Protocols at the Edge: Start by configuring your public-facing entry points. On Elastic Load Balancing (ELB), serve application traffic through HTTPS listeners on Application Load Balancers and TLS listeners on Network Load Balancers. Do the same for Amazon CloudFront distributions. Where an HTTP listener must remain open, limit it to an "HTTP to HTTPS redirect" rule. This ensures that even if a user tries to connect via an insecure channel, the system automatically switches them to a secure one.

- Manage Certificates Centrally with ACM: Use AWS Certificate Manager (ACM) to provision, manage, and deploy your public and private TLS certificates. ACM handles the heavy lifting of automatic certificate renewals. This is a significant operational benefit because it removes the risk of sudden service outages caused by expired certificates. Centrally managing your certificates in ACM provides better visibility into your overall security posture.

- Strengthen Your TLS Configuration (Security Policies): You must actively disable outdated and vulnerable versions of TLS, such as TLS 1.0 and 1.1. In your ALB listener settings or your CloudFront distribution's security policy, choose a policy that enforces a minimum of TLS 1.2. (The CloudFront Viewer Protocol Policy is a separate setting that controls whether viewers may use plain HTTP at all.) Ensure the policy includes strong, modern cipher suites. This step is vital to prevent downgrade attacks where an attacker forces a connection to use a weaker, crackable encryption method.

- Force Browser-Side Encryption with HSTS: Implement HTTP Strict Transport Security (HSTS) headers in your web application responses. An HSTS header instructs compliant browsers to communicate with your domain exclusively over HTTPS for a defined period. Once a browser has received the header over a secure connection, this applies even if a user manually types "http://" into the address bar or clicks an old HTTP link. It closes most of the window of opportunity for protocol downgrade attacks.

- Verify Your Configuration Regularly: Do not assume your settings are correct forever. Use external tools like the Qualys SSL Labs Server Test or run internal vulnerability scanners to check your public endpoints. These tools provide a technical report on your TLS implementation. They highlight weaknesses such as weak ciphers or legacy protocol support that you might have missed. Make these scans a standard part of your continuous security auditing process.
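
Two of the tips above, a TLS 1.2 minimum and the HSTS header, can be sketched with the Python standard library. This is client-side and application-side illustration only: the load balancer and CloudFront policies themselves are configured in AWS, and the one-year `max-age` is a commonly used value chosen here as an assumption.

```python
import ssl


def tls12_client_context():
    """Client context that refuses to negotiate anything older than TLS 1.2."""
    ctx = ssl.create_default_context()  # certificate and hostname checks stay enabled
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


def hsts_header(max_age_seconds=31536000, include_subdomains=True):
    """Build the Strict-Transport-Security header as a (name, value) pair."""
    value = f"max-age={max_age_seconds}"
    if include_subdomains:
        value += "; includeSubDomains"
    return ("Strict-Transport-Security", value)


ctx = tls12_client_context()
print(ctx.minimum_version.name)
print(": ".join(hsts_header()))
```

A context like this can be passed to a client library when probing your own endpoints, which complements external scanners by confirming that legacy protocol versions are actually refused.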

Reflection Prompt:

You're deploying a new web application. What specific AWS services and configurations would you use to ensure all user traffic is encrypted in transit from their browser to your application servers, and why?

7. Implement Security Groups and NACLs for Defense-in-Depth

Defense-in-depth is a security strategy that uses multiple independent layers to safeguard your resources. In AWS, you should never rely on a single firewall to protect your data. Instead, combine Security Groups, which are stateful, instance-level virtual firewalls, with Network Access Control Lists (NACLs), which are stateless, subnet-level virtual firewalls. This creates overlapping layers of network traffic filtering. If one layer is misconfigured or fails, another remains in place to provide protection. This strategy is central to AWS security best practices.

This layered approach builds a resilient security posture. It ensures that a single configuration mistake or a point of failure does not result in a total breach. By creating these redundant checkpoints, you increase the cost and difficulty for an attacker to compromise your infrastructure. They are forced to bypass multiple distinct security mechanisms rather than a single control. Adopting this mentality is necessary for maintaining long-term safety in cloud environments.

Why Layered Network Defense is Crucial for Architects and Engineers

Architects and engineers use layered defense to build compliant and resilient architectures. Security Groups act as the first line of defense at the instance or Elastic Network Interface (ENI) level. They are stateful, so if you allow outbound traffic, the corresponding inbound response is automatically permitted. NACLs provide a second line of defense at the subnet boundary. They are stateless, requiring explicit rules for both inbound and outbound traffic, regardless of the Security Group rules. This distinction is a frequent topic in AWS certification exams, particularly the AWS Certified Security – Specialty.

For example, a financial services company might use a Security Group to allow inbound web traffic on port 443 to its application servers. At the same time, it would configure a NACL on the public subnet to allow inbound traffic on port 443 and deny everything else, with a matching outbound rule for the ephemeral ports that carry the responses, because NACLs do not track connections. It might also deny outbound traffic from specific private ranges to the public internet. This blocks reconnaissance scans and unauthorized traffic before it reaches the instances. This dual-layer approach is often required for meeting compliance standards like PCI DSS and SOC 2. Understanding the interaction between these layers is vital for managing enterprise environments where Security Groups focus on application requirements and NACLs apply network-level policies.
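
The stateful-versus-stateless distinction can be made concrete with a toy model. The classes below are simplified stand-ins written for this example, not AWS behavior in full: they track only inbound-initiated flows and match on ports alone.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Packet:
    direction: str   # "in" or "out", relative to the instance
    remote_ip: str
    local_port: int
    remote_port: int


@dataclass
class StatefulFirewall:
    """Security-group-like: replies to an allowed inbound flow pass automatically."""
    inbound_ports: set
    _tracked: set = field(default_factory=set, init=False, repr=False)

    def permits(self, p):
        flow = (p.remote_ip, p.local_port, p.remote_port)
        if p.direction == "in":
            if p.local_port in self.inbound_ports:
                self._tracked.add(flow)   # remember the connection
                return True
            return False
        return flow in self._tracked      # only replies to tracked flows


@dataclass
class StatelessFirewall:
    """NACL-like: each direction is checked against its own rules, with no memory."""
    inbound_ports: object
    outbound_remote_ports: object = ()

    def permits(self, p):
        if p.direction == "in":
            return p.local_port in self.inbound_ports
        return p.remote_port in self.outbound_remote_ports


request = Packet("in", "198.51.100.7", 443, 50000)
reply = Packet("out", "198.51.100.7", 443, 50000)

sg = StatefulFirewall({443})
nacl = StatelessFirewall({443})
nacl_fixed = StatelessFirewall({443}, range(1024, 65536))  # ephemeral ports opened

print(sg.permits(request), sg.permits(reply))
print(nacl.permits(request), nacl.permits(reply))
print(nacl_fixed.permits(request), nacl_fixed.permits(reply))
```

The middle case is the common misconfiguration: the request gets in, but the response is dropped on the way out because no outbound rule covers the client's ephemeral port.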

Actionable Tips for Implementing Defense-in-Depth Network Controls

To implement defense-in-depth, treat each layer as a distinct control and understand their differences:

- Layer 1 - Security Groups (Stateful, Instance-Level): Security Groups deny all inbound traffic by default and support only "allow" rules, so add rules only for the traffic you need. Reference other Security Groups or specific IP addresses for source and destination instead of using `0.0.0.0/0`, unless it is a public server on port 443. For instance, you should limit SSH access on port 22 so it only accepts traffic from a specific Bastion host IP or the Bastion host's Security Group.

- Layer 2 - NACLs (Stateless, Subnet-Level): Use NACLs for broad filtering at the subnet perimeter. They evaluate rules in order from the lowest rule number to the highest and apply the first rule that matches. A common practice is to block known malicious IP addresses or deny entire port ranges, such as inbound UDP ports, that should not be accessed from the internet. Since NACLs are stateless, remember to allow return traffic explicitly in both directions, including the ephemeral port range that clients use for replies.

- Monitor and Validate with VPC Flow Logs: Use VPC Flow Logs to monitor accepted and rejected traffic from both Security Groups and NACLs. This provides visibility into potential threats and helps validate that your rules are working as intended. It also assists in troubleshooting connectivity issues when legitimate traffic is being blocked by a rule you forgot to update.

- Document Each Layer's Purpose: Maintain clear documentation that explains the role of each Security Group and NACL rule. This is essential for troubleshooting, facilitating compliance audits, and preventing security gaps during future configuration changes. Clear labeling and naming conventions within the AWS console can also help teams identify the purpose of a rule at a glance.
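As a rough illustration of the Flow Logs tip, the sketch below counts rejected connections per source address and destination port. It assumes records in the default (version 2) flow log format, already read as text lines from wherever your logs are delivered; the sample records and function names are made up for the example.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple

# Field order of the default (version 2) flow log record format.
FIELDS = ("version account_id interface_id srcaddr dstaddr srcport dstport "
          "protocol packets bytes start end action log_status").split()

# Made-up sample records using documentation IP ranges.
SAMPLE = [
    "2 123456789012 eni-0a1b2c3d 203.0.113.12 10.0.1.5 49152 22 6 1 40 "
    "1700000000 1700000060 REJECT OK",
    "2 123456789012 eni-0a1b2c3d 203.0.113.12 10.0.1.5 49153 22 6 1 40 "
    "1700000000 1700000060 REJECT OK",
    "2 123456789012 eni-0a1b2c3d 198.51.100.7 10.0.1.5 50000 443 6 10 8400 "
    "1700000000 1700000060 ACCEPT OK",
]


def parse_record(line: str) -> Dict[str, str]:
    """Split one space-separated record into named fields."""
    return dict(zip(FIELDS, line.split()))


def top_rejected(lines: Iterable[str],
                 limit: int = 5) -> List[Tuple[Tuple[str, str], int]]:
    """Count REJECT records per (source address, destination port)."""
    counts: Counter = Counter()
    for line in lines:
        record = parse_record(line)
        if record.get("action") == "REJECT":
            counts[(record["srcaddr"], record["dstport"])] += 1
    return counts.most_common(limit)
```

A repeated source hitting port 22 with `REJECT` suggests scanning. A legitimate source showing up with `REJECT` usually points to a rule that needs updating.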

Building a multi-layered network defense is a vital skill for AWS professionals. Solutions Architects use these tools to design secure environments, while DevOps Engineers focus on their implementation and automation. For a more detailed breakdown of these components, you can explore the various network security components in AWS.

Reflection Prompt:

Explain the key differences between Security Groups and NACLs, and provide a scenario where a NACL would catch traffic that a Security Group might miss because of a misconfiguration.

8. Enable AWS Config for Compliance and Configuration Management

Maintaining a stable and secure configuration in a fluid cloud environment is a significant challenge. AWS Config serves as a resource configuration historian and compliance engine to solve this. It continuously monitors and records your AWS resource configurations, letting you evaluate how your resources are set up against desired policies. This provides a clear path to following AWS security best practices.

Why AWS Config is Essential for Governance and Auditing

AWS Config provides the visibility and control needed to enforce internal policies and meet external regulatory requirements. It answers critical questions for auditors and security teams: "What did my production security group look like last Tuesday?" or "Are all my S3 buckets preventing public access and enforcing encryption?" For example, a financial services company can use AWS Config to automatically verify that all Amazon RDS databases have encryption enabled at rest, flagging noncompliant resources for immediate attention. Healthcare organizations can use it to ensure EC2 security group configurations align with HIPAA controls, providing a record of compliance over time.

This continuous oversight helps prevent configuration drift, where systems move away from their secure baseline. Drift is a common cause of security vulnerabilities and operational issues. Understanding AWS Config's capabilities is necessary for the AWS Certified Security – Specialty and AWS Certified Solutions Architect – Professional exams.

Actionable Tips for Advanced AWS Config Implementation

To move from basic monitoring to proactive compliance enforcement, integrate these strategies into your security operations:

- Start with Managed Rules, Then Customize: Begin by deploying AWS managed Config rules. These pre-built rules cover common security best practices, such as checking for open inbound SSH/RDP traffic or ensuring MFA is active for the root account. These offer immediate value with low effort. Once you are comfortable, create custom Config rules using AWS Lambda for specific organizational policies.

- Automate Remediation Carefully and Incrementally: Use AWS Systems Manager Automation documents or AWS Lambda functions triggered by EventBridge to fix noncompliant resources automatically. Start with low-risk fixes, such as re-enabling logging on a CloudTrail trail or applying a default S3 bucket policy, before automating more critical changes. Always test these automations in a sandbox environment before applying them to production.

- Centralize Compliance Views with Aggregators: In environments with many accounts, use a Config Aggregator to collect configuration and compliance data from all member accounts into a single aggregator account, such as the management account or a delegated administrator account. This provides a unified dashboard and reporting mechanism for a view of your entire organization’s compliance posture without logging into each account separately.

- Integrate for Real-Time Alerts and Workflows: Connect AWS Config to Amazon EventBridge to trigger real-time notifications via SNS or start automated workflows whenever a resource becomes noncompliant. This ensures security teams can respond to configuration changes that violate policy immediately, reducing the window of vulnerability in your cloud environment.
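The EventBridge integration above can be sketched as an event pattern plus a small message formatter. The pattern and field names reflect the shape of Config compliance-change events as commonly documented, so verify them against a real event in your account. The function name and sample values are hypothetical.

```python
import json

# Event pattern matching Config rule evaluations that become NON_COMPLIANT.
NONCOMPLIANT_PATTERN = {
    "source": ["aws.config"],
    "detail-type": ["Config Rules Compliance Change"],
    "detail": {"newEvaluationResult": {"complianceType": ["NON_COMPLIANT"]}},
}

# EventBridge expects the pattern as a JSON string, for example when
# passed as the EventPattern argument of the put_rule API call.
PATTERN_JSON = json.dumps(NONCOMPLIANT_PATTERN)


def format_alert(event: dict) -> str:
    """Build a one-line notification, e.g. for an SNS message body."""
    detail = event["detail"]
    status = detail["newEvaluationResult"]["complianceType"]
    return (f"{detail['configRuleName']}: {detail['resourceType']} "
            f"{detail['resourceId']} is {status}")
```

A Lambda function or SNS input transformer subscribed to this rule could use a formatter like this to produce readable alerts for the security team.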

Reflection Prompt:

How would AWS Config help a DevOps team maintain a consistent security posture across development, staging, and production environments, and what types of non-compliance would it most effectively detect?

9. Use AWS Secrets Manager for Credential and Secret Rotation

Hardcoding sensitive information like database passwords, API keys, or OAuth tokens directly into application source code or configuration files is a high-stakes security risk. Storing secrets in plain text often leads to accidental exposure through version control systems or insecure backups. AWS Secrets Manager provides a centralized service to store, manage, and rotate these credentials throughout their lifecycle. This approach is a core AWS security best practice because it decouples sensitive data from your application logic. It reduces the overall attack surface and ensures that even if source code is leaked, the secrets remain protected behind specific identity permissions.

Caption: AWS Secrets Manager centralizes the management and automated rotation of sensitive credentials, enhancing overall security.

Why Secrets Manager is Crucial for Secure Application Development

Centralizing secret management gives you clear visibility into who accesses credentials and when they are used. Instead of embedding a database password in a configuration file, an application uses its assigned IAM role to fetch the secret from the service at runtime. This model supports strong security habits, such as rotating credentials without redeploying code or manually updating server settings. This provides a major operational advantage for DevOps teams. Secrets Manager supports rotation schedules as frequent as every four hours, so teams with strict requirements can keep credential lifetimes very short. This strategy limits the window of opportunity for an attacker if a credential is ever stolen. Services such as Amazon RDS, Amazon Redshift, and Amazon DocumentDB connect directly with Secrets Manager to handle password updates automatically, which removes manual work.

This programmatic retrieval and frequent rotation align with the Principle of Least Privilege and Zero Trust security models. Applications receive only the credentials they need exactly when they need them. Understanding these workflows is essential for the AWS Certified DevOps Engineer – Professional and the AWS Certified Security – Specialty exams.
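A minimal sketch of the runtime retrieval pattern is shown below. It assumes the secret is stored as a JSON string and that `client` behaves like the object returned by `boto3.client("secretsmanager")`. The function name and the secret name in the usage comment are hypothetical.

```python
import json


def load_db_credentials(client, secret_id: str) -> dict:
    """Fetch a JSON-formatted secret at runtime instead of reading it
    from source code or a configuration file.

    `client` is expected to expose get_secret_value(SecretId=...), as the
    boto3 Secrets Manager client does.
    """
    response = client.get_secret_value(SecretId=secret_id)
    return json.loads(response["SecretString"])


# Typical use inside an application running under an IAM role:
#
#   import boto3
#   creds = load_db_credentials(boto3.client("secretsmanager"),
#                               "MyDatabaseSecret")
#   connect(user=creds["username"], password=creds["password"])
```

Passing the client in as a parameter keeps the function easy to test with a stand-in object. The application always reads the current secret version, so a rotation does not require a redeploy.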

Actionable Tips for Integrating Secrets Manager

To use Secrets Manager as part of your security strategy, move beyond simple storage and adopt these active management techniques:

- Implement Automated Rotation Schedules: Set up automated rotation for supported services like RDS or Redshift. For other systems, use a Lambda function to trigger the update. Configuring a rotation interval between 30 and 90 days helps minimize the lifespan of any single credential. This practice satisfies common compliance requirements and limits the potential damage from a leaked key.

- Use Fine-Grained IAM Policies for Access: Write specific IAM policies that restrict applications to only the secrets they need to function. Do not use a wildcard (`*`) as the resource for the `secretsmanager:GetSecretValue` action. Instead, specify the exact ARN of the secret. For example: `arn:aws:secretsmanager:REGION:ACCOUNT_ID:secret:MyDatabaseSecret-XXXXXX`.

- Monitor for Anomalies and Access Patterns: Review CloudTrail logs to identify failed retrieval attempts or unusual activity. A sudden increase in failed `secretsmanager:GetSecretValue` requests may signal a misconfigured application or a brute-force attempt. Set up CloudWatch Alarms to notify security teams when these events occur.

- Rotate Secrets On-Demand When Necessary: Do not wait for the schedule if a security event occurs. Manually trigger a rotation whenever a developer with access leaves the company or if you suspect a system compromise. This ensures that old credentials become useless immediately.

- Use Client-Side Caching for Performance: For high-traffic applications, use the AWS client-side caching libraries. Caching reduces the frequency of API calls to the Secrets Manager service. This improves application latency and keeps costs lower while ensuring the application can fetch the latest secret version when the cache expires.
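To make the fine-grained policy tip concrete, here is a hedged sketch that builds a policy document scoped to a single secret. The helper name is hypothetical, and the ARN prefix check assumes the standard `aws` partition.

```python
import json


def read_single_secret_policy(secret_arn: str) -> str:
    """Return an IAM policy document that allows reading one secret only."""
    if "*" in secret_arn:
        raise ValueError("use the exact secret ARN, not a wildcard")
    # Assumption: standard partition; other partitions use another prefix.
    if not secret_arn.startswith("arn:aws:secretsmanager:"):
        raise ValueError("expected a Secrets Manager ARN")
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "secretsmanager:GetSecretValue",
                "Resource": secret_arn,
            }
        ],
    }
    return json.dumps(policy, indent=2)
```

The resulting JSON can be attached to the application's role. Rejecting wildcards in the helper is a small guard against the broad grants the tip warns about.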

Reflection Prompt:

Beyond database credentials, what other types of sensitive information (e.g., API keys, OAuth tokens) could benefit from being managed and rotated via AWS Secrets Manager in a typical application?

10. Enable AWS GuardDuty for Intelligent Threat Detection

AWS GuardDuty functions as a threat detection service that continuously monitors your AWS accounts and workloads for malicious activity and unauthorized behavior. Unlike traditional tools that rely on static rules, GuardDuty uses machine learning, anomaly detection, and integrated threat intelligence feeds to identify risks in near real-time. This proactive method provides an automated security analyst for your cloud environment, identifying threats that might otherwise go unnoticed.

Why GuardDuty is Your Cloud Security Analyst

Human security teams cannot manually analyze the massive volume of logs generated by AWS CloudTrail, VPC Flow Logs, and DNS query logs across many accounts. At scale, the data is simply too large to parse. GuardDuty automates this scanning process to uncover a wide range of issues, including:

- Reconnaissance: Attackers scanning for open ports or making unauthorized API calls to map your network layout.

- Compromised EC2 instances: Detecting instances that serve malware, perform port scanning, or communicate with known command-and-control servers.

- Unauthorized data access: Flagging unusual S3 bucket access patterns from unknown locations or via compromised credentials.

- Cryptocurrency mining: Identifying unauthorized mining software running on your compute resources.

For example, a financial services firm can use GuardDuty to catch potential internal threats. If an employee suddenly downloads large amounts of data from a sensitive S3 bucket at 3:00 AM from an unfamiliar IP address, GuardDuty generates an alert. This capability changes a difficult "needle-in-a-haystack" search into an immediate, actionable notification. The service provides high-fidelity alerts with context without requiring you to manage security software or update threat lists manually. Understanding these detection patterns is a major component of the AWS Certified Security – Specialty exam.

Actionable Tips for Maximizing GuardDuty's Value

To maximize the value of GuardDuty, go beyond simple activation and integrate it deeply into your security operations:

- Centralize and Automate Enablement Across Accounts: Use AWS Organizations to turn on GuardDuty across every account in your organization. Designate a dedicated "security tooling" account as the GuardDuty delegated administrator so that enablement is managed and findings are aggregated in one location. This gives you threat detection coverage across your entire footprint and ensures that no account is left unmonitored.

- Enable in All Regions (Even Unused Ones): Activate GuardDuty in every AWS region, including those where you do not have active workloads. Attackers often deploy resources like cryptominers in quiet or forgotten regions to avoid detection by administrators. Monitoring these areas ensures that unauthorized resource deployment is flagged regardless of the location.

- Automate Remediation for Rapid Response: Use Amazon EventBridge to start AWS Lambda functions in response to specific GuardDuty findings. If GuardDuty detects a malicious IP communicating with an EC2 instance, a Lambda function can automatically update a security group to block that IP. It can also isolate the compromised instance for forensic analysis, moving your posture from passive detection to active defense.

- Integrate and Correlate Findings: Forward GuardDuty findings to AWS Security Hub or a third-party SIEM system. This allows you to see GuardDuty alerts alongside data from web application firewalls and network logs. A single view of all security alerts reduces fatigue for your team and speeds up the incident response process.

- Customize and Refine Trusted IP Lists: Keep your trusted IP lists current, including corporate VPN ranges and known internal testing tools. By updating these lists and your custom threat intelligence feeds, you help GuardDuty ignore safe activity. This improves the signal-to-noise ratio and allows your security team to focus on the most genuine, high-priority threats.
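The automated remediation tip can be sketched as the core of a Lambda function that isolates an instance named in a GuardDuty finding. The quarantine Security Group ID and the severity threshold are assumptions for illustration, and `ec2_client` is expected to behave like `boto3.client("ec2")`. Test this kind of automation in a sandbox before using it in production.

```python
from typing import Optional

QUARANTINE_SG_ID = "sg-0123456789abcdef0"  # hypothetical group with no rules
MIN_SEVERITY = 7.0  # assumption: act only on high-severity findings


def instance_to_isolate(event: dict) -> Optional[str]:
    """Return the EC2 instance ID from a qualifying GuardDuty finding."""
    if event.get("source") != "aws.guardduty":
        return None
    detail = event.get("detail", {})
    if detail.get("severity", 0) < MIN_SEVERITY:
        return None
    resource = detail.get("resource", {})
    if resource.get("resourceType") != "Instance":
        return None
    return resource.get("instanceDetails", {}).get("instanceId")


def isolate(ec2_client, event: dict) -> Optional[str]:
    """Swap the instance's Security Groups for the quarantine group."""
    instance_id = instance_to_isolate(event)
    if instance_id is not None:
        # Replaces every Security Group attached to the instance.
        ec2_client.modify_instance_attribute(
            InstanceId=instance_id, Groups=[QUARANTINE_SG_ID]
        )
    return instance_id
```

Isolating rather than terminating keeps the instance available for forensic analysis. Low-severity findings are left for human triage in this sketch.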

Reflection Prompt:

If GuardDuty detects an EC2 instance communicating with a known command-and-control server, what immediate automated and manual steps would you take as a security engineer?

AWS Security Best Practices — 10-Point Comparison for IT Professionals

Review the comparison below to evaluate implementation steps and specific benefits for each AWS security control. This table serves as a reference for your cloud security planning or when preparing for the SCS-C03 AWS Certified Security - Specialty exam.

| Control | Implementation Complexity | Resource Requirements | Expected Outcomes | Ideal Use Cases | Key Advantages |
|---|---|---|---|---|---|
| Identity and Access Management (IAM) - Principle of Least Privilege | Medium | IAM policies, RBAC design, auditing via Access Analyzer, and ongoing administrative time. | Tightened privilege sets and a smaller "blast radius" if a security breach occurs. | Large organizations, microservices architectures, and highly regulated cloud environments. | Shrinks the total attack surface and improves auditability while enhancing compliance. |
| Enable Multi-Factor Authentication (MFA) for Root and Privileged Accounts | Easy | MFA hardware or software tokens, reliable backup and recovery processes, and user support. | Dramatically lower risk of account takeovers resulting from compromised login credentials. | AWS Root account, high-privilege IAM users, and production environment access roles. | Offers highly effective protection against password-based attacks and credential compromise. |
| Enable CloudTrail for Full-Scope Audit Logging | Easy | S3 storage buckets, log analysis tools such as CloudWatch Logs or Athena, and retention planning. | Full API and activity audit trails for deep forensic analysis and compliance reporting requirements. | Compliance audits like PCI or HIPAA, incident response, and multi-account environment setups. | Acts as the definitive source of truth for AWS activity with solid forensic capability. |
| Implement VPC Security with Network Access Controls | Medium | Network engineers, Security Groups, NACLs, VPC Flow Logs, and detailed subnet design. | Clear network segmentation that prevents lateral movement by attackers within the cloud. | Handling sensitive workloads, HIPAA/PCI compliance, and private service-to-service communication. | Provides fine-grained traffic control, network isolation, and deep security layers. |
| Encrypt Data at Rest Using AWS KMS and Encryption Services | Medium | KMS keys and policies, potential CloudHSM, key rotation schedules, and monitoring. | Strong data confidentiality and preparation for strict compliance audits like GDPR and HIPAA. | RDS and DynamoDB databases, S3 buckets, EBS volumes, and regulated data stores. | Offers centralized key management and clear audit trails for key usage and protection. |
| Enable Encryption in Transit Using TLS/SSL | Easy | TLS certificates via ACM, secure TLS configurations for ELB or CloudFront, and renewal automation. | Prevention of data eavesdropping and Man-in-the-Middle (MITM) attacks during transmission. | Public web applications, APIs, and internal service-to-service communication. | Employs industry-standard protection and meets the vast majority of compliance mandates. |
| Implement Security Groups and NACLs for Defense-in-Depth | Medium | Continuous rule management, technical documentation, and monitoring via VPC Flow Logs. | Layered network defense that eliminates single points of failure in the security stack. | Complex multi-tier architectures and compliance-driven cloud environments. | Provides redundant filtering mechanisms and catches misconfigurations to improve resilience. |
| Enable AWS Config for Compliance and Configuration Management | Medium | Managed or custom Config rules, aggregators, remediation via Lambda, and storage fees. | Visibility into compliance status, historical configuration tracking, and automated drift detection. | Multi-account compliance management, auditing, change tracking, and automated cloud governance. | Automates compliance checks and provides proactive identification of security posture deviations. |
| Use AWS Secrets Manager for Credential and Secret Rotation | Medium | Secrets Manager service, application integration, and custom rotation logic via AWS Lambda. | Elimination of hardcoded credentials along with automatic rotation and improved auditability. | Database credentials, API keys, OAuth tokens, and CI/CD pipeline secrets. | Secure storage and retrieval combined with automatic credential lifecycle management and audit logs. |
| Enable AWS GuardDuty for Intelligent Threat Detection | Easy | Monitoring fees, SIEM or EventBridge integration, and security analyst review or triage. | Early detection of malicious activity and anomaly identification across various AWS services. | Active threat hunting, automated anomaly detection, and incident response workflows. | Features findings driven by machine learning with low initial setup overhead and updated intelligence. |

From Theory to Practice: Embedding Security into Your AWS DNA

The AWS service catalog is vast, but hardening your cloud environment does not need to be an impossible task. For IT professionals, the transition from learning security concepts to implementing them is a process of steady improvement and constant vigilance. This guide has broken down ten foundational pillars that create a strong security posture, turning abstract ideas into specific, actionable steps. Gaining proficiency in these AWS security best practices is not about checking boxes for a compliance auditor. Instead, it involves building a resilient infrastructure that is secure by design, capable of protecting applications and data against modern threats.

The central theme across these practices—from enforcing the Principle of Least Privilege in IAM to using GuardDuty for automated threat detection—is a shift from a reactive to a proactive mindset. This strategy focuses on creating defense layers that anticipate and stop threats before they can spread through the network. Visualize this as a secure facility where every part, from the external perimeter (VPCs and NACLs) to the internal storage (data encryption with KMS), is independently hardened and monitored. This focus on layered security is a primary component of current AWS certification exams, such as the AWS Certified Cloud Practitioner (CLF-C02) and the AWS Certified Security - Specialty (SCS-C03).

Key Takeaways for Building a Resilient Cloud Posture

To turn these practices into immediate operational priorities, focus on three specific areas: Access Control, Data Protection, and Continuous Monitoring.

- Mastering Access Control: The first line of defense is controlling identity and access. This begins with the strict application of the Principle of Least Privilege for all IAM roles, groups, and users. You should use IAM Policy Simulator to test permissions and ensure no unnecessary access is granted. Enforce multi-factor authentication (MFA) on all accounts, especially those with administrative rights, using hardware tokens or virtual MFA apps. Securely handle application credentials by using AWS Secrets Manager rather than hardcoding strings in your source code. Identity failures remain the primary cause of cloud data breaches.

- Data Protection Mechanisms: Protecting data requires a strategy for both static and moving information. Encrypt data at rest by using the AWS Key Management Service (KMS) for S3 buckets, EBS volumes, and RDS instances. Using customer managed keys gives you more control over key rotation and access policies than the default AWS managed keys. For data in transit, ensure all endpoints use TLS 1.2 or higher. This prevents unauthorized users from intercepting or modifying data as it travels between your users and the AWS network or between internal services.

- Automated Monitoring and Logging: Visibility is the only way to ensure your security controls are working. Services like CloudTrail, AWS Config, and GuardDuty provide the necessary oversight. CloudTrail records API activity in your account (management events by default, with data events available as an opt-in), documenting who performed an action and when they did it, which is vital for forensic investigations. AWS Config tracks resource changes and alerts you if a configuration drifts away from your defined security baseline. GuardDuty operates as an automated analyst, scanning VPC Flow Logs, DNS logs, and CloudTrail events to identify malicious activity, such as unauthorized crypto-mining or communication with known command-and-control servers.
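For the data-in-transit point above, a client can refuse anything older than TLS 1.2. The sketch below uses Python's standard `ssl` module. The function name is hypothetical, and it covers only the client side of a connection, not the configuration of a load balancer or CloudFront distribution.

```python
import ssl


def make_strict_client_context() -> ssl.SSLContext:
    """Create a client TLS context that rejects protocols below TLS 1.2.

    create_default_context() already enables certificate verification and
    hostname checking; this only raises the minimum protocol version.
    """
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

A context built this way can be passed to standard-library clients, for example as the `context` argument of `urllib.request.urlopen`.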

Your Actionable Next Steps: A Self-Audit Checklist

Securing an AWS environment is a continuous operational cycle rather than a project with a fixed end date. Begin by performing a detailed audit of your current setup against the ten practices found in this article. Use this checklist to guide your initial review:

- Review IAM Policies: Look at your most privileged roles first. Are permissions restricted to specific resource ARNs rather than using wildcards? Check if `Condition` blocks are used to restrict access based on IP address or time of day. Ensure MFA is active for every human user.

- Verify Encryption Settings: Inspect your S3 buckets to confirm that "Block Public Access" is enabled and default encryption is active. Check EBS volumes and RDS snapshots to ensure they are encrypted. Update your account settings to enforce EBS encryption for all new volumes created in the region.

- Audit Network Controls: Review Security Group rules to find any that allow `0.0.0.0/0` on management ports like 22 (SSH) or 3389 (RDP). Replace these with specific CIDR blocks. Ensure Network Access Control Lists (NACLs) provide a secondary layer of protection for your subnets.

- Confirm Logging and Monitoring: Verify that CloudTrail is active in all regions, not just the ones you currently use. Confirm that GuardDuty is enabled and that alerts are sent to an SNS topic or an email address that your security team monitors daily.

- Secrets Management Audit: Identify any credentials stored in environment variables or configuration files. Move these to AWS Secrets Manager or AWS Systems Manager Parameter Store. Configure Secrets Manager to rotate passwords for RDS databases automatically every 30 to 90 days.
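The network-controls item in the checklist lends itself to a quick script. The sketch below scans Security Group data shaped like the `SecurityGroups` list returned by the EC2 `describe_security_groups` call and reports rules that expose SSH or RDP to the whole internet. The function name and the choice of ports are illustrative assumptions.

```python
from typing import Iterable, List, Tuple

MANAGEMENT_PORTS = {22: "SSH", 3389: "RDP"}
OPEN_CIDRS = {"0.0.0.0/0", "::/0"}


def open_management_rules(security_groups: Iterable[dict]) -> List[Tuple[str, str, int]]:
    """Return (group ID, service, port) for every world-open management rule."""
    findings = []
    for group in security_groups:
        for perm in group.get("IpPermissions", []):
            protocol = perm.get("IpProtocol")
            if protocol not in ("tcp", "udp", "-1"):
                continue  # skip ICMP and other protocols without ports
            cidrs = {r.get("CidrIp") for r in perm.get("IpRanges", [])}
            cidrs |= {r.get("CidrIpv6") for r in perm.get("Ipv6Ranges", [])}
            if not cidrs & OPEN_CIDRS:
                continue
            # Protocol "-1" means all traffic and carries no port range.
            low = perm.get("FromPort", 0)
            high = perm.get("ToPort", 65535)
            for port, name in MANAGEMENT_PORTS.items():
                if protocol == "-1" or low <= port <= high:
                    findings.append((group["GroupId"], name, port))
    return findings


# Typical use (requires credentials and ec2:DescribeSecurityGroups):
#
#   import boto3
#   groups = boto3.client("ec2").describe_security_groups()["SecurityGroups"]
#   for finding in open_management_rules(groups):
#       print(finding)
```

Each finding is a candidate for replacement with a specific CIDR block or a reference to a bastion host's Security Group. For large accounts, the describe call is paginated, which this sketch does not handle.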

By following these steps, you reduce the available attack surface and help create an engineering culture where security is part of the development lifecycle. This dedication protects your organization from data loss and financial penalties while building confidence with your customers. The goal is to make security a tool that supports growth, allowing your developers to build and deploy applications on AWS with speed and safety.

Are you ready to turn this technical knowledge into a career-defining certification? The security concepts covered here are essential for passing your exams and proving your expertise in the field. MindMesh Academy provides detailed, practical courses to help you master AWS security best practices and prepare for your next certification. Visit MindMesh Academy to see our current training options and begin building a more secure cloud environment. Your path to becoming a certified AWS security professional starts with these practical steps.

Written by

Alvin Varughese

Founder, MindMesh Academy

Alvin Varughese is the founder of MindMesh Academy and holds 15 professional certifications including AWS Solutions Architect Professional, Azure DevOps Engineer Expert, and ITIL 4. He's held senior engineering and architecture roles at Humana (Fortune 50) and GE Appliances. He built MindMesh Academy to share the study methods and first-principles approach that helped him pass each exam.